Last fall I watched a junior marketer on my team ship a “perfect” AI-written email sequence in 22 minutes. It was technically flawless…and it landed like cardboard. That tiny flop became my 2026 wake-up call: AI can scale output, sure, but strategy is still a human job. This guide is how I’d build a Marketing AI strategy now—equal parts ambition, measurement, and a little healthy suspicion.

1) My 2026 marketing trends gut-check: AI everywhere, value nowhere?

My 2026 gut-check is simple: AI is everywhere, but real marketing value is still uneven. After working through ideas from The Complete Marketing AI Strategy Guide, I stopped treating AI like a shiny tool I “try” and started treating it like plumbing. It should be embedded across the work: creative drafts, campaign planning, reporting, and even vendor workflows like contract reviews and negotiation prep. If it’s not built into the system, it becomes another tab open—another distraction.

Where AI helps me ship faster vs. where it makes more noise

I now map every “AI-driven marketing” promise into two buckets: speed or noise. This is a painful distinction, because many AI marketing tools look impressive in a demo but add steps in real life. I’m not chasing “AI content” or “AI personalization” as labels. I’m chasing fewer handoffs, fewer revisions, and fewer meetings that exist only to fix avoidable mistakes.

- Speed: first drafts, variant testing ideas, brief outlines, QA checklists, tagging and summarizing research, contract clause comparisons.

- Noise: auto-generated campaigns with weak positioning, dashboards that don’t change decisions, “insights” that repeat what I already know.

My rule for 2026: cycle time or it doesn’t count

I set one rule and I stick to it: if it doesn’t reduce campaign production cycles or rework, it’s a demo—not a strategy. That means I measure AI by practical outcomes like:

- How many days it removes from concept-to-launch

- How many review rounds it prevents

- How often it catches errors before they hit production

“If AI doesn’t change the timeline, it won’t change the business.”

AI is a sous-chef, not the head chef

The analogy that keeps me grounded: AI is a sous-chef. It can prep ingredients fast—summaries, options, drafts, variations. But I still decide the menu and the vibe: the positioning, the promise, the trade-offs, and what we will not do.

A quick lesson from competitive research

The first time I used AI summaries for competitive research, I got 10x the breadth in a day. It felt like a breakthrough, until I realized I had to double-check every conclusion. The summaries were great at coverage, but shaky on nuance. Now I use AI to scan wide, then I verify the claims that will shape strategy.

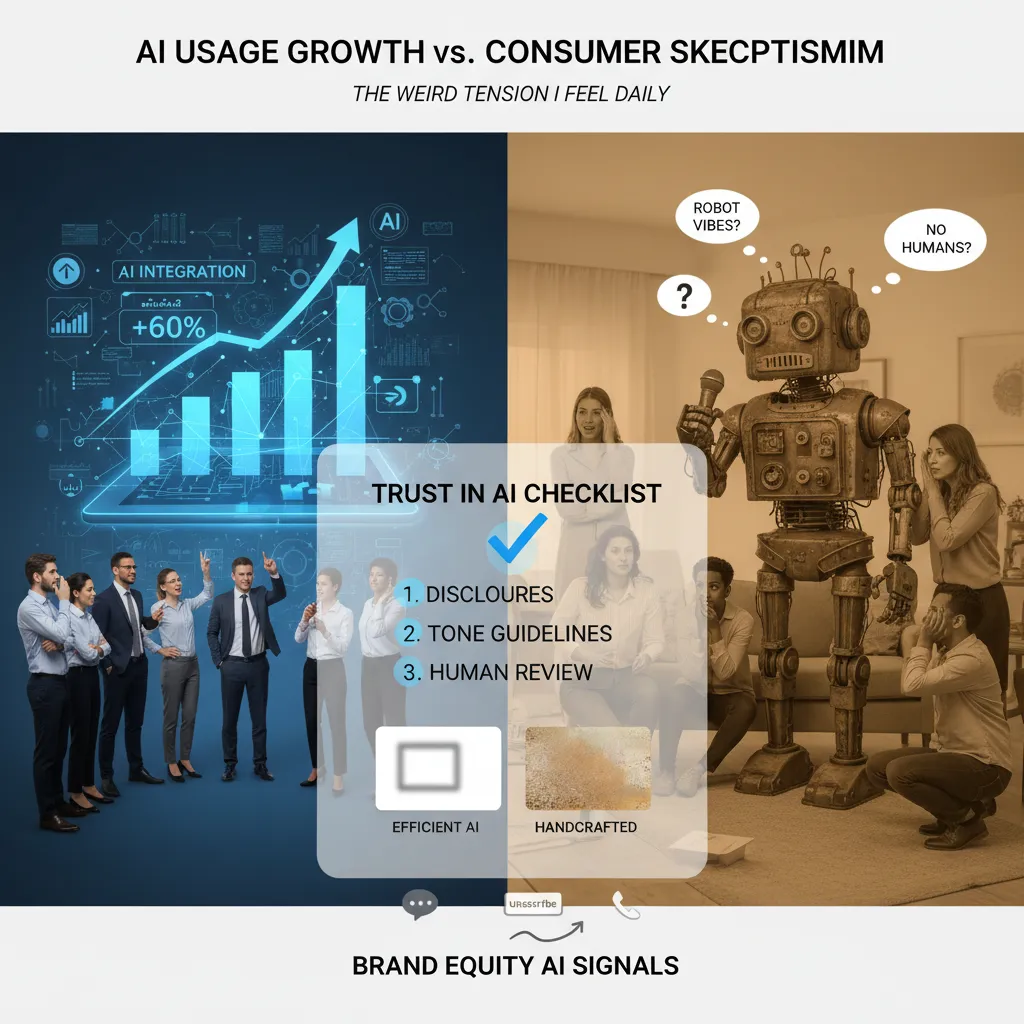

2) AI usage growth vs. consumer AI skepticism (the weird tension I feel daily)

Inside my company, I plan for AI usage growth because it’s the only way to keep up with content volume, speed, and personalization. But outside my company, I assume the audience is getting more sensitive to “robot vibes.” I feel that tension daily: the same tools that help me ship faster can also make my marketing feel generic, over-polished, or oddly confident in a way humans usually aren’t.

My “trust in AI” checklist (so speed doesn’t break credibility)

From The Complete Marketing AI Strategy Guide, my biggest takeaway is that AI is not just a production tool—it’s a trust variable. So I use a simple checklist before anything goes live, especially brand-defining assets like homepages, pricing pages, founder emails, and flagship ads.

- Disclosure rules: When AI meaningfully shaped the message, I add a clear note (not a legal essay). Example: “Drafted with AI, reviewed by our team.”

- Tone guidelines: No hype, no fake certainty, no “as an AI” language, and no generic empathy lines.

- Human review step: A real person must approve claims, examples, and anything that could affect brand trust.

I A/B test “efficient AI” vs “handcrafted” and watch brand equity signals

I often create two versions of the same asset: one that leans into AI efficiency (shorter, cleaner, more structured), and one that’s intentionally handcrafted (more opinion, more texture, more human edges). Then I track performance and trust signals:

- Comment sentiment (people calling it “salesy,” “generic,” or “written by a bot”)

- Unsubscribes and spam complaints

- Sales calls: “I liked your perspective” vs “I saw your ad everywhere”
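
For the countable signals above (unsubscribes, spam complaints), a quick sanity check is whether the gap between the two versions is bigger than noise. Here is a minimal sketch using a standard two-proportion z-test. The function name and the sample counts are my own illustration, not results from a real send, and it assumes both versions went to independent, reasonably large audiences.

```python
from math import erf, sqrt


def two_proportion_z(x_a: int, n_a: int, x_b: int, n_b: int) -> tuple[float, float]:
    """Compare two rates (e.g. unsubscribes / sends) with a pooled z-test.

    x_a, x_b: event counts for version A and version B
    n_a, n_b: audience sizes for version A and version B
    Returns (z, two_sided_p_value).
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("audience sizes must be positive")
    p_a, p_b = x_a / n_a, x_b / n_b
    pooled = (x_a + x_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in the data (all or none had the event)")
    z = (p_a - p_b) / se
    # Standard normal CDF via erf, then a two-sided p-value.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)


# Illustrative counts only: 30 unsubscribes from 1,000 "efficient AI" sends
# versus 10 unsubscribes from 1,000 "handcrafted" sends.
z, p = two_proportion_z(30, 1000, 10, 1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value only says the difference is unlikely to be chance. It says nothing about why, so I still read the comments and listen to the sales calls.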

One intentionally non-optimized channel as my authenticity benchmark

I keep a small newsletter that I don’t fully optimize. No heavy automation, no aggressive personalization, no endless subject line testing. It’s my baseline for what my voice sounds like when I’m not chasing metrics. If my main channels drift too far from that feel, I pull them back.

Scenario planning: when a competitor gets roasted for obvious AI content

I assume it will happen: a competitor ships clearly AI-generated posts, customers screenshot it, and the comments turn brutal. I pre-write my response plan:

- Acknowledge the moment without dunking on them.

- Re-state our standard: human review, clear disclosures, and accuracy checks.

- Offer a simple proof point (process, examples, or policy) instead of defensiveness.

My goal isn’t to hide AI. It’s to make sure AI never becomes the loudest voice in my brand.

3) Synthetic data sources: my favorite idea… that I don’t fully trust yet

Synthetic data is one of my favorite tools in a modern Marketing AI stack, mostly because it helps me move when my first-party data is thin, messy, or trapped in approvals. If legal review, data access tickets, or platform limits slow me down, I’ll use synthetic data sources to run scenario modeling: “What if CPC rises 20%?” “What if conversion rate drops on mobile?” “What if we shift budget from search to retail media?” It’s fast, cheap, and great for exploring options before I ask anyone for budget.
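
The simplest version of a "What if CPC rises 20%?" scenario is plain funnel arithmetic. This is a minimal sketch, assuming a one-step funnel where clicks equal budget divided by CPC and conversions equal clicks times conversion rate. The `Scenario` class, the function names, and every number are hypothetical placeholders, not real campaign data.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Scenario:
    """Inputs for a one-step paid-media funnel. All values are assumed, not observed."""
    budget: float            # total spend
    cpc: float               # cost per click
    conversion_rate: float   # conversions per click, between 0 and 1


def projected_conversions(s: Scenario) -> float:
    """Clicks bought with the budget, times the share of clicks that convert."""
    clicks = s.budget / s.cpc
    return clicks * s.conversion_rate


def cost_per_acquisition(s: Scenario) -> float:
    """Spend divided by projected conversions."""
    return s.budget / projected_conversions(s)


# Hypothetical baseline, then the same plan with CPC up 20%.
baseline = Scenario(budget=10_000.0, cpc=2.0, conversion_rate=0.04)
cpc_up_20 = replace(baseline, cpc=baseline.cpc * 1.20)

for label, s in (("baseline", baseline), ("CPC +20%", cpc_up_20)):
    print(f"{label}: {projected_conversions(s):.0f} conversions, "
          f"CPA {cost_per_acquisition(s):.2f}")
```

With a fixed budget and conversion rate, a 20% CPC increase pushes cost per acquisition up by the same 20%. That is the kind of direction a scenario can give me before any real data exists.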

My “synthetic data balance” rule

I keep a simple rule that protects me from getting too excited: synthetic helps me ask smarter questions; real data decides the budget. In practice, I treat synthetic outputs like a draft plan, not a forecast. It can show me where to look, what to test, and which levers matter most. But I won’t lock spend based on simulated performance alone.

- Synthetic data = direction, hypotheses, scenario ranges

- Observed data = final targets, budget, and performance claims

I document what was simulated vs. observed

This sounds boring, but it saves me later. I label every chart, dashboard, and model output with what was simulated and what was measured. Otherwise, my future self (or a stakeholder) can accidentally treat guesses like facts.

| Field | How I label it |
|---|---|
| Inputs | Observed / Assumed / Synthetic |
| Outputs | Scenario estimate (not actual) |
| Decision use | Explore / Test / Fund |
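
If the labels live in a spreadsheet or a script, they can also be enforced rather than just written down. The sketch below is one possible encoding of the table above. The mapping from input labels to decision use (all observed means Fund, a mix means Test, nothing observed means Explore) is my own assumption for illustration, as are all the class and function names.

```python
from dataclasses import dataclass
from enum import Enum


class Provenance(Enum):
    OBSERVED = "observed"
    ASSUMED = "assumed"
    SYNTHETIC = "synthetic"


class DecisionUse(Enum):
    EXPLORE = "explore"
    TEST = "test"
    FUND = "fund"


@dataclass(frozen=True)
class LabeledMetric:
    name: str
    value: float
    provenance: Provenance


def allowed_use(inputs: list[LabeledMetric]) -> DecisionUse:
    """Return the strongest decision these inputs can support.

    Assumed rule: only fully observed inputs can justify funding;
    a mix of observed and non-observed inputs supports a test;
    anything with no observed inputs is exploration only.
    """
    kinds = {m.provenance for m in inputs}
    if kinds == {Provenance.OBSERVED}:
        return DecisionUse.FUND
    if Provenance.OBSERVED in kinds:
        return DecisionUse.TEST
    return DecisionUse.EXPLORE


# Hypothetical example: a measured conversion rate plus a simulated CPC.
inputs = [
    LabeledMetric("conversion_rate", 0.04, Provenance.OBSERVED),
    LabeledMetric("cpc", 2.40, Provenance.SYNTHETIC),
]
print(allowed_use(inputs).value)  # prints "test"
```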

The first time synthetic data “proved” I would win… I didn’t

Quick aside: the first time synthetic data “proved” my campaign would beat the control, the real world politely disagreed. The model assumed stable intent and clean attribution. Reality gave me seasonality, creative fatigue, and a tracking gap. Now I stress-test assumptions before I trust any simulated lift.

When synthetic results look too perfect, I assume my assumptions are too fragile.

I pair synthetic modeling with targeting audits

Synthetic data can also hide bias. If the simulated audience or conversion behavior reflects skewed inputs, the model may amplify unfair targeting. So I pair scenario modeling with targeting audits of my marketing data: I check who gets included, who gets excluded, and whether performance “wins” come from narrow segments that don’t match my brand goals.

4) The $200M AI mention tracking trap (and what I measure instead)

I’ve watched teams obsess over AI mention tracking dashboards that can’t even agree on what “a mention” is. One tool counts a brand name in an AI answer. Another counts a link. A third counts “implied” references. Then leadership asks why the numbers don’t match, and suddenly the marketing AI strategy turns into a debate about definitions instead of outcomes.

In The Complete Marketing AI Strategy Guide, the big lesson I keep coming back to is simple: measurement should match the decision. AI mention tracking can be useful, but I treat it as a directional signal, not a KPI worth betting a quarter on. If mentions rise after we improve content quality and distribution, great. If they fall, it’s a prompt to investigate. But I don’t tie budgets, headcount, or performance reviews to a metric that shifts based on model updates and vendor logic.

What I measure instead: operational ROI metrics

Instead, I build a measurement stack around operational metrics that show real ROI in marketing operations:

- Cycle time: How long from brief to publish (or brief to launch)?

- Rework rate: How many rounds of edits, rewrites, or compliance fixes?

- Creative throughput: How many usable assets per week per team?

- Specialist hours saved: Time returned to SEO, design, analytics, and PMs.

These are boring on purpose. They’re also hard to fake. When AI actually helps, these numbers move in a way finance understands.
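
Two of these metrics, cycle time and rework rate, fall out of a simple log of briefs and publish dates. This is a rough sketch of the calculation, assuming one record per asset. The `Asset` fields, the "more than one review round counts as rework" cutoff, and the sample dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass(frozen=True)
class Asset:
    brief_date: date      # when the brief was approved
    publish_date: date    # when the asset went live
    review_rounds: int    # number of edit or compliance rounds


def cycle_time_days(a: Asset) -> int:
    """Calendar days from brief to publish."""
    return (a.publish_date - a.brief_date).days


def median_cycle_time(assets: list[Asset]) -> float:
    """Median brief-to-publish time; the median resists one-off outliers."""
    return median(cycle_time_days(a) for a in assets)


def rework_rate(assets: list[Asset], max_rounds: int = 1) -> float:
    """Share of assets that needed more than `max_rounds` review rounds."""
    if not assets:
        raise ValueError("need at least one asset")
    reworked = sum(1 for a in assets if a.review_rounds > max_rounds)
    return reworked / len(assets)


# Hypothetical log of three assets briefed on the same day.
log = [
    Asset(date(2026, 1, 5), date(2026, 1, 10), review_rounds=1),
    Asset(date(2026, 1, 5), date(2026, 1, 14), review_rounds=3),
    Asset(date(2026, 1, 5), date(2026, 1, 17), review_rounds=2),
]
print(median_cycle_time(log))      # median of 5, 9, and 12 days
print(f"{rework_rate(log):.0%}")   # 2 of 3 assets exceeded one round
```

I would run this on the same log before and after an AI workflow change, so the comparison is against my own baseline rather than a vendor benchmark.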

My “ROI at scale” check

I also run what I call an ROI at scale check: can the workflow still work when I triple volume or add regions? A pilot that looks amazing at 20 pieces a month can break at 200 when you add localization, legal review, and multiple product lines.

| Test | Question I ask |
|---|---|
| Volume | Does quality hold when output triples? |
| Regions | Can we support new languages and approvals without chaos? |
| People | Do we need more experts, or did we truly save hours? |

Small confession: I once green-lit a shiny monitoring tool because the sales deck had pretty graphs. Never again.

Now, I still track AI mentions, but I keep it in the “signal” lane. My core marketing AI measurement is about speed, waste, and capacity—because that’s where strategy becomes repeatable.

5) Agentic AI trends and AI-native decision-making (the part that scares me a little)

In The Complete Marketing AI Strategy Guide, the big shift isn’t just “use AI for content.” It’s agentic AI: systems that can plan steps, run tasks, and move work forward with less prompting. That’s exciting—and a little scary—because marketing is full of small decisions that add up fast.

Where I use agents (and where I don’t)

I experiment with AI agents for repetitive marketing tasks that are easy to review:

- Drafting a campaign brief from notes

- Generating ad and email variants for testing

- Running QA checks (broken links, missing UTM tags, brand terms)

But I keep approvals human. Anything that changes spend, claims, pricing, or brand voice needs a person to sign off. My rule: agents can prepare work; humans publish it.
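
The missing-UTM check is a good example of agent-friendly QA, because the output is a short list a human can review in seconds. Here is a minimal sketch using Python's standard `urllib.parse`. The required parameter set (`utm_source`, `utm_medium`, `utm_campaign`) is my assumption of a typical policy, and the URLs are placeholders.

```python
from urllib.parse import parse_qs, urlparse

# Assumed policy: every campaign link must carry these three parameters.
REQUIRED_UTM = ("utm_source", "utm_medium", "utm_campaign")


def missing_utm_params(url: str) -> list[str]:
    """Return the required UTM parameters that are absent or empty in `url`.

    parse_qs drops parameters with blank values by default, so
    "utm_source=" is reported as missing, which is what a QA check wants.
    """
    query = parse_qs(urlparse(url).query)
    return [param for param in REQUIRED_UTM if param not in query]


links = [
    "https://example.com/offer?utm_source=newsletter&utm_medium=email&utm_campaign=spring",
    "https://example.com/offer?utm_source=newsletter&utm_medium=email",
    "https://example.com/offer?utm_source=&utm_medium=email&utm_campaign=spring",
]
for link in links:
    gaps = missing_utm_params(link)
    if gaps:
        print(f"{link} is missing: {', '.join(gaps)}")
```

The check only flags problems. Fixing the link and approving the send stays with a person.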

Designing AI-native decision flows

I’ve started mapping decisions into two buckets: what the system can recommend vs. what it can execute. This helps me avoid “silent automation” that surprises the team.

| Decision type | Good for AI to do | Keep human |
|---|---|---|
| Optimization | Suggest budget shifts based on CPA/ROAS | Approve budget moves above a set threshold |
| Messaging | Propose variants aligned to a brief | Approve claims, tone, and sensitive topics |
| Targeting | Recommend audience segments to test | Approve exclusions and compliance rules |
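
The Optimization row can be sketched as a routing rule: the system proposes a budget shift, and the size of the shift decides whether it waits for a person. This is only an illustration of the recommend-versus-execute split. The threshold value, the `BudgetShift` fields, and the two route labels are hypothetical, and a stricter team could route every shift to a human.

```python
from dataclasses import dataclass

# Hypothetical limit: shifts above this amount always wait for sign-off.
APPROVAL_THRESHOLD = 500.0


@dataclass(frozen=True)
class BudgetShift:
    from_channel: str
    to_channel: str
    amount: float   # currency units moved between channels
    reason: str     # the CPA/ROAS rationale, kept for the audit log


def route(shift: BudgetShift, threshold: float = APPROVAL_THRESHOLD) -> str:
    """Decide whether a recommended shift needs a human before it runs."""
    if shift.amount <= 0:
        raise ValueError("shift amount must be positive")
    if shift.amount > threshold:
        return "needs_human_approval"
    return "within_delegated_limit"


proposal = BudgetShift("search", "retail_media", 1200.0, "search CPA up week over week")
print(route(proposal))  # prints "needs_human_approval"
```

Keeping the `reason` on the record is the part that prevents silent automation: even a shift inside the delegated limit leaves a trail the team can read later.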

Why I prefer vertical tools over generic bots

“Marketing” is too messy for one-size-fits-all agents. I look for verticalized solutions—tools built for specific workflows like paid search QA, lifecycle email ops, or product feed cleanup—because they come with guardrails, logs, and clearer failure modes.

Preparing for the AI shopping assistant era

If an AI shopping assistant is summarizing my product page, I want it to summarize the right things. I write pages with clean structure: clear specs, plain-language benefits, pricing context, shipping/returns, and comparisons. I keep persuasion for humans, but I make facts easy for agents to extract.

Wild-card: if my brand hired an agent like an intern

“Day one training is not prompts—it’s boundaries.”

- Our brand rules: what we never claim, and words we don’t use

- Our customer truth: top pains, top objections, top use cases

- Our escalation policy: when to stop and ask a human

6) My “unsexy” 30-day Marketing AI strategy rollout plan

If you’re reading “2026 Marketing AI Trends” and hoping for a magic tool that fixes everything, I get it. But the approach I use (and the one I keep coming back to from The Complete Marketing AI Strategy Guide) is simple: start small, make it measurable, and protect the brand. Here’s my practical 30-day Marketing AI strategy rollout plan—the kind that feels boring in the moment, but pays off fast.

Week 1: Inventory AI tool adoption (what we use, what we pay, what’s duplicative)

In week one, I do a full inventory of every AI tool touching marketing: writing assistants, design tools, SEO platforms, chatbots, analytics add-ons, and “free trials” that quietly became subscriptions. I list who uses what, what it costs, and what problem it claims to solve. Then I look for overlap. In most teams, we’re paying for three tools that do the same thing, and nobody owns the decision. This week is about clarity, not judgment.

Week 2: Pick two workflows for AI marketing operations (not everything at once)

Week two is where I get strict: I choose only two workflows. My default picks are AI-assisted creative diagnostics (to spot what’s working in ads and landing pages) and reporting summaries (to turn performance data into clear weekly narratives). These are “operations” wins: less debate, faster cycles, fewer hours lost. I avoid big, risky changes like full-funnel automation until the team trusts the process.

Week 3: Install measurement (operational metrics, ROI, and guardrails)

In week three, I set measurement rules so AI doesn’t become a productivity myth. I track operational metrics like time-to-first-draft, revision count, and campaign turnaround time. I also set ROI expectations and marketing measurement guardrails: what data can be used, what must be anonymized, and what needs human review before it ships.

Week 4: Publish a brand authenticity AI playbook + run one pilot campaign end-to-end

Week four is about trust. I publish a simple brand authenticity AI playbook: tone rules, review steps, and disclosure guidance. Then I run one pilot campaign end-to-end using the new workflows and measurement. One campaign is enough to learn what breaks, what improves, and what needs policy.

Tiny tangent: I always schedule a boring meeting called Data silos resolution. It’s not fun, but future me always thanks present me when the data finally connects and the AI outputs stop guessing.

TL;DR: For 2026 marketing trends, I’m treating AI as invisible infrastructure: streamline AI marketing operations, use synthetic data sources carefully, measure ROI by cycle time and rework, optimize for AI shopping assistants, and protect trust in AI with real brand authenticity.