Last spring I watched a perfectly reasonable forecasting project turn into a three-week saga because one PDF spec (yes, a PDF) kept breaking our parser. The turning point wasn’t hiring another hero engineer—it was admitting our workflow needed help: smaller efficient models for the boring bits, retrieval augmented generation for the messy context, and eventually agentic AI systems that could babysit the pipeline while we slept. This post is my attempt to capture the “real results” side of that shift—what actually changed in Data Science Ops, what felt weird, and what I’d do differently if I had to repeat it.

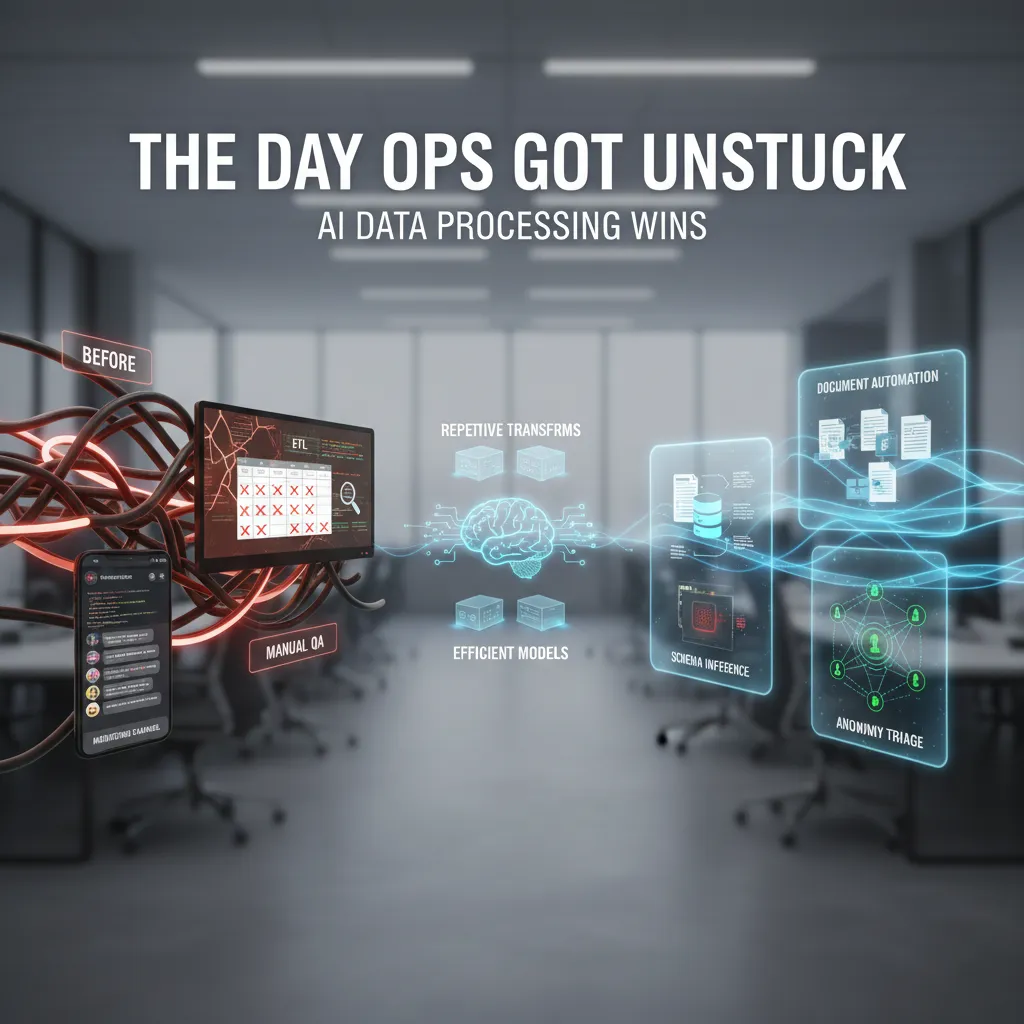

1) The Day Ops Got Unstuck: AI Data Processing Wins

My “before” workflow: brittle ETL, manual QA, and Slack-as-monitoring

Before we brought AI into Data Ops, my workflow was a patchwork of brittle ETL jobs, manual QA checks, and a Slack channel that doubled as our “monitoring tool” (it counts, right?). If a pipeline failed, someone usually noticed because a dashboard looked weird, a stakeholder complained, or a teammate pasted an error log into Slack at 7:12 a.m. The worst part wasn’t the failure—it was the rework: reruns, hotfixes, and long threads trying to figure out what changed.

Where AI data processing actually helped

From the real results I saw in “How AI Transformed Data Science Operations,” the biggest wins came from using AI where humans were doing repetitive interpretation work.

- Document processing automation: AI helped extract fields from messy PDFs, emails, and forms. Instead of writing one-off parsers, we used models to pull structured data and flag low-confidence outputs for review.

- Schema inference: When new data arrived with surprise columns or shifting types, AI-assisted schema inference suggested mappings and transformations. That reduced the “why did this column become a string?” firefighting.

- Anomaly triage: We stopped treating every alert like a five-alarm fire. AI helped group anomalies, suggest likely causes (late upstream feed, null spikes, join explosion), and route issues faster.
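
To make the “flag low-confidence outputs for review” idea concrete, here is a minimal sketch. It assumes the extraction model reports a confidence score between 0 and 1 for each field. `ExtractedField`, `split_for_review`, the sample values, and the 0.85 threshold are all illustrative, not taken from a specific library or from our actual pipeline.

```python
from dataclasses import dataclass

# Hypothetical cut-off; tune it against your own review data.
REVIEW_THRESHOLD = 0.85


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float  # assumed: model-reported score in [0, 1]


def split_for_review(fields, threshold=REVIEW_THRESHOLD):
    """Return (accepted, needs_review) based on each field's confidence."""
    accepted = [f for f in fields if f.confidence >= threshold]
    needs_review = [f for f in fields if f.confidence < threshold]
    return accepted, needs_review


# Made-up extraction output for one document.
fields = [
    ExtractedField("invoice_id", "INV-1042", 0.98),
    ExtractedField("total", "1,280.00", 0.91),
    ExtractedField("due_date", "03/04/25", 0.62),  # ambiguous date format
]

accepted, needs_review = split_for_review(fields)
print([f.name for f in accepted])      # ['invoice_id', 'total']
print([f.name for f in needs_review])  # ['due_date']
```

The point of the split is that humans only look at the second list, which is where the repetitive interpretation work shrinks.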

A small but real shift: smaller models for repetitive transforms

One change that mattered more than I expected: I stopped burning GPU cycles on tasks that didn’t need them. For repetitive transforms (standardizing categories, cleaning text, simple entity matching), I leaned on smaller, efficient models or even rules plus lightweight ML. The result was faster runs, lower cost, and fewer “we can’t deploy this because it’s too expensive” debates.

Wild-card aside: I started naming pipelines like houseplants

I also started naming pipelines like houseplants: Pothos, SnakePlant, Fern. When one dies, you remember it longer. Weirdly, it made incident reviews more human and easier to recall.

What I’d measure first (not vibes)

If you’re adopting AI data processing in 2026, I’d track:

- Cycle time: from data arrival to usable tables

- Rework rate: reruns, manual fixes, and backfills

- Incident frequency: how often pipelines break or alerts escalate
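
If it helps, the three metrics above can be computed from a simple run log. This is a rough sketch under assumptions: the `PipelineRun` record and its fields are hypothetical, and the sample runs are made up for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class PipelineRun:
    arrived: datetime   # when the raw data landed
    ready: datetime     # when usable tables were available
    was_rework: bool    # rerun, manual fix, or backfill
    incident: bool      # run broke or an alert escalated


def ops_metrics(runs):
    """Summarize cycle time, rework rate, and incident count for a set of runs."""
    cycle_minutes = [(r.ready - r.arrived).total_seconds() / 60 for r in runs]
    return {
        "median_cycle_minutes": median(cycle_minutes),
        "rework_rate": sum(r.was_rework for r in runs) / len(runs),
        "incidents": sum(r.incident for r in runs),
    }


# Made-up week of runs.
runs = [
    PipelineRun(datetime(2026, 1, 5, 6, 0), datetime(2026, 1, 5, 6, 40), False, False),
    PipelineRun(datetime(2026, 1, 6, 6, 0), datetime(2026, 1, 6, 8, 0), True, True),
    PipelineRun(datetime(2026, 1, 7, 6, 0), datetime(2026, 1, 7, 6, 50), False, False),
    PipelineRun(datetime(2026, 1, 8, 6, 0), datetime(2026, 1, 8, 7, 0), True, False),
]

metrics = ops_metrics(runs)
print(metrics)
```

Tracking the same three numbers before and after a change is what keeps the comparison honest.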

2) From One Big Brain to a Team: Agentic AI Systems in Practice

In 2026, the biggest shift I made in Data Ops was simple: I stopped asking for “a model” and started designing agentic AI systems—plural. The source I leaned on, “How AI Transformed Data Science Operations: Real Results”, made the point clear: the wins came from operational change, not just smarter algorithms. In practice, one “big brain” model was never enough to handle monitoring, testing, documentation, and incident response at the same time.

Agent collaboration: small roles, big reliability

Instead of one agent doing everything, I split responsibilities like a real team. Each agent had a narrow job and clear boundaries:

- Drift Watcher: monitors feature distributions, label delay, and alert thresholds.

- Test Writer: generates and updates data quality checks and model validation tests.

- Rollback Planner: drafts a rollback plan, including what to revert and how to verify recovery.

This setup reduced noise and helped me trace decisions. When something broke, I could see which agent made which call, and why.

Context engineering that made agents less chaotic

Yes, agents can be chaotic. What helped was context engineering—not more prompts, but better structure. I used:

- Fixed system rules (non-negotiables like “never deploy without passing tests”).

- Short, scoped memory (only the last N runs, not months of messy history).

- Tool contracts (what each tool does, inputs/outputs, and failure behavior).

- Shared glossary (one definition of “drift,” “incident,” “severity,” etc.).

I also forced agents to cite the exact metric or log line they used before making a recommendation.
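
Here is one way the structure above could look in code. Treat it as a sketch: `ToolContract`, `AgentContext`, `Recommendation`, the window of five runs, and the glossary wording are all hypothetical names and placeholder values.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ToolContract:
    name: str
    inputs: tuple
    outputs: tuple
    on_failure: str  # documented failure behavior


@dataclass
class AgentContext:
    system_rules: tuple   # fixed, non-negotiable rules
    glossary: dict        # one shared definition per term
    tools: dict           # tool name -> ToolContract
    # Short, scoped memory: only the last 5 runs are kept (assumed N).
    recent_runs: deque = field(default_factory=lambda: deque(maxlen=5))

    def remember(self, run_summary):
        self.recent_runs.append(run_summary)


@dataclass
class Recommendation:
    action: str
    evidence: str  # the exact metric or log line the agent relied on


def is_acceptable(rec):
    """Reject any recommendation that does not cite its evidence."""
    return bool(rec.evidence.strip())


ctx = AgentContext(
    system_rules=("never deploy without passing tests",),
    glossary={"drift": "placeholder definition agreed by the team"},
    tools={"run_tests": ToolContract("run_tests", ("suite",), ("passed",), "returns passed=False")},
)
for i in range(1, 8):
    ctx.remember(f"run-{i}")

print(list(ctx.recent_runs))  # ['run-3', 'run-4', 'run-5', 'run-6', 'run-7']
```

The bounded `deque` is what keeps months of messy history out of the context without anyone having to remember to prune it.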

A failure mode: the overconfident Friday deploy

My nightmare scenario was an agent that “felt sure” and deployed on Friday afternoon. We prevented that with a hard rule:

if day == "Friday" and time > "12:00": block_deploy()

But the real fix was layered: the Drift Watcher could recommend, the Test Writer could validate, and only after both passed could the Rollback Planner generate a change ticket for review.
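
A runnable sketch of that layered gate might look like the following. The function names are hypothetical, and the two boolean inputs stand in for whatever the Drift Watcher and Test Writer actually report.

```python
from datetime import datetime, time

FRIDAY = 4  # datetime.weekday(): Monday is 0, Friday is 4


def in_freeze_window(now):
    """True on Fridays after 12:00, mirroring the hard rule above."""
    return now.weekday() == FRIDAY and now.time() > time(12, 0)


def may_open_change_ticket(now, drift_check_ok, tests_passed):
    """Layered gate: the freeze rule first, then both agent checks must pass."""
    if in_freeze_window(now):
        return False
    return drift_check_ok and tests_passed


friday_pm = datetime(2026, 1, 9, 15, 30)   # a Friday afternoon
monday_am = datetime(2026, 1, 5, 10, 0)    # a Monday morning

print(may_open_change_ticket(friday_pm, True, True))   # False
print(may_open_change_ticket(monday_am, True, True))   # True
print(may_open_change_ticket(monday_am, True, False))  # False
```

Note that passing the gate only produces a ticket for review; nothing here deploys on its own.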

Why autonomy is earned in enterprise Data Ops

In enterprise settings, autonomy is earned through guardrails, evals, and human sign-off. Agents can draft, test, and simulate, but production changes require approvals, audit logs, and repeatable evaluations. That’s how agentic systems stay fast without becoming risky.

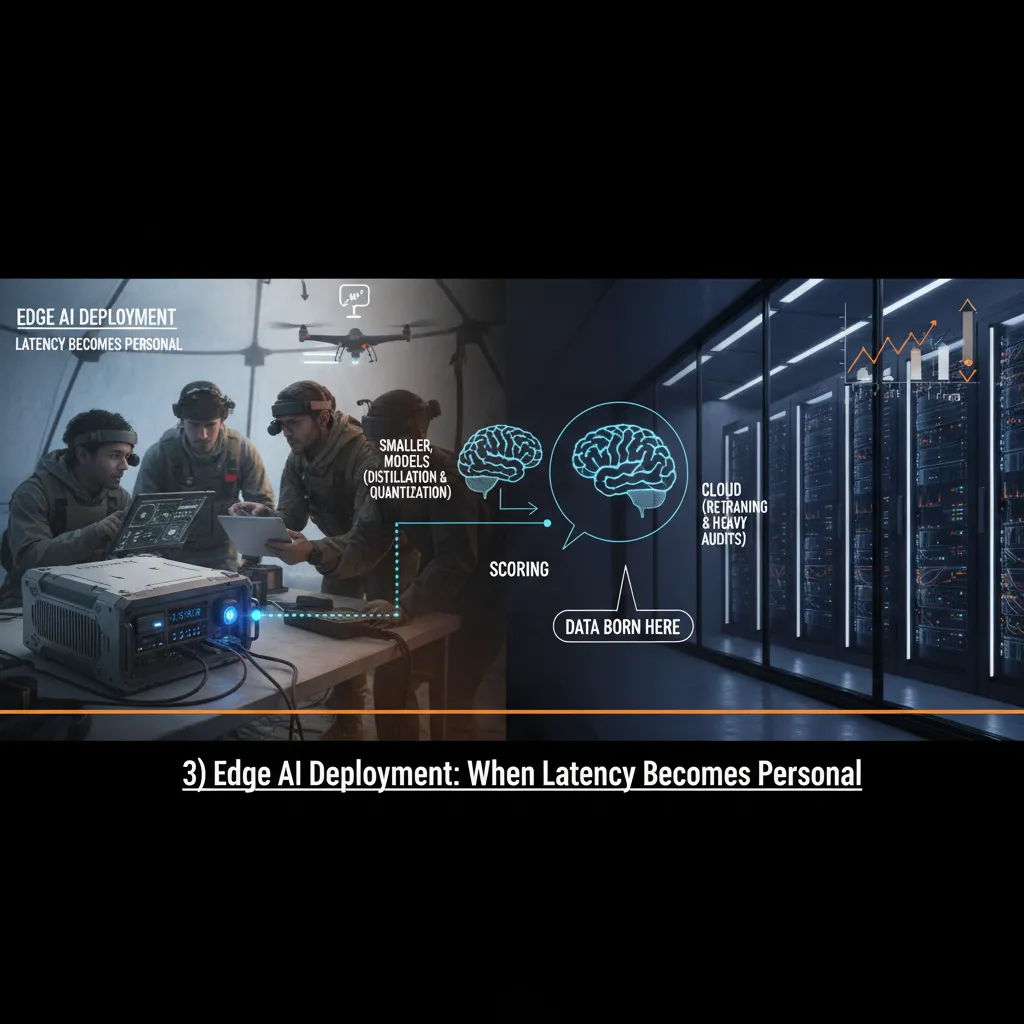

3) Edge AI Deployment: When Latency Becomes Personal

A quick confession: I used to ignore edge AI deployment. I treated it like a “nice to have” for later. Then a field team asked me a simple question: “Why do predictions arrive after the decision was already made?” That one line made latency feel personal. In Data Ops, a model that is accurate but late is still wrong in practice.

What changed: distillation and quantization made edge realistic

What shifted for me in 2026 is how practical edge models have become. With distillation, I can train a smaller “student” model to copy the behavior of a larger “teacher” model. With quantization, I can shrink model size and speed up inference by using lower-precision numbers. The result is simple: smaller, efficient models that run closer to where data is born—on devices, gateways, or local servers—so the prediction shows up while the moment still matters.
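
To show what “lower-precision numbers” means in practice, here is a toy version of symmetric int8 quantization in plain Python. Real toolchains do this per layer with calibration data, so this is only an illustration of the arithmetic; the function names and sample weights are made up.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: floats -> int8 values plus one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map int8 values back to approximate floats."""
    return [q * scale for q in quantized]


weights = [0.42, -1.27, 0.05, 0.9]  # made-up float weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)  # [42, -127, 5, 90]
print(max_error <= scale / 2 + 1e-12)  # True: rounding error is at most half a step
```

Each weight now fits in one byte instead of four, and the price is a rounding error bounded by half the scale.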

An edge-to-cloud split that works in real Data Ops

From the “How AI Transformed Data Science Operations: Real Results” playbook, the pattern I keep seeing is a clean split of responsibilities:

- Edge: fast scoring, basic rules, and immediate actions (low latency, limited compute).

- Cloud: retraining, feature store updates, drift analysis, and heavy audits (more compute, better governance).

I also like to keep a small “fallback” logic on the edge, so if the model fails or the network drops, the system still behaves safely.
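
A minimal sketch of that fallback idea, assuming the model is just a callable that may raise. The temperature threshold, field names, and function names are hypothetical.

```python
def fallback_rule(reading):
    """Conservative rule used when the model is unavailable (made-up threshold)."""
    return "alert" if reading["temperature_c"] > 80 else "ok"


def score(reading, model):
    """Try the model first; on any failure, fall back to the simple rule."""
    try:
        return model(reading), "model"
    except Exception:
        return fallback_rule(reading), "fallback"


def healthy_model(reading):
    return "ok"


def broken_model(reading):
    raise RuntimeError("model file missing")


reading = {"temperature_c": 91}
print(score(reading, healthy_model))  # ('ok', 'model')
print(score(reading, broken_model))   # ('alert', 'fallback')
```

Returning the source of the decision alongside the decision makes it easy to count how often the edge device is running on the fallback.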

Trade-offs I didn’t expect

Edge AI is not just “cloud AI, but smaller.” Debugging is weirder. When something goes wrong, I can’t just open a notebook and replay everything. I need strong telemetry: model version, input stats, latency, battery, temperature, and even network quality. And yes—devices have moods. A model can behave differently when a camera lens is dirty, a sensor drifts, or a device is throttling due to heat.

“If you can’t observe it, you can’t operate it.”

Mini-analogy: the food truck model

Moving AI to the edge is like moving your kitchen into a food truck—less space, faster service, and stricter prep. You pre-pack ingredients (optimized models), keep the menu tight (limited features), and rely on great checklists (monitoring) to serve customers on time.

4) Domain Specific Models + RAG: Accuracy Without the Drama

In our Data Ops work, I learned fast that general AI models can feel smart but slippery. They write clean answers, but when the question includes policy language, finance-y definitions, or internal terms (“active customer,” “booked revenue,” “exception handling”), the model may guess. That’s risky in operations, where one wrong definition can trigger a bad report, a failed audit check, or a broken downstream pipeline.

Why general models struggled in ops reality

General models are trained to be helpful across the whole internet. In practice, that means they often “average” meanings. In Data Ops, we don’t need average—we need our meaning. From the source material on how AI transformed data science operations, the biggest wins came when we stopped treating the model like a universal expert and started treating it like a component in a controlled system.

Domain-specific models: smaller, clearer, easier to test

Domain-specific models changed the game for us. They’re usually smaller, tuned on our vocabulary, and easier to evaluate because the scope is narrow. Instead of asking a general model to understand every accounting rule and every data policy, we tune a model to our data contracts, naming rules, and business definitions. That makes accuracy measurable, not vibes-based.

- Less drift: fewer “creative” answers when the question is strict.

- Better evaluation: we can build a tight test set from real tickets and run it weekly.

- Lower cost: smaller models can be faster and cheaper in production.
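
A tight test set does not need much machinery. The sketch below assumes the model can be called as a plain function and that answers can be compared exactly, which is a simplification. The questions, expected answers, and the stub model are invented for illustration.

```python
# Hypothetical test cases distilled from past tickets: (question, expected answer).
CASES = [
    ("Which table holds booked revenue?", "finance.booked_revenue"),
    ("Which column identifies a customer?", "customer_id"),
    ("Which job refreshes the feature store?", "fs_refresh_daily"),
    ("Which severity pages the on-call?", "sev1"),
]


def evaluate(model, cases):
    """Return (accuracy, failures) using exact-match scoring."""
    failures = []
    for question, expected in cases:
        got = model(question)
        if got != expected:
            failures.append((question, expected, got))
    return 1 - len(failures) / len(cases), failures


def stub_model(question):
    """Stand-in for the tuned model; gets one answer wrong on purpose."""
    answers = dict(CASES)
    answers["Which severity pages the on-call?"] = "sev2"
    return answers[question]


accuracy, failures = evaluate(stub_model, CASES)
print(accuracy)       # 0.75
print(len(failures))  # 1
```

Running the same cases on a schedule turns “it feels accurate” into a number you can watch move.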

RAG: the grown-up way to answer with receipts

Retrieval augmented generation (RAG) is how we got answers that come with proof. The model doesn’t just respond—it pulls the relevant policy page, metric definition, or runbook section and cites it. In Data Ops, that “show your work” behavior is everything.

“If the answer can’t point to the source, it’s not an ops answer—it’s a guess.”
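
Here is a deliberately tiny sketch of the “answer with receipts” behavior. It uses word overlap instead of embeddings and returns the retrieved passage directly instead of passing it to a generator, so it shows the shape of the flow only. The document paths and their contents are invented.

```python
import re

# Invented knowledge base: path -> text.
DOCS = {
    "metrics/active_customer.md": "An active customer is an account with at least one paid order in the last 90 days.",
    "runbooks/late_feed.md": "If the upstream feed is late, pause downstream jobs and page the data on-call.",
}


def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, docs=DOCS, min_overlap=2):
    """Return the best-matching doc id, or None if nothing overlaps enough."""
    q_tokens = tokenize(question)
    best_id, best_score = None, 0
    for doc_id, text in docs.items():
        overlap = len(q_tokens & tokenize(text))
        if overlap > best_score:
            best_id, best_score = doc_id, overlap
    return best_id if best_score >= min_overlap else None


def answer_with_receipt(question):
    """Answer only when a source can be cited; otherwise decline."""
    doc_id = retrieve(question)
    if doc_id is None:
        return {"answer": None, "source": None}
    return {"answer": DOCS[doc_id], "source": doc_id}


print(answer_with_receipt("What counts as an active customer?")["source"])
# metrics/active_customer.md
print(answer_with_receipt("Who won the match?"))
# {'answer': None, 'source': None}
```

The important branch is the second one: with no source to point at, the function declines instead of guessing.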

Document processing automation: split, route, stitch

We also automated document processing by breaking files into parts, routing each part to the right model, and stitching results back together. For example: tables go to an extraction model, policy text goes to a RAG QA flow, and exceptions go to a classifier that opens the right ticket.

- Chunk documents by section and layout

- Route to specialized models

- Recombine outputs with citations and checks
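
The split, route, stitch flow can be sketched as a small dispatcher. The handlers below are stubs standing in for real models, and the chunk kinds and names are hypothetical.

```python
def extract_table(text):
    """Stub for a table extraction model: counts rows."""
    return {"rows": len(text.splitlines())}


def answer_policy(text):
    """Stub for a RAG QA flow over policy text."""
    return {"summary": text[:40]}


def classify_exception(text):
    """Stub for a classifier that picks a ticket queue."""
    return {"queue": "data-quality" if "null" in text.lower() else "general"}


HANDLERS = {
    "table": extract_table,
    "policy": answer_policy,
    "exception": classify_exception,
}


def process(chunks):
    """Route each (section, kind, text) chunk and stitch results back in order."""
    results = []
    for section, kind, text in chunks:
        handler = HANDLERS.get(kind)
        if handler is None:
            # Unknown layout: keep the section, but send it to a human.
            results.append({"section": section, "status": "needs_review"})
            continue
        results.append({"section": section, "status": "ok", "output": handler(text)})
    return results


chunks = [
    ("2.1", "table", "a,b\n1,2\n3,4"),
    ("3.0", "policy", "Refunds require approval from finance."),
    ("4.2", "exception", "Null spike in orders feed"),
    ("5.0", "diagram", "<binary>"),
]
for item in process(chunks):
    print(item)
```

Keeping the section id on every result is what makes the final document citeable.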

A tiny rant about permissions

If your data permissions are messy, your AI will be messy, no exceptions. RAG can only be trusted when access rules are clean, logged, and consistent. Otherwise, the system either leaks data or refuses to answer at the worst time.

5) AI Infrastructure Efficiency: The Unsexy Part That Made Everything Work

I used to think scaling meant “more GPUs.” In practice, the biggest wins for our agentic systems in Data Ops came from infrastructure efficiency. It felt less like buying bigger machines and more like gardening: pruning, routing, and timing. When our agents started running more checks, retries, and data validations, the hidden costs showed up fast: latency spikes, noisy queues, and surprise bills.

Smaller Models by Default, Bigger Models on Demand

We stopped treating every task like it needed the “best” model. Most Data Ops work is repetitive: schema checks, anomaly flags, metadata tagging, and simple explanations. So we made small, efficient models the default and only called larger models when the job truly needed deeper reasoning.

“The goal wasn’t to make the smartest agent run every time. It was to make the right agent run at the right cost.”

We used cooperative model routing: a lightweight router model classified the request, then sent it to the smallest model that could meet the quality bar. If confidence was low, it escalated to a larger model.
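
As a sketch, the routing and escalation logic could look like this. The two tiers, the keyword-based `classify` stand-in for the router model, the stub models, and the 0.8 confidence bar are all assumptions made for the example.

```python
TIERS = ["small", "large"]  # ordered from cheapest to most capable


def classify(request):
    """Stand-in for the lightweight router: choose a starting tier."""
    return "large" if "root cause" in request.lower() else "small"


def small_model(request):
    """Stub: confident only on requests it recognizes."""
    if "schema" in request.lower():
        return "schema check passed", 0.95
    return "not sure", 0.40


def large_model(request):
    """Stub: slower, more expensive, more confident."""
    return "detailed analysis", 0.90


MODELS = {"small": small_model, "large": large_model}


def route(request, min_confidence=0.8):
    """Start at the router's tier and escalate while confidence is too low."""
    start = TIERS.index(classify(request))
    for tier in TIERS[start:]:
        answer, confidence = MODELS[tier](request)
        if confidence >= min_confidence:
            break
    return tier, answer


print(route("Run the schema check"))                       # ('small', 'schema check passed')
print(route("Summarize last night's null spike"))          # ('large', 'detailed analysis')
print(route("Find the root cause of the join explosion"))  # ('large', 'detailed analysis')
```

If even the largest tier stays below the bar, this version still returns its answer; in a real setup that case probably deserves a human.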

Scaling Tactics That Actually Moved the Needle

Instead of scaling by brute force, we focused on predictable throughput. These tactics became our baseline:

- Caching for repeated prompts (runbooks, policy text, common SQL patterns).

- Batching small requests together to reduce overhead per call.

- Mixed precision inference to speed up compute, usually with negligible accuracy loss.

- Smart scheduling so background agent tasks ran off-peak, and urgent tasks jumped the line.
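
Two of these tactics are easy to show with the standard library. The sketch below uses exact-match caching via `functools.lru_cache` and a simple batching helper; `cached_completion` is a stub that stands in for a real model call, and the call counter exists only to make the effect visible.

```python
from functools import lru_cache

CALLS = {"count": 0}  # counts how often the "model" is actually invoked


@lru_cache(maxsize=1024)
def cached_completion(prompt):
    """Stub model call; identical prompts are served from the cache."""
    CALLS["count"] += 1
    return f"response to: {prompt}"


def batched(items, size):
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


cached_completion("Explain the late-feed runbook")
cached_completion("Explain the late-feed runbook")  # cache hit, no new call
print(CALLS["count"])                     # 1
print(list(batched([1, 2, 3, 4, 5], 2)))  # [[1, 2], [3, 4], [5]]
```

Exact-match caching only helps when prompts repeat verbatim, which is why it suits runbooks and policy text better than free-form questions.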

One tiny change saved us hours: we stopped letting agents “chat” in loops. We added hard limits and clearer stop conditions:

max_steps=8; stop_on="validated_output"
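
In code, those two settings amount to a bounded loop with an explicit exit. This is a sketch with hypothetical names: `step_fn` stands in for one agent turn, and the two step functions below are toy examples.

```python
def run_agent(step_fn, max_steps=8, stop_on="validated_output"):
    """Run step_fn until it reports stop_on, or give up after max_steps."""
    state = {"status": "started"}
    for step in range(1, max_steps + 1):
        state = step_fn(state)
        if state["status"] == stop_on:
            return state, step
    # Hard limit reached: mark the state so callers can escalate.
    return {**state, "status": "max_steps_reached"}, max_steps


def converging_step(state):
    """Toy agent turn that validates its output on the third attempt."""
    attempts = state.get("attempts", 0) + 1
    status = "validated_output" if attempts >= 3 else "drafting"
    return {"attempts": attempts, "status": status}


def looping_step(state):
    """Toy agent turn that never finishes on its own."""
    return {"attempts": state.get("attempts", 0) + 1, "status": "drafting"}


print(run_agent(converging_step))  # ({'attempts': 3, 'status': 'validated_output'}, 3)
print(run_agent(looping_step))     # ({'attempts': 8, 'status': 'max_steps_reached'}, 8)
```

The second case is the one that used to cost hours: without the cap, that loop simply keeps going.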

Open Source Choices That Reduced Lock-In (and My Stress)

We leaned on open source tooling for serving, evaluation, and observability. That meant fewer one-way doors. If a vendor changed pricing or features, we could swap components without rewriting the whole stack. It also made audits easier because we could inspect how requests flowed through the system.

A Practical Budget Story: Spend for Reliability

We didn’t cut costs to zero. We traded spend for reliability: more monitoring, better fallbacks, and capacity reserved for peak pipelines. The result was fewer failed runs and less firefighting. In Data Ops, that stability is the real ROI—even if it’s the least glamorous part of AI trends in 2026.

6) The Weird Future Bit: AI Research Acceleration Meets Healthcare Ops

I’m going to take a tangent that isn’t really a tangent: the same Data Ops patterns I see in modern data science teams are showing up in labs and clinics. In the source story on how AI transformed data science operations, the big wins came from tightening the loop between ideas, data, experiments, and deployment. That loop is basically the scientific method with better tooling. When I look at healthcare operations and AI research acceleration, I see the same need for clean inputs, traceable decisions, and fast feedback.

AI research acceleration is becoming an ops problem

In 2026, “agentic systems in Data Ops” won’t just automate dashboards. They’ll draft hypothesis statements, propose experiment plans, and keep a running lab notebook. I’m already seeing early versions of an AI lab assistant workflow: it reads prior results, suggests the next test, checks whether the data is good enough, and flags missing controls. The weird part is how normal this feels once you treat research like production: versioned datasets, reproducible runs, and approvals before anything touches a shared environment.

Healthcare AI is moving past diagnostics

Diagnostics got the headlines, but healthcare AI applications are shifting into symptom triage and treatment planning. That’s where operations matter most. A triage agent has to ask the right follow-up questions, route a case to the right clinician, and document why it made a recommendation. A treatment planning agent has to align with guidelines, patient history, and real-world constraints like drug availability. If Data Ops taught me anything, it’s that the model is only one piece; the workflow, monitoring, and audit trail are what make it safe enough to use.

Hybrid quantum computing: maybe soon for molecular modeling

I’m cautiously curious about hybrid quantum computing as a helper for molecular modeling. I don’t think it replaces classical systems in the near term, but I can imagine a “quantum-assisted” step inside a larger pipeline: generate candidate molecules, score them, and feed results back into the experiment planner. If it works, it will still need the same ops backbone: data lineage, reproducibility, and clear handoffs between tools.

Here’s my closing thought experiment: what if your data science team looked like a small scientific lab? You’d have agents that propose hypotheses, run controlled tests, log outcomes, and escalate uncertain cases. In that world, Data Ops isn’t just support—it’s the operating system for discovery and care.

TL;DR: AI transformed data science operations when we stopped chasing one giant model and started building systems: smaller efficient models + model routing systems, RAG for accuracy, edge AI deployment for latency, and agentic AI systems for end-to-end workflow automation—backed by a growing open source AI ecosystem and more infrastructure efficiency.