I still remember the first time a model I was proud of died in production. The algorithm wasn’t wrong; a single upstream field had quietly changed from integers to strings over a weekend. That facepalm moment turned into a personal rule: in data science, the “boring” stuff (Data Quality Management, Data Governance, and Data Security and Privacy) is the stuff that keeps you employed. In this post I’m unpacking 39 data science tips I wish someone had taped to my monitor, plus a few 2026-era Data Science Trends (Edge AI, Synthetic Data, AI Agents, and Quantum Computing) that are already sneaking into normal workweeks.

1) The “oops” list: 9 habits that save projects

Most data science failures I’ve seen were not “bad modeling.” They were oops moments: the data changed, the meaning drifted, or nobody remembered why we made a key choice. These nine habits keep me honest and save projects before they get expensive.

Start with data sourcing checks (before any model)

- Schema check: I confirm column names, types, and units match what I expect.

- Freshness check: I verify the latest records are actually recent (and not stuck in a pipeline).

- Missingness scan: I measure nulls by column and by segment, not just overall.
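Here is a minimal sketch of those three checks in plain Python. The column names, the expected schema, and the 24-hour freshness window are all assumptions I made up for illustration; swap in whatever your source actually promises.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical expected schema: column name -> Python type.
EXPECTED_SCHEMA = {"user_id": int, "price_usd": float, "event_time": datetime}


def schema_violations(rows, expected=EXPECTED_SCHEMA):
    """Return (row_index, column, problem) for missing columns or wrong types."""
    problems = []
    for i, row in enumerate(rows):
        for col, typ in expected.items():
            if col not in row:
                problems.append((i, col, "missing column"))
            elif row[col] is not None and not isinstance(row[col], typ):
                got = type(row[col]).__name__
                problems.append((i, col, f"expected {typ.__name__}, got {got}"))
    return problems


def is_fresh(rows, now, max_lag=timedelta(hours=24), time_col="event_time"):
    """True if the newest record is no older than max_lag."""
    times = [r[time_col] for r in rows if isinstance(r.get(time_col), datetime)]
    return bool(times) and (now - max(times)) <= max_lag


def null_rate_by_segment(rows, column, segment_col):
    """Fraction of null values in `column`, grouped by `segment_col`."""
    totals, nulls = {}, {}
    for r in rows:
        seg = r.get(segment_col)
        totals[seg] = totals.get(seg, 0) + 1
        if r.get(column) is None:
            nulls[seg] = nulls.get(seg, 0) + 1
    return {seg: nulls.get(seg, 0) / n for seg, n in totals.items()}


# Toy data: the second row has a string ID and a missing price.
now = datetime(2026, 1, 5, tzinfo=timezone.utc)
rows = [
    {"user_id": 1, "price_usd": 9.99, "event_time": now - timedelta(hours=2), "region": "eu"},
    {"user_id": "2", "price_usd": None, "event_time": now - timedelta(hours=30), "region": "us"},
]
```

The string `"2"` in `user_id` is exactly the kind of integer-to-string change that bit me in the intro, and the per-segment null rate shows that all the missing prices sit in one region, which an overall average would hide.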

Write one page of assumptions (and revisit monthly)

I keep a single page called Model Assumptions: what must stay true for the model to work. Examples: “prices are in USD,” “labels arrive within 7 days,” “user IDs are stable.” I review it every month, because reality changes faster than code.

Track data quality with a tiny scorecard

I use a small data quality scorecard so issues show up early, not during a fire drill.

| Metric | What I check |
|---|---|
| Completeness | % non-null for key fields |
| Validity | Ranges, allowed values, type rules |
| Timeliness | Lag between event time and arrival |
| Uniqueness | Duplicate IDs and duplicate rows |
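The four rows of the scorecard can be computed in a few lines. This is a rough sketch, not a framework: the `scorecard` function, the field names, and the "amount must be non-negative" rule are my own illustrative assumptions.

```python
from datetime import datetime, timedelta


def scorecard(rows, key_field, id_field, is_valid, event_col, arrival_col):
    """Compute the four scorecard metrics for a list of dict records."""
    n = len(rows)
    if n == 0:
        return {}
    present = [r[key_field] for r in rows if r.get(key_field) is not None]
    ids = [r[id_field] for r in rows]
    return {
        # Share of rows where the key field is not null.
        "completeness": len(present) / n,
        # Share of rows whose key field is present and passes the rule.
        "validity": sum(1 for v in present if is_valid(v)) / n,
        # Worst lag between event time and arrival.
        "max_lag": max(r[arrival_col] - r[event_col] for r in rows),
        # Number of rows that repeat an ID already seen.
        "duplicate_ids": len(ids) - len(set(ids)),
    }


t0 = datetime(2026, 1, 1, 12, 0)
orders = [
    {"order_id": "a1", "amount": 20.0, "event_time": t0, "arrived_at": t0 + timedelta(minutes=5)},
    {"order_id": "a2", "amount": None, "event_time": t0, "arrived_at": t0 + timedelta(minutes=90)},
    {"order_id": "a2", "amount": -3.0, "event_time": t0, "arrived_at": t0 + timedelta(minutes=10)},
    {"order_id": "a3", "amount": 7.5, "event_time": t0, "arrived_at": t0 + timedelta(minutes=1)},
]
card = scorecard(orders, "amount", "order_id", lambda v: v >= 0, "event_time", "arrived_at")
```

I would run something like this on a schedule and alert on changes from the previous run, since a sudden drop matters more than the absolute number.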

Add a pre-mortem (my favorite awkward meeting)

“How will this fail in production?”

I ask the team to list failure modes: drift, broken joins, new categories, latency, privacy limits, and human workarounds.

Keep a decision log for dropped features

- I record what I removed and why (leakage, instability, cost, fairness risk).

- I include a quick note like `drop: device_id (high churn, unstable mapping)`.

2) Data Science Trends 2026 I’m actually planning for

When I look at data science trends 2026, I’m not chasing hype. I’m choosing moves that reduce latency, improve privacy, and make teams faster without losing control. These are the four shifts I’m actively planning around.

Edge Revolution: moving predictions closer to devices

I’m pushing more inference to the edge (phones, sensors, gateways) instead of routing everything through a big central pipeline. The win is simple: faster decisions, lower bandwidth costs, and fewer raw data transfers.

- Use edge AI when milliseconds matter (quality checks, safety alerts, personalization).

- Keep training centralized, but ship small models for inference.

- Plan for drift: edge models need monitoring and safe rollback.

AI Agents: orchestration for repetitive analytics (with guardrails)

I’m treating agentic AI as an orchestration layer for tasks like data pulls, metric checks, and draft insights. But I’m strict about guardrails because agents can “do” things, not just “say” things.

- Limit permissions (read-only by default).

- Require approvals for writes, deletes, or customer-facing outputs.

- Log everything for audit and debugging.

My rule: agents can automate work, but they can’t own accountability.
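To make those three guardrails concrete, here is a small sketch of a wrapper that every agent tool call could pass through. The action names, the `guarded_call` helper, and the `ApprovalRequired` exception are hypothetical; a real agent framework will have its own hooks for this.

```python
import logging

audit_log = logging.getLogger("agent.audit")

# Hypothetical action names. Anything not listed here is treated as a write.
READ_ONLY_ACTIONS = {"run_query", "fetch_metric"}


class ApprovalRequired(Exception):
    """Raised when an agent attempts a non-read action without sign-off."""


def guarded_call(action, handler, *, approved_by=None, **kwargs):
    """Run handler(**kwargs) only if the action is read-only or approved."""
    if action not in READ_ONLY_ACTIONS and approved_by is None:
        # Blocked attempts are logged too, so audits see what the agent tried.
        audit_log.warning("blocked action=%s args=%s", action, kwargs)
        raise ApprovalRequired(action)
    audit_log.info("allowed action=%s approved_by=%s args=%s", action, approved_by, kwargs)
    return handler(**kwargs)
```

The design choice that matters is the default: an unknown action is denied until a named human approves it, so adding a new tool never silently widens what the agent can do.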

Synthetic Data: the least-worst option

I use synthetic data when privacy rules block access, real data is scarce, or classes are badly imbalanced. It’s not “free accuracy,” but it can unblock testing and model development.

- Validate with holdout real data whenever possible.

- Check for leakage and unrealistic patterns.

Quantum Meets Cloud: what I watch vs. ignore

I’m watching cloud quantum services for optimization and simulation experiments, plus hybrid workflows. What I’m ignoring (for now): promises of near-term quantum advantage for everyday ML training. Until it’s repeatable and cost-clear, it stays in the lab.

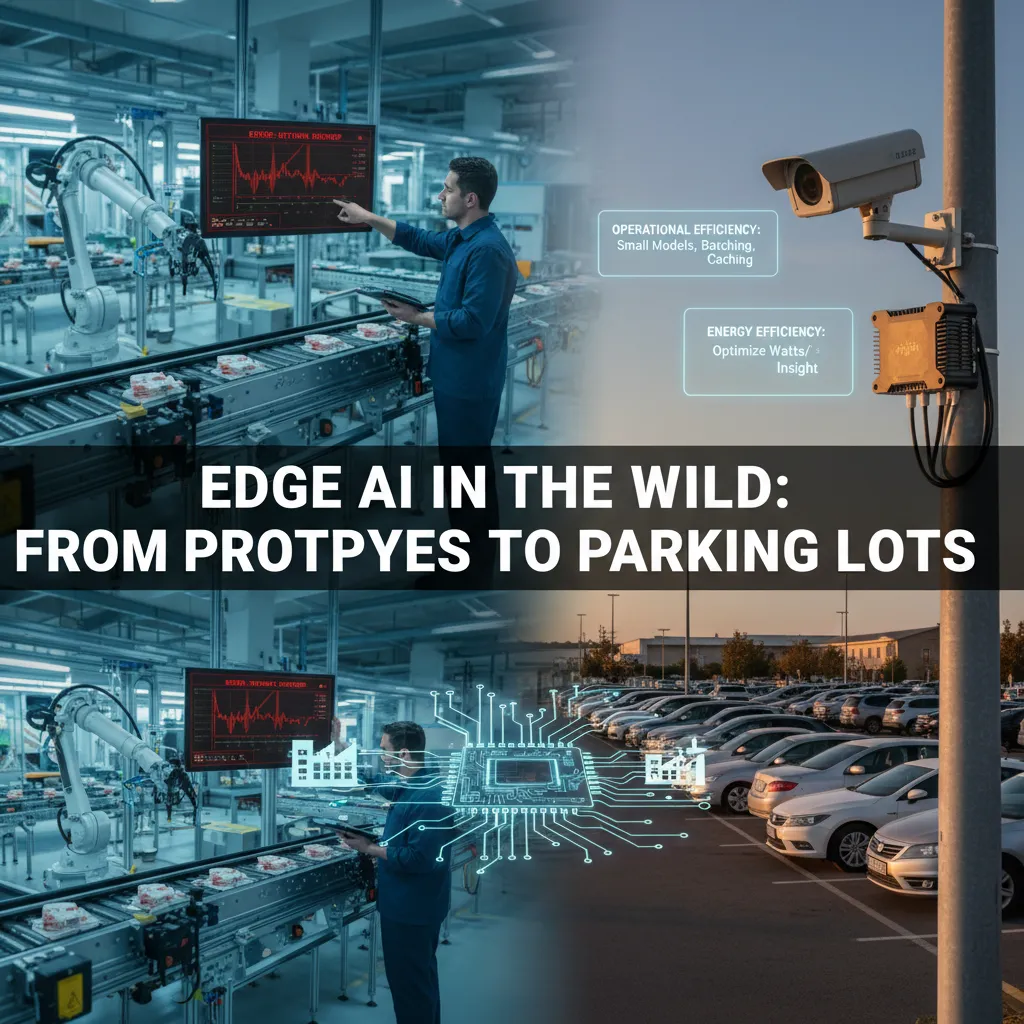

3) Edge AI in the wild: from prototypes to parking lots

Edge AI feels easy in a lab demo. Then you deploy it in a factory, a parking lot, or a retail aisle and learn the hard truth: the network drops (yes, it happens). One of my favorite real Edge AI examples is anomaly detection on a factory line. Cameras and sensors keep streaming, but Wi‑Fi can be spotty near heavy machines. If your model needs the cloud to decide, you miss defects. So I design the pipeline to run locally, store events, and sync later.

Operational efficiency before “just add GPUs”

When latency and cost matter, I start with smaller models and smarter data flow. I’ve seen teams burn weeks tuning accuracy while ignoring the basics that make edge deployments stable.

- Choose smaller models (quantized, pruned, or distilled) that fit device limits.

- Batch when possible: process frames in small groups instead of one-by-one.

- Cache results for repeated states (same scene, same reading) to avoid rework.
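Batching and caching combine naturally: group the inputs, then only send the model the values it has not scored yet. The sketch below uses a toy threshold rule as a stand-in for a real model, and `infer_batch` and `score_stream` are names I invented for the example.

```python
from itertools import islice


def batched(items, size):
    """Yield lists of up to `size` items from any iterable."""
    it = iter(items)
    while True:
        batch = list(islice(it, size))
        if not batch:
            return
        yield batch


def infer_batch(readings):
    """Hypothetical model call: one invocation scores a whole batch."""
    return [r > 80 for r in readings]  # toy anomaly rule


def score_stream(readings, batch_size=4):
    """Score readings in batches, calling the model only for unseen values."""
    cache = {}
    model_calls = 0
    results = []
    for batch in batched(readings, batch_size):
        # Unique values in this batch that are not cached yet.
        new = [r for r in dict.fromkeys(batch) if r not in cache]
        if new:
            model_calls += 1
            cache.update(zip(new, infer_batch(new)))
        results.extend(cache[r] for r in batch)
    return results, model_calls


flags, calls = score_stream([70, 95, 70, 70, 95, 70, 70, 95])
```

Here eight readings cost a single model call, because the second batch contains nothing new. On a real device I would bound the cache size and only cache inputs that are truly identical (quantized sensor states, say), not raw camera frames.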

Energy efficiency: watts per insight

My rule of thumb is simple: optimize watts per insight, not accuracy alone. A 1% accuracy gain is not worth doubling power draw if it drains batteries or overheats enclosures. I track energy like a first-class metric alongside precision/recall and latency.

“If the device can’t run all day, the model isn’t production-ready.”
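One way to put a number on "watts per insight" is energy per correct prediction: average power times latency gives joules per inference, and dividing by accuracy spreads that cost over the predictions that were actually right. That definition is my own assumption, and the figures below are invented purely to show the arithmetic.

```python
def joules_per_correct_prediction(avg_power_watts, latency_seconds, accuracy):
    """Energy for one inference (W x s = J) divided by the share that is correct."""
    if not 0 < accuracy <= 1:
        raise ValueError("accuracy must be in (0, 1]")
    return avg_power_watts * latency_seconds / accuracy


# Illustrative numbers only: the larger model gains one point of accuracy
# but draws twice the power at the same latency.
small = joules_per_correct_prediction(avg_power_watts=2.0, latency_seconds=0.05, accuracy=0.90)
large = joules_per_correct_prediction(avg_power_watts=4.0, latency_seconds=0.05, accuracy=0.91)
```

With these made-up inputs the larger model costs roughly twice the energy per correct prediction, which is the trade I would want to see on the same dashboard as precision and recall.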

Model hosting at the edge: updates are not guaranteed

Edge model hosting needs discipline. I treat every device like it might never update again.

- Versioning: embed model + data schema versions in every prediction payload.

- Rollbacks: keep the last known good model on-device.

- Offline safety: define behavior for stale models and delayed sync.

decision = local_model(x) if not online else hybrid_model(x)
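The versioning and rollback habits fit in a small amount of code. This is a sketch under my own assumptions: `Prediction` and `OnDeviceModels` are hypothetical names, the version strings are placeholders, and it assumes the model being replaced was healthy when the update arrived.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class Prediction:
    value: float
    model_version: str
    schema_version: str
    produced_at: str  # ISO 8601 timestamp in UTC


class OnDeviceModels:
    """Keeps the active model plus the last known good one for rollback."""

    def __init__(self, version, predict_fn):
        self.active = (version, predict_fn)
        self.last_good = (version, predict_fn)

    def install(self, version, predict_fn):
        # Assumes the current model is healthy; it becomes the rollback target.
        self.last_good = self.active
        self.active = (version, predict_fn)

    def rollback(self):
        self.active = self.last_good

    def predict(self, x, schema_version="v1"):
        version, fn = self.active
        stamp = datetime.now(timezone.utc).isoformat()
        return Prediction(fn(x), version, schema_version, stamp)


models = OnDeviceModels("1.0.0", lambda x: x * 2.0)
models.install("1.1.0", lambda x: x * 3.0)
models.rollback()  # e.g. after a failed health check
payload = asdict(models.predict(2.0))
```

Because every payload carries both versions, a delayed sync can still be interpreted correctly days later, even if the device has since been updated.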

4) Synthetic Data without the fairy dust (and with receipts)

I use synthetic data when it solves a real problem, not when it just makes a dashboard look bigger. In my workflow, I reach for it in three cases: class imbalance (one label is tiny), privacy constraints (I can’t move raw records), and rare events—the “nothing ever happens” problem where failures, fraud, or edge cases barely show up.

When I reach for synthetic data

- Class imbalance: I generate more minority examples so the model learns the pattern, not just the majority.

- Privacy constraints: I use synthetic rows to share structure without exposing people.

- Rare events: I simulate the few scenarios that matter most, like outages or safety incidents.

Clean, ethical data (with receipts)

If I can’t explain where synthetic data came from, I don’t ship it. I keep “receipts” in a short data card: provenance, generation method, and limits.

- Provenance: what real data trained the generator, and what was excluded.

- Method: rules, bootstrapping, GAN/VAE, agent-based sim, etc.

- Not for: decisions about individuals, fairness claims, or estimating real-world rates.

Synthetic data can protect privacy, but it doesn’t erase responsibility.

Bias detection: don’t amplify the wrong signals

I test whether synthetic augmentation makes shortcuts stronger (like learning “location” as a proxy for “risk”). I compare feature importance, error slices, and subgroup metrics before vs. after adding synthetic data.
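The subgroup comparison can be as simple as the sketch below. The group labels, the toy predictions, and the 2-point tolerance are assumptions for illustration; in practice I would use whatever slices and metrics the team already reports.

```python
def error_rate_by_group(records):
    """records: iterable of (group, y_true, y_pred). Returns {group: error rate}."""
    totals, errors = {}, {}
    for group, y_true, y_pred in records:
        totals[group] = totals.get(group, 0) + 1
        errors[group] = errors.get(group, 0) + (y_true != y_pred)
    return {g: errors[g] / totals[g] for g in totals}


def regressions(before, after, tolerance=0.02):
    """Groups whose error rate rose by more than `tolerance` after augmentation."""
    return {
        g: after[g] - before[g]
        for g in before
        if g in after and after[g] - before[g] > tolerance
    }


# Toy predictions on the same real evaluation rows, from two models:
# one trained on real data only, one trained on real + synthetic.
real_only_model = [("urban", 1, 1), ("urban", 0, 0), ("rural", 1, 1), ("rural", 0, 1)]
augmented_model = [("urban", 1, 1), ("urban", 0, 0), ("rural", 1, 0), ("rural", 0, 1)]

before = error_rate_by_group(real_only_model)
after = error_rate_by_group(augmented_model)
worse = regressions(before, after)
```

An overall accuracy number would blur this: the urban slice is unchanged while the rural slice got worse, which is the signal that the synthetic rows may be reinforcing a shortcut.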

Practical tip: keep a real-only evaluation set

I always hold out a real-only test set so I’m not grading my own homework. A simple rule:

train = real + synthetic

validate = real

test = real_only
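In code, the important detail is the order: carve the validation and test sets out of real rows first, then add synthetic rows to what is left. The function name and the 20% fractions below are my own illustrative choices.

```python
import random


def split_real_only_eval(real, synthetic, val_frac=0.2, test_frac=0.2, seed=0):
    """Hold out real rows for validation and test, then add synthetic to train."""
    rows = list(real)
    random.Random(seed).shuffle(rows)  # seeded so the split is reproducible
    n_test = int(len(rows) * test_frac)
    n_val = int(len(rows) * val_frac)
    test = rows[:n_test]
    validate = rows[n_test:n_test + n_val]
    train = rows[n_test + n_val:] + list(synthetic)
    return train, validate, test


real = [("real", i) for i in range(10)]
synthetic = [("synthetic", i) for i in range(5)]
train, validate, test = split_real_only_eval(real, synthetic)
```

One caveat this sketch does not enforce: if the generator was fitted on real rows that later land in the test set, the synthetic data can leak them back into training, so I would fit the generator only on the training portion of the real data.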

5) Data Governance that doesn’t feel like punishment

I used to think data governance meant big committees and endless docs. Now I treat it like product hygiene: small, clear rules that help teams move faster with fewer surprises.

Start small: 10 critical fields, not 10,000

For practical data governance, I begin with the 10 fields that drive revenue, risk, or reporting (like customer_id, order_status, net_revenue). For each one, I define three things: an owner, a plain-English definition, and access rules. That’s it. Once those are stable, expanding is easy.

- Owner: who approves changes and answers questions

- Definition: what the field means (and what it does not mean)

- Access: who can view, query, export, or share
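Those three attributes fit in a tiny registry that can live in version control. Everything specific here is a placeholder I made up: the owner address, the wording of the definition, and the role names.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class CriticalField:
    name: str
    owner: str
    definition: str
    # Maps an action ("view", "query", "export", "share") to the roles allowed.
    allowed_roles: dict = field(default_factory=dict)

    def can(self, role, action):
        """True only if the role is explicitly listed for the action."""
        return role in self.allowed_roles.get(action, set())


REGISTRY = {
    f.name: f
    for f in [
        CriticalField(
            name="net_revenue",
            owner="finance-data@example.com",  # hypothetical owner
            definition="Order revenue after refunds and discounts; excludes tax.",
            allowed_roles={"view": {"analyst", "finance"}, "export": {"finance"}},
        ),
    ]
}
```

Actions that are not listed (like `share` above) are denied by default, which keeps the registry short and makes every new permission a reviewed change.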

Data + AI governance: make audits boring

For data and AI governance, I connect model decisions to data lineage: which tables, versions, and transformations fed training and inference. When an audit happens, I don’t want “archaeology.” I want a traceable path from prediction → features → source data → owner.

Good governance turns “Why did the model do that?” into a searchable answer.

My take on data mesh (works great… until it doesn’t)

I like data mesh and decentralized, federated governance because domain teams move quickly and feel ownership. It works great… until definitions drift. My compromise: domains own data products, but shared fields (customer, revenue, identity) follow a central standard and review.

Security as default: least privilege + logging

For data security, I use least privilege and strong logging. I also treat privacy reviews as a normal sprint task, not a last-minute blocker.

- Role-based access + time-bound approvals

- Query and export logs for sensitive datasets

- Privacy checks in the definition of done

6) AI Agents & Autonomous Workflows: my “intern rule”

When I build agentic AI systems, I follow my “intern rule”: an agent can move fast, but it should not be the final decision-maker. I treat it like a smart intern—great at first drafts, great at sorting noise, and always ready to ask for review.

Agentic AI use case: data quality triage (fast, not final)

My favorite practical use case is triaging data quality alerts. The agent reads monitoring signals, checks recent pipeline changes, and drafts an incident summary. It can group alerts into likely root causes (schema drift, missing partitions, late arrivals) and suggest who to page. But I keep the human on the hook for the final call.

- Agent does: cluster alerts, pull context, draft a timeline, propose next checks

- Human does: confirm impact, approve comms, decide rollback or hotfix

Autonomous workflows: clear boundaries

Autonomous workflows only work when boundaries are explicit. I separate actions into “execute” vs “recommend,” and I write those rules down.

- Execute: open a ticket, attach logs, run read-only queries, notify on-call

- Recommend: backfill data, change thresholds, pause a job, reroute traffic
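Writing the rules down can literally mean a lookup table. In this sketch the action names are my own shorthand for the bullets above, and anything the table does not know about falls into the "recommend" bucket.

```python
from enum import Enum


class Mode(Enum):
    EXECUTE = "execute"
    RECOMMEND = "recommend"


# Hypothetical action names mirroring the two lists above.
POLICY = {
    "open_ticket": Mode.EXECUTE,
    "attach_logs": Mode.EXECUTE,
    "run_readonly_query": Mode.EXECUTE,
    "notify_on_call": Mode.EXECUTE,
    "backfill_data": Mode.RECOMMEND,
    "change_threshold": Mode.RECOMMEND,
    "pause_job": Mode.RECOMMEND,
    "reroute_traffic": Mode.RECOMMEND,
}


def plan(actions):
    """Split proposed actions into ones to run now and ones to queue for a human."""
    to_run, to_review = [], []
    for action in actions:
        mode = POLICY.get(action, Mode.RECOMMEND)  # unknown actions need review
        (to_run if mode is Mode.EXECUTE else to_review).append(action)
    return to_run, to_review
```

For example, `plan(["open_ticket", "pause_job", "drop_table"])` runs the ticket immediately and queues the other two, including the action nobody thought to list.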

Explainable AI: plain-language rationale

If an agent action can change customer outcomes (pricing, eligibility, ranking, fraud flags), I require a plain-language rationale. I want a short explanation that a non-technical teammate can understand, plus the key signals used.

“If you can’t explain it simply, the agent can’t ship it automatically.”

Mixture of Experts + open source AI for cheap experiments

Before committing to a vendor, I test with open source AI and a simple mixture of experts setup: one model for summarizing incidents, another for log parsing, and a lightweight router. It keeps costs low while I learn what should be automated—and what should stay human-reviewed.

7) Quantum Computing (plus a reality check) for data folks

When I hear quantum computing, I translate it into a simple question: will this help me solve a real data science problem faster or better? Today, the most relevant areas for data folks are still narrow, but worth understanding.

What problems might matter (and what’s still lab talk)

In my work, quantum ideas show up most often in:

- Optimization: routing, scheduling, portfolio-style tradeoffs, feature selection, and other “best choice under constraints” problems.

- Sampling: some probabilistic modeling and simulation tasks where drawing good samples is the hard part.

What I treat as mostly lab talk (for now): general “quantum speedups” for everyday ML training, or replacing my GPU stack next quarter. Most teams won’t see a clear win without very specific problem structure and careful benchmarking.

Quantum acceleration: how I decide to run an experiment

I only try quantum when I can keep it tiny, time-boxed, and measurable. My checklist:

- Define one metric (runtime, solution quality, cost).

- Build a small baseline (classic solver, heuristic, or GPU/CPU approach).

- Run a cloud quantum or quantum-inspired method on the same toy version.

- Stop after 1–2 days if the signal is weak.

I’ll even write a quick note like `success = 5% better objective under same time budget` to avoid vague “it’s interesting” results.
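That success note is easy to turn into a check I can commit before running anything. This is a sketch with hypothetical parameter names; it measures relative gain against the baseline objective and treats any overrun of the baseline's time as a failure.

```python
def experiment_succeeded(
    baseline_objective,
    candidate_objective,
    baseline_seconds,
    candidate_seconds,
    min_gain=0.05,
    minimize=True,
):
    """True if the candidate beats the baseline by min_gain within the same time budget."""
    if candidate_seconds > baseline_seconds:
        return False  # over budget, regardless of solution quality
    if baseline_objective == 0:
        raise ValueError("relative gain is undefined for a zero baseline")
    if minimize:
        gain = (baseline_objective - candidate_objective) / abs(baseline_objective)
    else:
        gain = (candidate_objective - baseline_objective) / abs(baseline_objective)
    return gain >= min_gain
```

A route cost that drops from 100 to 94 in less time passes; a drop to 97, or a better answer that took twice as long, does not. Fixing the threshold up front is what keeps the 1–2 day time box honest.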

Quantum meets cloud: learn without buying anything

Cloud access is the practical entry point. I can explore quantum SDKs, simulators, and limited hardware runs without purchasing specialized gear. That makes it easier to learn the workflow: circuits, constraints, noise, and how results degrade outside ideal conditions.

Career tip: spend 5% of your curiosity budget

Don’t bet your whole roadmap on quantum—bet 5% of your curiosity budget.

I keep my core skills (data quality, modeling, deployment, governance) as the 95%, and use the 5% to stay literate and ready if a real use case appears.

Conclusion: 39 tips, one mindset—build for tomorrow

When I step back from these 39 data science tips, I see one clear pattern: high-quality data plus pragmatic governance plus trend literacy will beat “hero modeling” almost every time. Edge AI, MLOps, privacy, and model monitoring all matter, but they only work when the basics are solid. If the data is messy, if no one owns the pipeline, or if we ignore what’s changing in tools and rules, even the smartest model becomes a fragile demo.

I think about the failure story from the intro—the one where a model looked great in a notebook and then fell apart in the real world. Today, I’d catch that earlier. I’d add tests that fail fast when schemas drift or key metrics break. I’d set up monitoring so I can see data quality, latency, and prediction health in production. And I’d make ownership explicit, so it’s clear who fixes what when something goes wrong. That mix of testing, monitoring, and accountability turns surprises into routine maintenance.

To keep myself honest, I use a simple weekly ritual. I pick one small governance fix—maybe a clearer data contract, a tighter access rule, or a better audit trail. I run one quality check—freshness, duplicates, missing values, or label leakage. Then I read one trend item: a short paper, a vendor update, or a new policy note on AI governance. After that, I ship something small, because progress compounds when it’s real.

Data science is like cooking: use fresh ingredients, keep a clean kitchen, and set a timer so you don’t burn the sauce.

That’s the mindset I’m taking forward: build for tomorrow, not just for the next chart.

TL;DR: If you want data science work that survives contact with reality: obsess over Data Quality, design Data Governance early, use Synthetic Data thoughtfully, watch the Edge AI shift, and treat AI Agents like interns—helpful, fast, and occasionally chaotic.