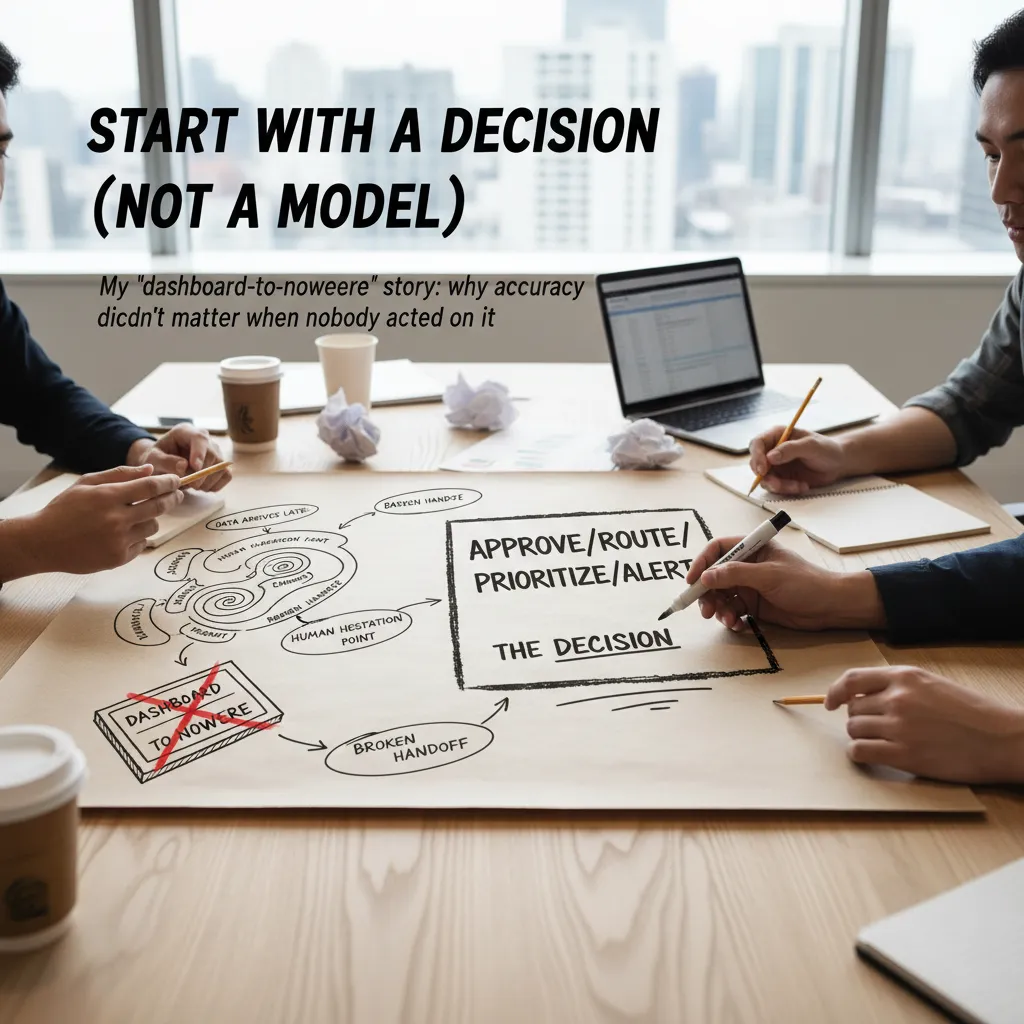

The first time I tried to “add AI” to a perfectly fine analytics pipeline, I did what lots of teams do: I grabbed a shiny model, wired it to a dashboard, and waited for magic. What I got instead was a late-night Slack thread about drifting metrics, angry stakeholders, and an uncomfortable question: “So… what decision is this actually making?” This outline is the playbook I wish I had then—practical steps, a few scars, and a clear-eyed look at what’s changing fast (hello, copilots and the EU AI Act).

1) Start with a Decision (Not a Model)

I once built what I now call a dashboard-to-nowhere. The model scored leads with great accuracy, the charts were clean, and the weekly email looked impressive. But nothing changed. Sales still worked the same accounts, managers still trusted gut feel, and the “AI” lived in a tab nobody opened. That’s when I learned a hard lesson: accuracy doesn’t matter if nobody acts on the output.

Define the decision you’re improving

Before I touch features or algorithms, I write the decision in plain words. Not “predict churn,” but:

- Approve this refund or send it to review

- Route this ticket to the right team

- Prioritize these accounts for outreach today

- Alert an analyst when a metric crosses a threshold

If I can’t say it in one sentence, the use case is still fuzzy.

Map the workflow (where reality breaks)

In any AI implementation in data science, the workflow is the product. I map the steps from data arrival to human action and look for friction:

- Where do people hesitate or ask for a second opinion?

- Where does data arrive late (or missing) and force guesses?

- Where do handoffs break between teams or tools?

This is usually where the “AI” should plug in—not in a separate dashboard.

Pick a use case ladder (don’t jump to the top)

- Descriptive: what happened?

- Predictive: what will happen?

- Prescriptive: what should we do?

- Decision intelligence: the recommendation is embedded in the system people already use

Back-of-napkin sanity check

| Scenario | Cost | Example |
|---|---|---|
| Wrong decision | High/Medium/Low | False fraud alert blocks a good customer |
| Do nothing | High/Medium/Low | Fraud slips through for another week |

If “do nothing” is cheap, I keep it descriptive. If “wrong” is expensive, I design for human review.
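The napkin rule can be written down as a few lines of Python. This is a minimal sketch, not a scoring method: `napkin_check`, the `LEVELS` mapping, and the third "candidate for automation" outcome are my own illustrative assumptions, and checking the "do nothing" cost first is just one reasonable ordering.

```python
# Hypothetical helper: turns the two rows of the napkin table into a rough
# starting point. Names and the final fallback are illustrative assumptions.
LEVELS = {"low": 1, "medium": 2, "high": 3}


def napkin_check(cost_wrong: str, cost_do_nothing: str) -> str:
    """Map two coarse cost ratings (High/Medium/Low) to a recommendation."""
    wrong = LEVELS[cost_wrong.lower()]
    idle = LEVELS[cost_do_nothing.lower()]
    if idle == LEVELS["low"]:
        # Doing nothing is cheap, so reporting is enough for now.
        return "keep it descriptive"
    if wrong == LEVELS["high"]:
        # Mistakes are expensive, so a person stays in the loop.
        return "design for human review"
    # Assumption: the rule above does not cover this case explicitly.
    return "candidate for automation"


print(napkin_check(cost_wrong="High", cost_do_nothing="High"))
```

The point is less the code than the habit: both ratings get written down before any modeling starts.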

2) Audit Your Data Like a Slightly Paranoid Detective

Before I let any AI model touch my data, I do what I call a data walk. It’s simple: I pick one real record and follow it end-to-end—source → warehouse/lakehouse → feature → prediction → action. This sounds slow, but it’s the fastest way I know to spot hidden breaks that dashboards never show.

Do a “data walk” (one record, full journey)

- Source: Where did the record come from (app event, CRM, sensor)? Who owns it?

- Warehouse/Lakehouse: Did it land on time? Did any fields change type or meaning?

- Feature: How is it transformed (joins, aggregations, windows)?

- Prediction: Which model version used it, and what inputs were actually passed?

- Action: What happened next (email sent, fraud blocked, price changed)?

Pick your architecture vibe (and be honest about constraints)

If I’m building on a cloud-native data platform, I can lean on managed storage, scalable compute, and shared governance. If I’m dealing with local/edge constraints (factories, hospitals, retail stores), I plan for limited bandwidth, offline windows, and stricter access controls. The “best” setup is the one that matches how data really moves.

Data mesh: ownership helps… until it doesn’t

I like the idea of data mesh: domains own their data products and publish them with clear contracts. It works when teams agree on naming, quality checks, and SLAs. It becomes chaos when every domain invents its own definitions of “customer,” “active,” or “revenue.” I push for shared standards and a small central team to enforce them.

Synthetic data for safe pipeline testing

In regulated industries, I often use synthetic data generation to test pipelines without exposing real people. It lets me validate joins, feature logic, and model scoring safely.

Track “quiet failures” that wreck models

- Missing joins that silently drop rows

- Delayed batches that shift labels

- Duplicate customer IDs that inflate counts

- Schema drift that turns numbers into strings

My rule: if a failure can happen quietly, assume it already has.
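Three of these quiet failures can be caught with very small checks. The sketch below uses only the standard library and plain lists of dicts; the function names and the sample records are hypothetical, and in a real pipeline the same logic would more likely live in SQL tests or a data quality tool.

```python
from collections import Counter


def dropped_by_join(left_keys, right_keys):
    """Keys on the left side that an inner join would silently drop."""
    right = set(right_keys)
    return [k for k in left_keys if k not in right]


def duplicate_ids(ids):
    """IDs that appear more than once and would inflate counts."""
    return sorted(k for k, n in Counter(ids).items() if n > 1)


def schema_drift(rows, expected_types):
    """Return (row_index, field, actual_type) for each value of the wrong type."""
    problems = []
    for i, row in enumerate(rows):
        for field, expected in expected_types.items():
            value = row.get(field)
            if value is not None and not isinstance(value, expected):
                problems.append((i, field, type(value).__name__))
    return problems


# Made-up sample data, for illustration only.
order_customers = ["c1", "c2", "c3"]
customer_table = ["c1", "c3", "c3"]
payments = [{"amount": 10.0}, {"amount": "10.0"}]

print(dropped_by_join(order_customers, customer_table))
print(duplicate_ids(customer_table))
print(schema_drift(payments, {"amount": float}))
```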

3) Choose Your AI Building Blocks (Copilot, Model, or Agent?)

Autonomous AI analytics copilots: when natural language helps (and when it hurts)

I like analytics copilots because they let me ask, “What changed last week?” instead of clicking through five dashboards. For quick exploration, summaries, and drafting SQL, a natural-language question can stand in for a dashboard.

But I avoid copilots for anything that needs repeatable, audited reporting. If a metric drives bonuses, compliance, or customer billing, I keep a fixed dashboard and a tested pipeline. Copilots can misread filters, invent explanations, or hide assumptions.

Model strategy: open-source vs domain-specific vs managed APIs

When I implement AI in data science, I pick the simplest model option that meets the need:

- Open-source models: best when I need control, on-prem deployment, or custom fine-tuning. Trade-off: more ops work.

- Domain-specific models: great for tasks like medical text, legal docs, or code. They often beat general models with less prompt work.

- Managed APIs: fastest path to value. Trade-off: cost, vendor limits, and data governance constraints.

Generative AI APIs deployment: my practical checklist

Before I ship anything using a generative AI API, I run this checklist:

- Latency: can the user wait, or do I need caching/streaming?

- Cost: estimate tokens per request and set hard budgets/quotas.

- Evals: define success with test prompts, golden answers, and regression checks.

- Data leakage: remove secrets/PII, use redaction, and confirm retention policies.
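For the cost item, a rough estimate plus a hard cap goes a long way. This is a sketch under stated assumptions: the prices are placeholders rather than any vendor's real rates, and `estimate_request_cost` and `BudgetGuard` are hypothetical names, not part of any API.

```python
def estimate_request_cost(input_tokens, output_tokens,
                          price_in_per_1k, price_out_per_1k):
    """Estimated cost of one request, given per-1,000-token prices."""
    return (input_tokens / 1000 * price_in_per_1k
            + output_tokens / 1000 * price_out_per_1k)


class BudgetGuard:
    """Refuses requests once a daily budget would be exceeded."""

    def __init__(self, daily_budget):
        self.daily_budget = daily_budget
        self.spent = 0.0

    def allow(self, cost):
        if self.spent + cost > self.daily_budget:
            return False  # hard stop: the caller should queue or degrade
        self.spent += cost
        return True


# Placeholder prices, for illustration only.
cost = estimate_request_cost(input_tokens=2000, output_tokens=500,
                             price_in_per_1k=0.5, price_out_per_1k=2.0)
guard = BudgetGuard(daily_budget=5.0)
print(cost, [guard.allow(cost) for _ in range(3)])
```

In production the counter would need to be shared across workers and reset daily; the sketch keeps it in memory to show the shape of the check.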

Smaller models at the edge: distillation and quantization

One underrated move is pushing smaller models closer to where data lives. With distillation (teach a small model from a big one) and quantization (use lower precision), I can cut cost and latency while keeping “good enough” quality for many tasks.
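To make "use lower precision" concrete, here is a toy version of symmetric 8-bit quantization on a list of weights. It is a sketch of the idea only: real toolchains quantize per tensor or per channel and handle activations too, and the function names here are my own.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# Rounding error per weight is at most half a quantization step.
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized, worst_error <= scale / 2 + 1e-12)
```

Each weight now fits in one byte instead of four or eight, at the price of a small, bounded rounding error. Whether that error is "good enough" is what the evals are for.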

My rule is opinionated: start boring (logistic regression), then earn your way to transformers.

If a baseline like logistic_regression solves the problem, I ship it. If it fails, I upgrade with evidence, not hype.

4) Build the Guardrails: Privacy, Governance, and “Future Me” Maintenance

When I implement AI in data science, I treat guardrails as part of the build, not paperwork I “handle later.” If the model touches customer, employee, or health data, I assume I will be asked: How did you protect it, how do you explain it, and how will you keep it safe over time?

Privacy-enhancing technologies (PETs): match the tool to the risk

PETs help me reduce exposure without killing usefulness. I pick based on data sensitivity and where the data lives:

- Federated learning: train across devices or sites so raw data stays local.

- Differential privacy: add controlled noise so outputs don’t reveal individuals.

- Secure enclaves: isolate sensitive processing in protected hardware.

I don’t use all three by default. I choose the smallest change that meets the risk level and still supports the business goal.
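As one example of "controlled noise", here is a minimal sketch of the Laplace mechanism applied to a count. It assumes a simple counting query (sensitivity 1) and a privacy parameter `epsilon` that you choose; `private_count` is a hypothetical helper, and a real deployment should use a vetted differential privacy library and track the privacy budget across queries.

```python
import random


def laplace_noise(scale, rng):
    """Laplace(0, scale) noise, drawn as the difference of two exponentials."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(values, predicate, epsilon, rng=None):
    """Count matching values, then add noise calibrated to epsilon."""
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for v in values if predicate(v))
    # Adding or removing one person changes a count by at most 1,
    # so the noise scale is sensitivity / epsilon = 1 / epsilon.
    return true_count + laplace_noise(1 / epsilon, rng)


ages = [23, 35, 41, 52, 67, 29, 38]  # made-up data
print(private_count(ages, lambda age: age >= 40, epsilon=1.0))
```

Smaller `epsilon` means more noise and stronger privacy. The answer is still useful in aggregate, but no single released count can be trusted to the exact unit.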

Regulation-ready AI analytics: design for compliance early

I treat EU AI Act readiness as a design input. That means I document the intended use, users, and impact up front, and I keep evidence as I go (data sources, tests, approvals). It’s much easier than trying to “retrofit” compliance after launch.

Governance and explainability that real people can read

My baseline governance stack is simple:

- Model cards with purpose, training data summary, metrics, and known limits.

- Data lineage so I can trace features back to systems and owners.

- Plain-language limitations (what it can’t do, where it fails, who shouldn’t use it).

Monitoring like I mean it: drift, bias, and silent failure

I set alerts for data drift, performance drops, and bias signals across key groups. I also watch for “silent failure,” like pipelines that keep running but stop updating correctly. A tiny check like row_count or null_rate thresholds can save weeks.
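The row_count and null_rate checks really can be this small. The sketch below works on a batch represented as a list of dicts; the thresholds and the `batch_health` name are assumptions for illustration, and sensible limits should come from your own history.

```python
def null_rate(rows, field):
    """Share of rows where the field is missing or None."""
    if not rows:
        return 1.0  # an empty batch is treated as fully missing
    missing = sum(1 for row in rows if row.get(field) is None)
    return missing / len(rows)


def batch_health(rows, field, min_rows, max_null_rate):
    """Return a list of human-readable problems; empty means healthy."""
    issues = []
    if len(rows) < min_rows:
        issues.append(f"row_count {len(rows)} below minimum {min_rows}")
    rate = null_rate(rows, field)
    if rate > max_null_rate:
        issues.append(
            f"null_rate for {field} is {rate:.2f}, above {max_null_rate:.2f}"
        )
    return issues


batch = [{"amount": 12.5}, {"amount": None}, {"amount": 8.0}, {}]
for issue in batch_health(batch, "amount", min_rows=100, max_null_rate=0.1):
    print(issue)
```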

My tiny but mighty habit

Before launch, I write a one-paragraph memo: “How this can go wrong.” I list the top failure modes, who gets harmed, and what I will monitor.

5) Go Real-Time (Only If It Hurts Not To)

I only push for real-time AI when waiting for the next batch run actually costs money or creates risk. If your business can act tomorrow, don’t build a streaming system today. But when seconds matter (fraud approvals, logistics reroutes, manufacturing line stops, or retail stockouts), real-time anomaly detection becomes worth the effort.

When seconds matter (and when they don’t)

- Fraud: block or step-up verify before the transaction clears.

- Logistics: detect delays early and reassign drivers or routes.

- Manufacturing: catch sensor drift before it becomes scrap.

- Retail: spot demand spikes and adjust replenishment fast.

Anomaly detection you can trust

Real-time anomaly detection fails most often because the alerts are noisy, not because the model is weak. I design alerts so humans can trust them:

- Define “actionable”: an alert must map to a clear next step.

- Use thresholds with context: compare to seasonality, location, and recent history.

- Explain the signal: show top drivers (even simple ones) and confidence.

- Rate-limit: group similar alerts and suppress repeats.
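Two of the points above, thresholds with context and rate-limiting, fit in a short sketch. It assumes `history` already holds comparable values (for example, the same store at the same hour in previous weeks), uses a plain z-score as the threshold, and treats timestamps as seconds. The names and the default of 3.0 are illustrative choices, not a recommendation.

```python
from statistics import mean, stdev


def is_anomalous(value, history, z_threshold=3.0):
    """Flag a value that sits far from comparable recent history."""
    if len(history) < 2:
        return False  # not enough context to judge
    spread = stdev(history)
    if spread == 0:
        return value != history[0]
    return abs(value - mean(history)) / spread > z_threshold


class AlertLimiter:
    """Suppress repeat alerts for the same key within a cooldown window."""

    def __init__(self, cooldown_seconds):
        self.cooldown = cooldown_seconds
        self.last_sent = {}

    def should_send(self, key, now):
        last = self.last_sent.get(key)
        if last is not None and now - last < self.cooldown:
            return False
        self.last_sent[key] = now
        return True


same_hour_last_weeks = [100, 102, 98, 101, 99]  # made-up sales counts
limiter = AlertLimiter(cooldown_seconds=60)
if is_anomalous(120, same_hour_last_weeks) and limiter.should_send("store-1", now=0):
    print("alert: store-1 sales far above comparable history")
```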

Streaming architecture sketch

My mental model is simple:

events → features → model → action loop

In practice, that means events from apps/sensors, a feature layer that stays consistent with training, a low-latency model service, and an action loop that writes outcomes back for monitoring and retraining.

event_in → feature_transform → score() → alert/auto_action → log_outcome
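The loop above can be sketched as plain functions, one per arrow. Everything here is a stand-in: the event fields, the hand-written `score` rule (in place of a real model service), and the 0.8 review threshold are hypothetical and only show how the pieces connect.

```python
def feature_transform(event):
    """Build features the same way the training pipeline would."""
    return {
        "amount": float(event["amount"]),
        "is_new_device": int(event["new_device"]),
    }


def score(features):
    """Stand-in for a low-latency model call; returns a risk in [0, 1]."""
    risk = 0.0
    if features["amount"] > 1000:
        risk += 0.5
    if features["is_new_device"]:
        risk += 0.4
    return risk


def decide(risk, review_threshold=0.8):
    """Turn a score into an action; risky cases go to a human."""
    return "send_to_review" if risk >= review_threshold else "allow"


def process(event, outcome_log):
    """event_in -> feature_transform -> score -> action -> log_outcome."""
    features = feature_transform(event)
    risk = score(features)
    action = decide(risk)
    outcome_log.append(
        {"event_id": event["id"], "features": features,
         "risk": risk, "action": action}
    )
    return action


log = []
print(process({"id": "e1", "amount": "2500", "new_device": True}, log))
```

Logging the features next to the action is what lets monitoring and retraining later see exactly what the model saw.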

Automated responses (and pager fatigue)

Yes, automated responses within seconds are the promise. The risk is pager fatigue: too many “urgent” alerts train people to ignore the system. I start with human-in-the-loop, then automate only the safest actions (like adding a review step, not canceling orders).

The wild card: the overnight promotion

One time, an “overnight promotion” launched without warning and broke our demand model—suddenly every store looked like an anomaly. Ask me how I know. Now I add a simple safeguard: detect campaign flags, price changes, and traffic spikes, and switch the model into a “promotion mode” before it wakes everyone up.
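A safeguard like this can start as a simple switch. In the sketch below, the signal names (`campaign_active`, `price_change_pct`, `traffic_vs_baseline`) and every limit are hypothetical. The idea is only that known business events loosen the alert threshold before the anomaly model fires.

```python
def in_promotion_mode(signals, price_change_limit=0.10,
                      traffic_multiplier_limit=2.0):
    """True when any known promotion signal is present."""
    return (
        signals.get("campaign_active", False)
        or abs(signals.get("price_change_pct", 0.0)) >= price_change_limit
        or signals.get("traffic_vs_baseline", 1.0) >= traffic_multiplier_limit
    )


def alert_threshold(signals, normal=3.0, promotion=6.0):
    """Use a looser anomaly threshold while a promotion is running."""
    return promotion if in_promotion_mode(signals) else normal


print(alert_threshold({"campaign_active": True}))
print(alert_threshold({"traffic_vs_baseline": 1.2}))
```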

6) Operationalize: MLOps, AI Factories, and the Boring Stuff That Wins

Build an “AI factory,” not a one-off model

When I implement AI in data science, I try to stop thinking in terms of “a model” and start thinking in terms of an AI factory. That means an infrastructure that makes shipping models repeatable: platform + methods + data + algorithms. In practice, it’s versioned datasets, reusable feature pipelines, standard evaluation, and a clear path from notebook to production. The goal is boring consistency, not hero work.

Make AI infrastructure efficient (before finance calls)

AI infrastructure can get expensive fast. I’ve seen “small experiments” become “please don’t 10x our bill” moments. So I treat cost as a first-class metric:

- Cost controls: budgets, alerts, and per-project quotas.

- GPU scheduling: shared pools, job queues, and auto-shutdown for idle runs.

- Right-sizing: smaller models, mixed precision, and caching where it helps.

Release in thin slices: shadow, canary, rollback

Operationalizing AI means reducing risk. I prefer thin-slice releases:

- Shadow mode: run the model in parallel, but don’t let it affect users.

- Canary: expose a small percentage of traffic and watch metrics closely.

- Rollback plans: define the switch-back steps before launch.

I also log inputs/outputs so we can debug drift, bias, and edge cases later.
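Shadow mode, canary, and rollback can share one routing function. This is a minimal sketch, assuming models are plain callables and traffic is split by a stable hash of a user ID. `serve` and `canary_bucket` are hypothetical names, and a real system would usually do this in a gateway or feature-flag service.

```python
import hashlib


def canary_bucket(user_id: str) -> int:
    """Stable bucket in [0, 100), so a user always lands on the same side."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def serve(user_id, features, stable_model, candidate_model,
          canary_percent, log):
    """Run both models, log both outputs, return only one to the user."""
    stable_out = stable_model(features)
    candidate_out = candidate_model(features)  # always computed and logged
    use_candidate = canary_bucket(user_id) < canary_percent
    log.append(
        {"user_id": user_id, "features": features,
         "stable": stable_out, "candidate": candidate_out,
         "served": "candidate" if use_candidate else "stable"}
    )
    return candidate_out if use_candidate else stable_out


log = []
result = serve("user-42", {"amount": 10}, lambda f: "old", lambda f: "new",
               canary_percent=0, log=log)
print(result, log[0]["candidate"])
```

With `canary_percent=0` this is pure shadow mode, raising it to a small number is the canary, and setting it back to 0 is the rollback.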

Distributed AI networks and linked “superfactories”

Compute placement is getting strategic. Some workloads belong near the data (latency, privacy, cost), while others fit centralized “superfactories” with shared GPUs and strong governance. I plan for a distributed AI network where models, data contracts, and monitoring are consistent across teams—even if the compute runs in different places.

Close the loop with kind post-launch reviews

After launch, I schedule a review where we admit what we misunderstood—kindly. We ask: What failed in the data? What did users do that we didn’t expect? What alerts were missing? That feedback becomes the next iteration of the AI factory.

Conclusion: My “Five Trends in AI” Reality Check for 2026

When I look back at these steps for implementing AI in data science, I see a simple pattern: the teams that win are not chasing models, they are building repeatable decisions. That is why my 2026 reality check starts with the basics (clear problem framing, clean data pipelines, careful evaluation, and ongoing monitoring) and then connects those steps to the trends I see in AI and data analytics.

Copilots will keep spreading, but the best ones will be tied to your workflow: drafting SQL, suggesting features, writing tests, and documenting assumptions. Data mesh will matter because AI projects fail when data ownership is fuzzy; domain teams need shared standards so models can be trained and trusted. PETs (privacy-enhancing technologies) will move from “nice idea” to “must-have” as more AI in data science touches sensitive data. Real-time anomaly detection will grow because businesses want fast signals, not monthly reports. And AI factories—the boring, valuable machinery of templates, CI/CD, model registries, and governance—will be the difference between one-off demos and production AI.

What’s next in AI, in my view, is fewer flashy demos and more embedded decision intelligence: models that quietly improve pricing, routing, fraud checks, and support triage inside the tools people already use. That shift rewards teams who treat implementation as a lifecycle, not a launch.

I do want to add a gentle warning about the current agentic hype cycle. Agents are exciting, but they can also create hidden risk: unclear goals, tool misuse, and hard-to-debug failures. I’m still experimenting because the upside is real, but I’m doing it with tight scopes and strong guardrails.

My mini action plan is simple: pick one decision, start with one dataset, add one guardrail, set up one monitor, and run one retro to learn what broke and what worked. If you want to stay “anti-hype,” write your own checklist. I’ll share mine:

Does this model improve a decision, can we measure it, can we explain failures, and can we keep it safe over time?

TL;DR: Pick one decision to improve, not a model to deploy. Build a data foundation (often cloud-native), design for privacy and governance, ship in thin slices with monitoring, and use copilots/agents where they actually reduce work. Align with 2026 trends: copilots, data mesh, PETs, real-time anomaly detection, and AI factories.