I remember sipping bad conference coffee while a panelist joked that AI news now moves faster than my email inbox. In April, that felt truer than ever. Between a major Microsoft–OpenAI recapitalization, product pushes across Microsoft and Google, and OpenAI’s pivot toward audio, I kept a running list on my phone. This post is that list — narrated in first person, with a few opinions, one small tangent about screenless interfaces I can’t help but make, and practical notes if you care about governance, IP, or where corporate AI is headed.

Microsoft–OpenAI recap: governance, stake and exclusivity

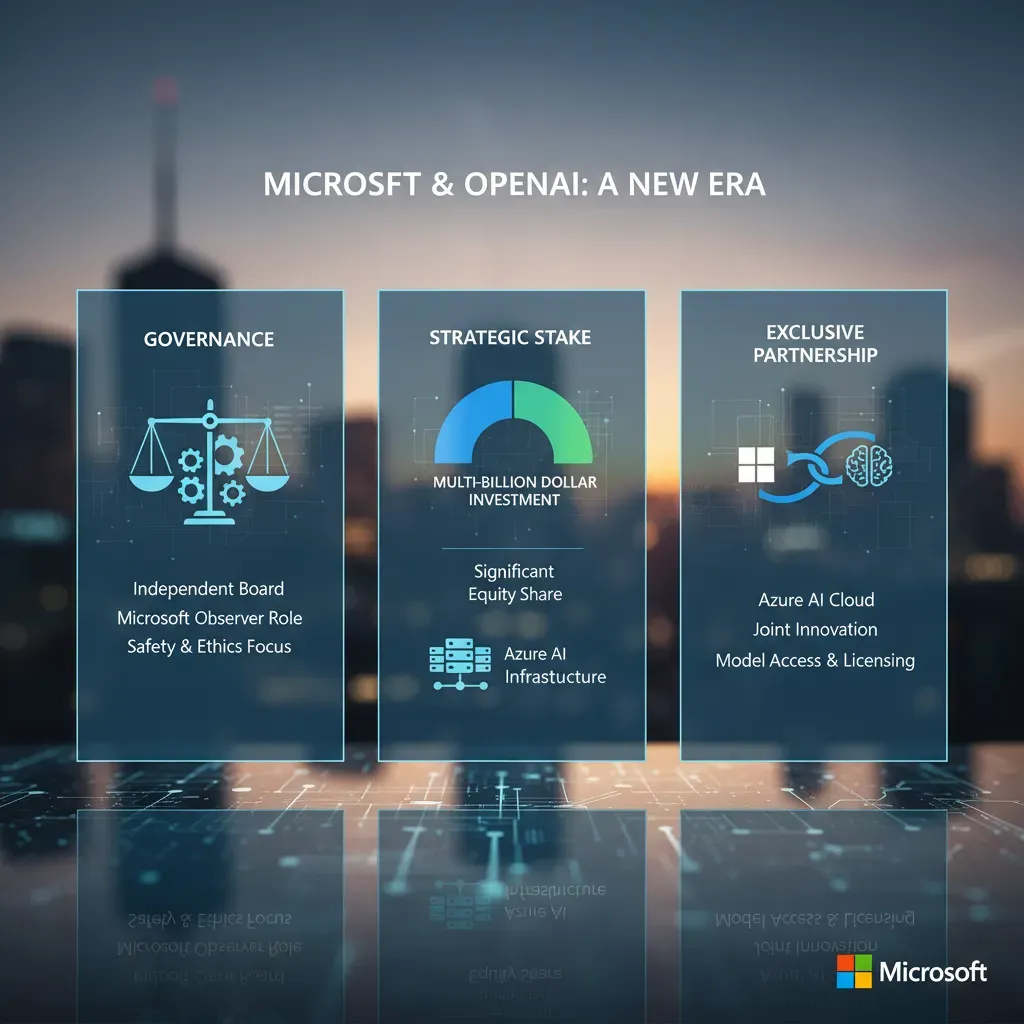

When I look at the Microsoft–OpenAI relationship this month, I start with the big financial and governance picture. After OpenAI’s recapitalization, Microsoft holds roughly a 27% stake in OpenAI Group PBC, a holding Microsoft valued at around $135 billion. For anyone tracking AI as a business, that number matters because it frames how much Microsoft can gain (or lose) as OpenAI’s models move into more products and more markets.
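As a quick sanity check on those numbers, the sketch below backs out the whole-company value implied by a stake. It assumes the roughly $135 billion figure describes the value of Microsoft's 27% holding (which is how Microsoft's announcement framed it) rather than OpenAI as a whole. The function name is mine, for illustration only.

```python
def implied_company_value(stake_value_busd: float, stake_fraction: float) -> float:
    """Return the whole-company value (in $ billions) implied by a stake."""
    if not 0 < stake_fraction <= 1:
        raise ValueError("stake_fraction must be in (0, 1]")
    return stake_value_busd / stake_fraction


# Assumption: $135B is the value of the 27% stake, not of the whole company.
value = implied_company_value(135.0, 0.27)
print(f"Implied company value: ~${value:.0f}B")
```

Under that reading, the stake implies a company worth roughly half a trillion dollars, which is a useful baseline for the IPO numbers later in this post.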

Azure exclusivity: the infrastructure tether

On the commercial side, the key point is that OpenAI remains Microsoft’s frontier model partner and Azure keeps API exclusivity until AGI is declared. In simple terms, Microsoft’s cloud is still the channel through which developers and enterprises reach OpenAI’s models via API, which keeps Microsoft closely tied to how those capabilities are sold and integrated.

- Why it matters: exclusivity can drive Azure usage, enterprise deals, and product integration.

- What to watch: how long these terms hold as model capability and regulation evolve.

IP rights extended through 2032

Another major update is that IP rights were extended to Microsoft through 2032. This includes rights that can apply even to post-AGI models, but with safety guardrails attached. I see this as a big deal for long-term planning: it supports Microsoft’s ability to keep building AI features into Windows, Copilot experiences, developer tools, and Azure services without constantly renegotiating core access.

| Topic | What changed |
| --- | --- |
| Stake | Microsoft at roughly 27% of OpenAI Group PBC |
| Exclusivity | OpenAI stays Microsoft’s frontier model partner; Azure keeps API exclusivity |
| IP timeline | Rights extended through 2032 |

AGI declaration: a formal verification step

Finally, there’s a clearer process for an AGI declaration. If OpenAI claims AGI, an independent expert panel will verify it. That matters because an AGI trigger changes how exclusivity and rights evolve—so this isn’t just technical; it’s governance that directly affects AI access and control.
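To make the trigger logic concrete, here is a minimal sketch of which terms would still apply in a given year. It encodes only what this section describes: IP rights through 2032 (surviving an AGI declaration) and a panel-verified declaration as the trigger. The rule that Azure API exclusivity ends once AGI is verified is my reading of the public announcement, not contract text, and every name in the code is hypothetical.

```python
from dataclasses import dataclass

IP_RIGHTS_END_YEAR = 2032  # the extended IP term described in this section


@dataclass(frozen=True)
class PartnershipState:
    year: int
    agi_claimed: bool = False     # OpenAI has declared AGI
    panel_verified: bool = False  # the independent expert panel agrees

    @property
    def agi_in_effect(self) -> bool:
        # A claim alone changes nothing; verification is the trigger.
        return self.agi_claimed and self.panel_verified


def active_terms(state: PartnershipState) -> dict[str, bool]:
    """Return which headline terms are still in force for this state."""
    return {
        # Assumption: Azure API exclusivity lasts until AGI is verified.
        "azure_api_exclusivity": not state.agi_in_effect,
        # IP rights run through 2032, including for post-AGI models.
        "microsoft_ip_rights": state.year <= IP_RIGHTS_END_YEAR,
    }


claimed_only = PartnershipState(year=2027, agi_claimed=True)
verified = PartnershipState(year=2027, agi_claimed=True, panel_verified=True)
print(active_terms(claimed_only))
print(active_terms(verified))
```

The point of the toy model is that an unverified claim leaves both terms untouched, while a verified one flips exclusivity but not the IP rights.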

Microsoft product push: Microsoft 365 Copilot, WorkIQ system and Agent 365

This month, Microsoft’s AI story felt less like “one new model” and more like a full enterprise product push. In my day-to-day work, that matters because most teams don’t just need smarter chat—they need control, visibility, and repeatable workflows inside Microsoft 365.

My “between meetings” demo of Agent 365

I haven’t had hands-on time with Agent 365, so between meetings I sketched out what a demo might look like. The promise is clear: centralized agent management plus observability for enterprises. I picture a single place where IT and security teams can see what agents exist, what data they touch, and how they perform, without chasing settings across multiple tools.

WorkIQ, Agent 365, and App Builder: built for corporate AI

Microsoft also launched WorkIQ, Agent 365, and App Builder aimed at corporate productivity and agent orchestration. To me, this signals a focus on the “plumbing” that companies actually need: routing tasks, connecting to business apps, and keeping agents consistent across departments.

- WorkIQ system: positioned around enterprise workflow intelligence and inference pathways (including WorkIQ inference).

- Agent 365: a management layer for deploying and monitoring multiple agents.

- App Builder: a faster way to package internal tools and agent-powered apps for Microsoft 365 users.

A standards play: Model Context Protocol (MCP)

There’s also a practical standards move: Microsoft partnered with Anthropic on the Model Context Protocol (MCP), an open standard for connecting agents to tools and data sources, which also makes hand-offs between agents easier. For enterprise deployments, this is the unglamorous but important part: agents need a shared way to pass context, tools, and permissions safely.

In plain terms: better “hand-offs” between agents means fewer broken workflows and less custom glue code.
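For a feel of what a shared hand-off format looks like, here is a sketch of an MCP-style tool call. MCP messages are JSON-RPC 2.0, and `tools/call` is the method a client uses to invoke a tool exposed by a server. The tool name `lookup_invoice` and its arguments are made up for illustration, and nothing here contacts a real server.

```python
import json
from itertools import count

_request_ids = count(1)  # simple incrementing JSON-RPC request ids


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking a server to run one tool."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool and arguments, purely for illustration.
message = build_tool_call("lookup_invoice", {"invoice_id": "INV-001"})
print(message)
```

Because every agent and tool speaks the same envelope, swapping one tool for another changes the `name` and `arguments`, not the glue code around them.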

Why Azure exclusivity still matters

This product push ties back to Azure exclusivity. If a company builds on Microsoft’s stack, it gets integrated agent tooling and inference pathways (like WorkIQ inference) that can reduce setup time and simplify governance inside Microsoft 365 Copilot environments.

OpenAI’s bets: audio focus, IPO whispers and PBC status

Why I’m thinking “podcast-first” AI

I keep picturing a podcast-forward future. OpenAI’s push into audio feels like a deliberate move toward screenless experiences, where AI fits into daily life without demanding my full attention. Instead of reading long replies, I can imagine listening to summaries, asking follow-up questions, and getting real-time help while I’m walking, cooking, or commuting.

The “war on screens” and where AI shows up next

Reports suggest OpenAI is betting big on audio capabilities amid a wider Silicon Valley “war on screens.” If that trend holds, it could reshape where AI models are used—less inside a browser tab, and more inside devices that already live around us.

- Phones: faster voice interactions for search, planning, and quick explanations

- Cars: hands-free help for navigation, messages, and trip context

- Speakers & earbuds: always-available Q&A, reminders, and learning on the go

For me, the key AI idea here is simple: audio makes the model feel like a companion, not a tool I have to “open.”

IPO chatter: big numbers, long timeline

There’s also IPO chatter. Some reports claim OpenAI is preparing for a potential IPO, with valuation talk running as high as $1 trillion and a possible filing as early as the second half of 2026. I treat this as “watch, don’t assume,” but it matters because an IPO path can change priorities: more pressure for predictable revenue, clearer product lines, and tighter messaging around risk.

PBC status, board structure, and why governance matters

OpenAI’s public benefit corporation (PBC) status and board structure are part of the governance story. In plain terms, it affects how OpenAI explains commercial deals, how it balances profit with public benefit, and how it rationalizes decisions around IP, partnerships, and safety commitments.

Audio growth, IPO rumors, and governance choices all point to the same question: where does OpenAI want AI to live, and who is it ultimately accountable to?

Competitive landscape & predictions: Gemini, market share and where AI is headed in 2026

Gemini’s biggest advantage: distribution

I’m watching Google’s Gemini integration into Search and Android as the biggest consumer-facing push that could chip away at OpenAI’s user base in 2026. When AI is placed directly inside the tools people already open every day—Search, Chrome, Android, and Google apps—usage can grow without users “choosing” a new product. That kind of default access is hard to match, even if competing models are strong.

What analysts are really saying about market share

Analysts expect Gemini to gain consumer share via deep integration. To me, that doesn’t mean OpenAI disappears—it means consumer traffic and platform leverage get reshuffled. If Google answers more questions inside Search with Gemini, fewer people may click out to standalone chat apps. That changes who owns the user relationship, the data signals, and the ad or subscription funnel.

Deep integration rarely “wins” because it’s better; it often wins because it’s already there.

Microsoft’s enterprise moat vs. consumer churn

Microsoft’s corporate bet may insulate enterprise revenue even if consumer attention shifts. I see a possible bifurcation forming:

- Consumer AI: fast switching, driven by defaults (Search/OS), price, and convenience.

- Corporate AI: slower switching, driven by compliance, procurement, and integration with Microsoft 365, Azure, and security tooling.

In other words, consumer model competition can stay noisy while the corporate AI market consolidates around a few trusted platforms.

Where AI is headed in 2026: capability up, impact slower

My macro prediction is that model capability will keep accelerating, but many forecasts still point to modest near-term economic impacts by 2026. Adoption and measurable productivity gains often lag behind capability because companies need training, process changes, and governance.

| Trend | What I expect by 2026 |
| --- | --- |
| Model quality | Better reasoning, multimodal, lower cost per task |
| Consumer share | More split across ecosystems (Google/Apple/Microsoft/OpenAI) |
| Business impact | Real, but uneven; strongest in support, coding, and content ops |

IP, revenue share and AGI safety: legal strings attached

When I read the latest details around OpenAI and Microsoft, I like to think of the IP clauses as strings that tie research, compute, and profit together. Those strings matter because they shape what “AI innovation” looks like in real products, not just in labs. A key part of that knot is Microsoft’s extended IP rights through 2032, which helps explain why the partnership still looks so tightly linked even as the broader AI market gets more competitive.

API exclusivity vs. non-API flexibility

One nuance I keep coming back to is how the agreement draws a line between API products and non-API products. OpenAI can now jointly develop some products with third parties, but API products built that way stay exclusive to Azure, while non-API products can be served on any cloud. That sounds simple, but it creates a practical boundary: if you’re building a service that depends on an API distribution model, Azure is the default lane; if you’re packaging something differently, you may have more hosting options.
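The boundary is easy to state as a rule, so here is a minimal sketch of it. It captures only the split described above (API products on Azure, non-API products on any cloud). The cloud list, the class, and the example products are hypothetical, and a real contract will have more conditions than one boolean.

```python
from dataclasses import dataclass

# Illustrative set of clouds; not an official or exhaustive list.
ALL_CLOUDS = frozenset({"azure", "aws", "gcp", "oci"})


@dataclass(frozen=True)
class Product:
    name: str
    is_api_product: bool  # distributed to developers as an API?


def allowed_clouds(product: Product) -> frozenset[str]:
    """Return where a product may be hosted under the API/non-API split."""
    if product.is_api_product:
        return frozenset({"azure"})  # API exclusivity stays with Azure
    return ALL_CLOUDS                # non-API products can go anywhere


# Hypothetical products, for illustration only.
for p in (Product("partner-model-api", True), Product("voice-gadget-app", False)):
    print(p.name, sorted(allowed_clouds(p)))
```

The useful habit for builders is to classify a product as API or non-API early, because that one choice narrows or widens the hosting options.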

AGI safety and long-term competition

Another clause that stands out is the idea that Microsoft can pursue AGI independently, alone or with third parties. If it uses OpenAI IP to do so before AGI is declared, the resulting models are subject to compute thresholds, reportedly set well above the size of systems being trained today. I see this as both a safety and competition lever. It sets conditions around how far and how fast certain work can scale, while also leaving room for Microsoft to keep moving even if the partnership dynamics change over time.

The “matrix” builders must navigate

For teams building consumer or enterprise AI, the real challenge is that revenue share, third-party product rules, and IP rights don’t operate separately—they interact.

- Revenue share influences pricing and margins for AI features.

- Third-party rules shape who can package, resell, or embed models.

- IP rights determine what can be reused, where it can run, and for how long.

To me, this is the legal layer of AI: it quietly decides which products ship, which clouds win workloads, and how profits get split.

Human impact, economics and the audio shift

Voice-first AI feels like the most human interface

I often imagine my grandparents using AI over voice in a simple kitchen device: asking for a recipe swap, a medication reminder, or help reading a label. That’s why OpenAI’s growing focus on audio matters to me. It pushes AI toward a human-first path where you don’t need perfect typing, app switching, or even a screen. In day-to-day work, the same shift can reduce friction—quick spoken summaries, meeting notes, and “do this next” prompts that feel more natural than another dashboard.

Big capability, slower economics

Even with rapid progress in AI models, many analysts still expect modest macroeconomic impact by 2026. I think that’s realistic. Adoption takes time, and the hard parts are not the demos—they’re the integration costs, data cleanup, security reviews, and regulatory friction. In other words, AI can be ready before organizations are ready.

- Adoption lag: teams need training and new workflows

- Integration costs: connecting AI to real systems is expensive

- Compliance: privacy and industry rules slow rollouts

Corporate productivity: ROI beats feature lists

On the corporate side, productivity gains look promising through Microsoft 365 Copilot and newer agent tooling. I’m watching one thing more than any feature announcement: measurable ROI at scale. It’s easy to save five minutes in a pilot; it’s harder to prove sustained gains across thousands of employees without creating new risks or extra review work.
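To show why pilot savings and ROI at scale can diverge, here is a back-of-the-envelope sketch. Every number in it (headcount, minutes saved, review overhead, hourly cost, license price) is a placeholder I made up, not a Microsoft figure, and the formula is a simple (benefit - cost) / cost ratio rather than any official methodology.

```python
def monthly_roi(
    employees: int,
    minutes_saved_per_day: float,
    review_minutes_per_day: float,
    hourly_cost: float,
    license_cost_per_user_month: float,
    workdays_per_month: int = 21,
) -> float:
    """Return monthly ROI as (benefit - cost) / cost.

    Benefit counts only net time saved: minutes saved minus the extra
    minutes spent reviewing AI output.
    """
    cost = employees * license_cost_per_user_month
    if cost <= 0:
        raise ValueError("total license cost must be positive")
    net_hours = (minutes_saved_per_day - review_minutes_per_day) / 60 * workdays_per_month
    benefit = employees * net_hours * hourly_cost
    return (benefit - cost) / cost


# Hypothetical inputs: same five-minute saving, with and without review overhead.
pilot = monthly_roi(1000, 5, 0, hourly_cost=60, license_cost_per_user_month=30)
at_scale = monthly_roi(1000, 5, 4, hourly_cost=60, license_cost_per_user_month=30)
print(f"pilot ROI: {pilot:.2f}, at-scale ROI: {at_scale:.2f}")
```

With these made-up inputs, four minutes of daily review work is enough to turn a clearly positive return into a negative one, which is why the review overhead deserves as much attention as the headline time saving.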

WorkIQ inference and the quiet power of observability

Tools like WorkIQ inference and agent observability can improve operations by showing what agents did, why they did it, and where they fail. I’ll flag that observability is often the unsung hero of reliable agent deployment—without it, “AI productivity” can turn into hidden costs.

“If you can’t measure it, you can’t manage it”—and that’s especially true for AI agents.
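As a rough illustration of what "what agents did, why they did it, and where they fail" means in practice, here is a tiny in-memory audit log. It is a generic sketch, not the Agent 365 or WorkIQ API, and the agent names and events are invented.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentEvent:
    agent: str
    action: str  # what the agent did
    reason: str  # why it did it
    ok: bool     # whether the action succeeded


@dataclass
class AgentLog:
    events: list[AgentEvent] = field(default_factory=list)

    def record(self, agent: str, action: str, reason: str, ok: bool) -> None:
        self.events.append(AgentEvent(agent, action, reason, ok))

    def failure_rates(self) -> dict[str, float]:
        """Return the share of failed actions per agent."""
        totals = Counter(e.agent for e in self.events)
        failures = Counter(e.agent for e in self.events if not e.ok)
        return {agent: failures[agent] / totals[agent] for agent in totals}


# Invented events, for illustration only.
log = AgentLog()
log.record("support-bot", "refund_issued", "order arrived damaged", ok=True)
log.record("support-bot", "refund_issued", "duplicate charge", ok=False)
log.record("scheduler", "meeting_booked", "customer requested demo", ok=True)
print(log.failure_rates())
```

Even a log this small answers the question a pilot usually cannot: which agent is quietly failing, and how often.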

Wild card & thought experiment: AGI, timelines and a short vignette

When I read the latest AI updates from OpenAI, Google, and Microsoft, I keep coming back to one wild card: what if OpenAI declares AGI in 2027 and the expert panel verifies it? Not “AGI vibes,” but a formal label that triggers contracts, governance rules, and market reactions. If that happens, I expect exclusivity deals and revenue-share terms to suddenly matter more than raw model quality. A partner that once paid for priority access might find the incentives flipped overnight: the safest move could become spreading risk across vendors, or locking down distribution before new restrictions kick in.

A short vignette: Agent 365 meets the real world

Imagine a small startup that runs lean: five humans, and “Agent 365,” a Microsoft-style agent stack that schedules meetings, drafts proposals, handles support tickets, and even negotiates ad buys. The team is remote, the margins are thin, and the AI does the heavy lifting. Then a big customer asks for a cloud switch for compliance reasons. The startup tries to move from one provider to another, assuming it’s like changing email hosts.

That’s when the trouble starts. Their prompts, tool integrations, and fine-tuned workflows are tied to one ecosystem. Worse, the contract language around IP ownership and “derived artifacts” is fuzzy. The startup learns that portability is not just technical; it’s legal. They spend weeks rewriting automations, re-approving data flows, and proving that confidential customer data never trained anything it shouldn’t. The customer pauses the deal.

The plumbing analogy (contracts decide where value flows)

I think of model contracts like the plumbing in a house: you don’t notice them until water backs up. These legal pipes determine where value flows—who owns outputs, who can reuse data, and who gets paid when AI work scales.

My practical takeaway as I close this April AI roundup: keep an eye on IPO timing, IP clauses, and cross-platform portability. In the next phase of AI, those knobs—not just benchmarks—may decide the winners and losers.

TL;DR: Microsoft’s deeper OpenAI tie-up reshapes governance and IP; Microsoft rolls out corporate AI tools (WorkIQ, Agent 365); OpenAI bets on audio and eyes a 2026 IPO; Google’s Gemini threatens consumer share. Expect steady technical advances, guarded AGI rules, and modest near-term economic shifts.