Last spring I watched a “simple” campaign launch turn into a five-tab spreadsheet argument, again. The weird part: our creative was solid and our audience was right, but ops was the bottleneck. I finally let AI touch the unglamorous parts (naming conventions, UTM hygiene, creative versions, budget pacing), and the work got… quieter. Fewer panic pings. Faster decisions. The kind of calm that usually means something is either fixed or about to fail spectacularly. Here’s what changed, what didn’t, and the real results I’d bet on in 2026.

The day I stopped “shipping” and started operating (Marketing Trends 2026)

I used to think my job was to ship campaigns. That meant late-night launch checklists, Slack pings at 11:47 PM, and a “we’ll fix it tomorrow” mindset. My calendar was a loop of handoffs: creative to web, web to analytics, analytics to paid, paid back to me. The work got done, but it never felt stable.

My before/after moment came when I started using AI inside marketing operations, not just for copy. Instead of me being the human router, AI became the handoff layer. I stopped “shipping” like every launch was a one-time event and started operating like we were running a system.

Where AI helped first: the unglamorous parts

Based on what I saw in How AI Transformed Marketing Operations: Real Results, the fastest wins weren’t flashy. They were the boring steps that used to break at scale.

- Briefs: AI turned messy notes into a clean brief with goals, audience, channels, and constraints. It also flagged missing inputs (like “no offer defined”).

- QA: It checked landing pages for broken links, missing UTMs, inconsistent headlines, and form errors before I ever clicked “publish.”

- Tagging: It suggested a naming pattern and applied it across campaigns so reporting didn’t become a guessing game.

- Status reporting: It summarized what shipped, what was blocked, and what needed approval—without me writing a novel in Slack.
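The QA and tagging checks above can be sketched in a few lines. This is a minimal illustration, not the tool described in the post: the required keys and the lowercase-with-underscores pattern are assumptions standing in for whatever naming convention a team agrees on, and `check_utms` is a hypothetical helper name.

```python
import re
from urllib.parse import urlparse, parse_qs

# Assumed convention: these three keys are required, and values use
# lowercase letters, digits, and underscores only.
REQUIRED_UTMS = ("utm_source", "utm_medium", "utm_campaign")
VALUE_PATTERN = re.compile(r"[a-z0-9_]+")


def check_utms(url: str) -> list[str]:
    """Return a list of human-readable problems found in a landing-page URL."""
    params = parse_qs(urlparse(url).query)
    problems = []
    for key in REQUIRED_UTMS:
        values = params.get(key)
        if not values:
            problems.append(f"missing {key}")
        elif not VALUE_PATTERN.fullmatch(values[0]):
            problems.append(f"{key} breaks naming pattern: {values[0]!r}")
    return problems


print(check_utms("https://example.com/?utm_source=newsletter&utm_medium=Paid+Social"))
```

An empty list means the link is safe to publish; anything else goes back to whoever owns the campaign before launch.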

Why I treat ops like a product now

The shift was simple: I stopped treating ops as a pile of tasks and started treating it like a product with users (my team), a roadmap (what we automate next), and quality standards (what “done” means). If a process only works when I’m online, it’s not a process—it’s a dependency.

“If it can’t be handed off cleanly, it isn’t ready to scale.”

Wild-card aside: the campaign that improved after I deleted half the dashboard widgets

I once had a dashboard with everything: CTR, CPC, ROAS, scroll depth, heatmaps, time on page—dozens of widgets. AI kept summarizing the same truth: we were drowning in signals. I deleted half the widgets and kept a short list: pipeline impact, CAC trend, conversion rate, and creative fatigue. The campaign improved because decisions got faster, and we argued less about numbers that didn’t change actions.

ROI-critical marketing: the scoreboard I trust (and the one I ignore)

In 2026, I treat AI marketing like any other ops system: if it doesn’t move the business, it’s noise. After seeing how AI transformed marketing operations in real teams, I stopped chasing “AI wins” and started tracking AI marketing ROI the same way finance does—through revenue, margin, and throughput.

What I measure now (the scoreboard I trust)

My core scoreboard is simple: did AI help us earn more, keep more, or ship more work with the same team? That means I tie AI projects to:

- Revenue impact: pipeline created, qualified opportunities, and closed-won revenue influenced by AI-assisted campaigns.

- Margin impact: lower cost per qualified lead, reduced agency spend, fewer hours per asset, and less rework.

- Operational throughput: cycle time from brief → launch, number of experiments shipped per month, and time-to-update across channels.

When AI is working, I see faster production without quality drops, cleaner handoffs, and fewer “fire drills.” Those are operational wins that compound.

The metric trap: vanity lift vs sustained economic impact

I still look at CTR, engagement, and “lift,” but I don’t let them lead. A short-term spike can hide long-term damage: messy data, brand risk, or a workflow that breaks at scale. I’ve learned to ask: is this lift durable, or just a temporary bump?

“If the metric can go up while the business gets worse, it’s not a north star.”

My mini-framework: impact × repeatability × risk

Before I call an AI initiative a win, I score it with a quick framework:

- Impact: Does it move revenue, margin, or throughput in a measurable way?

- Repeatability: Can we run it weekly with the same quality, or is it a one-off hero effort?

- Risk: What’s the downside—compliance, brand tone, hallucinations, data leakage, or team overload?

If impact is high but repeatability is low, it’s a prototype, not an ops win. If risk is high, I require tighter review gates and clearer ownership.
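The scoring rules can be written down so the whole team applies them the same way. The sketch below is one possible encoding: the 1-to-5 scale, the threshold of 4, and the “not yet a win” label for low-impact work are assumptions added for illustration, and `Initiative` and `classify` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    impact: int         # 1-5: measurable effect on revenue, margin, or throughput
    repeatability: int  # 1-5: can it run weekly at the same quality?
    risk: int           # 1-5: compliance, brand tone, hallucinations, data leakage


def classify(item: Initiative, high: int = 4) -> str:
    """Apply the rules from the text; the scale and threshold are assumptions."""
    if item.impact < high:
        label = "not yet a win"
    elif item.repeatability < high:
        label = "prototype"
    else:
        label = "ops win"
    if item.risk >= high:
        label += " (needs tighter review gates and a clear owner)"
    return label


print(classify(Initiative("AI ad variations", impact=5, repeatability=2, risk=1)))
```

The point of the encoding is less the numbers than the ordering: impact is checked first, repeatability decides between prototype and win, and risk only adds review requirements.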

Tiny confession (the CTR win that cost us a month)

I once celebrated an AI-written ad set that boosted CTR fast. Then the cleanup hit: mismatched landing page promises, confused lead quality, and a reporting mess because tracking wasn’t standardized. We spent a month fixing workflows and re-tagging data. The lesson stuck: real ROI includes the cost of cleanup, not just the first-week chart.

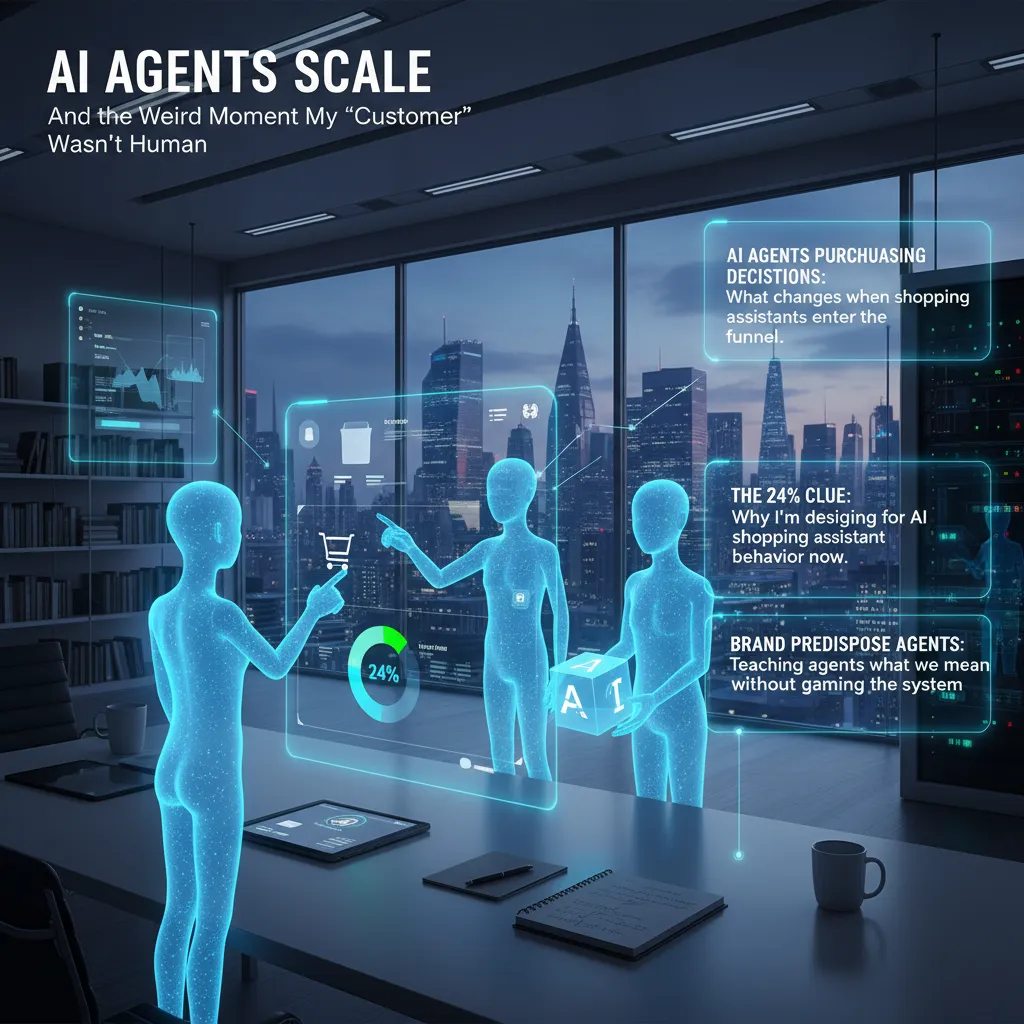

AI agents at scale (and the weird moment my “customer” wasn’t human)

I first noticed the shift when a “customer” asked questions that sounded like a checklist: shipping thresholds, return windows, warranty terms, and price-match rules—rapid-fire, perfectly structured. It didn’t feel like a person browsing. It felt like an agent gathering facts to make a decision on someone else’s behalf.

AI agents and purchasing decisions: what changes when shopping assistants enter the funnel

When an AI shopping assistant enters the funnel, the buyer journey gets shorter and more strict. The agent is not “inspired” by a hero banner. It is trying to reduce risk and effort. That changes what wins:

- Clarity beats cleverness: policies, pricing, and product specs need to be easy to extract.

- Consistency beats variety: the same claim must match across PDPs, FAQs, and support docs.

- Proof beats promises: reviews, certifications, and clear terms carry more weight than slogans.

The 24% clue: why I’m designing for AI shopping assistant behavior now

In the source material (“How AI Transformed Marketing Operations: Real Results”), one metric stuck with me: a 24% lift tied to operational improvements—faster execution, fewer handoffs, and cleaner data flows. I treat that as a clue. If ops gains can move results that much, then designing for agent behavior (which is basically “ops for buying”) can move results too.

So I’m updating content like it’s going to be read by both humans and machines: tighter product data, cleaner policy pages, and fewer “contact us for details” dead ends.

Predisposing agents toward the brand: teaching agents what we mean without gaming the system

I’m not trying to trick agents. I’m trying to make meaning unambiguous. That means:

- Using plain language definitions (e.g., what “free returns” really includes).

- Keeping one source of truth for policies and linking to it everywhere.

- Publishing structured details in FAQs and help docs so agents can quote them accurately.
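One way to keep a single source of truth is to store the policy as data and generate both the machine-readable and the plain-language versions from it. This is a sketch under assumptions: the field names and the 30-day values are hypothetical, not our actual policy, and `ReturnPolicy`, `as_json`, and `as_sentence` are illustrative names.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ReturnPolicy:
    window_days: int
    prepaid_label: bool
    restocking_fee_pct: float


# Hypothetical values for illustration only.
POLICY = ReturnPolicy(window_days=30, prepaid_label=True, restocking_fee_pct=0.0)


def as_json(policy: ReturnPolicy) -> str:
    """Machine-readable form an agent can parse."""
    return json.dumps(asdict(policy), sort_keys=True)


def as_sentence(policy: ReturnPolicy) -> str:
    """Plain-language form for the FAQ, generated from the same record."""
    if policy.prepaid_label:
        label = "a prepaid label is included"
    else:
        label = "return shipping is paid by the customer"
    if policy.restocking_fee_pct == 0:
        fee = "no restocking fee"
    else:
        fee = f"a {policy.restocking_fee_pct:g}% restocking fee"
    return f"Returns are accepted within {policy.window_days} days; {label}, with {fee}."


print(as_json(POLICY))
print(as_sentence(POLICY))
```

Because both outputs come from the same record, the FAQ sentence and the structured data cannot drift apart when the policy changes.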

My goal is simple: when an agent summarizes our offer, it should sound like us—and still be true.

A hypothetical scenario: an agent negotiates your return policy faster than your support team

Imagine this: a customer’s agent detects a sizing issue and opens a return request. It compares our policy to two competitors, then sends a message like:

Customer requests prepaid label + 45-day window. Competitor median: 45 days. Can you match?

If my support team takes 12 hours to respond but the agent expects an answer in minutes, the “experience” is no longer my email tone—it’s my policy speed. That’s the weird moment: my customer isn’t human, and my marketing now includes how well my rules can be understood and executed.

Connecting and collecting data: semantic layer agent dreams vs. my messy reality

In 2026, it’s easy to believe the fix for marketing ops is more data. That was my belief too. We added new sources, piped in more events, and stored everything “just in case.” The result was not clarity. It was louder confusion. Our dashboards didn’t match, teams argued in meetings, and every report came with a footnote like, “depends how you define lead.” The real shift happened only after we stopped chasing volume and started fixing meaning.

Why “more data” didn’t help until we fixed meaning

From the source material, the biggest ops wins came when we treated data like a product: consistent definitions, shared rules, and fewer “mystery metrics.” We had the same numbers flowing through CRM, ads, web analytics, and email—but each tool labeled them differently. So “MQL” in one place meant “form fill,” and in another it meant “sales accepted.” No AI could save that.

- More rows increased noise.

- More tools increased mismatch.

- More automation increased the speed of wrong answers.

Semantic layer agent: the shared language that kept our dashboards from arguing

Our breakthrough was building a simple semantic layer and letting a semantic layer agent enforce it. Think of it as a translator that sits between raw data and every dashboard, model, and AI assistant. It didn’t “invent insights.” It made sure everyone asked questions using the same dictionary.

“If the definition is stable, the metric becomes trustworthy.”

We documented core terms (lead, MQL, pipeline, revenue) and mapped them to one set of fields. The agent flagged conflicts, suggested merges, and pushed updates to our BI and reporting views.
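The conflict-flagging step is the easiest part to picture in code. The sketch below assumes each tool's definitions have been exported into a simple dictionary; the tool names and the `find_conflicts` helper are hypothetical, and the MQL meanings are the two mentioned earlier.

```python
# Hypothetical export of how each tool defines the same terms.
TOOL_DEFINITIONS = {
    "crm": {"MQL": "sales accepted", "lead": "any new contact"},
    "web_analytics": {"MQL": "form fill", "lead": "any new contact"},
}


def find_conflicts(definitions: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """Return terms whose meaning differs between tools, with each tool's version."""
    by_term: dict[str, dict[str, str]] = {}
    for tool, terms in definitions.items():
        for term, meaning in terms.items():
            by_term.setdefault(term, {})[tool] = meaning
    return {
        term: versions
        for term, versions in by_term.items()
        if len(set(versions.values())) > 1
    }


print(find_conflicts(TOOL_DEFINITIONS))
```

Here only MQL is flagged, because “lead” means the same thing in both tools. Deciding which definition wins is still a human call.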

Orchestrator agent coordination: specialized bots without chaos

Once definitions were stable, we added orchestrator agents to coordinate work. One agent checked data freshness, another validated campaign tagging, and another generated weekly performance notes. The orchestrator handed tasks off in order, so we didn’t get three bots “fixing” the same thing differently.

- Validate inputs (naming, UTMs, IDs)

- Apply semantic rules (definitions, joins)

- Publish outputs (dashboards, alerts, summaries)
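The three steps above can be sketched as an ordered pipeline where each step receives the output of the previous one. This is a simplified illustration, not our production setup: the step bodies are stand-ins, and the field names (`name`, `utm_campaign`, `rev`) are hypothetical.

```python
from typing import Callable

Step = Callable[[dict], dict]


def validate_inputs(ctx: dict) -> dict:
    # Stand-in check: every campaign needs a name and a UTM campaign value.
    missing = [c for c in ctx["campaigns"] if not c.get("name") or not c.get("utm_campaign")]
    if missing:
        raise ValueError(f"{len(missing)} campaign(s) failed input validation")
    return ctx


def apply_semantic_rules(ctx: dict) -> dict:
    # Stand-in rule: map a hypothetical legacy field onto the shared definition.
    for c in ctx["campaigns"]:
        if "rev" in c:
            c["booked_revenue"] = c.pop("rev")
    return ctx


def publish_outputs(ctx: dict) -> dict:
    # Stand-in output: a one-line summary instead of a dashboard refresh.
    ctx["summary"] = f"{len(ctx['campaigns'])} campaign(s) published"
    return ctx


PIPELINE: list[Step] = [validate_inputs, apply_semantic_rules, publish_outputs]


def run(ctx: dict) -> dict:
    """Run each step in order; a failure stops the chain before later steps act."""
    for step in PIPELINE:
        ctx = step(ctx)
    return ctx


result = run({"campaigns": [{"name": "spring", "utm_campaign": "spring_launch", "rev": 1200}]})
print(result["summary"])
```

The fixed order is the design choice that matters: nothing gets published from inputs that failed validation, and only one step is allowed to touch definitions.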

Small tangent: I renamed three fields and suddenly everyone trusted the numbers

This sounds silly, but it was real. I renamed:

- lead_status → lifecycle_stage

- conv → primary_conversion

- rev → booked_revenue

Overnight, fewer questions. Same data, clearer meaning. That’s the messy reality behind “AI marketing trends”: trust starts with language.

Agentic optimization recommendations: my new relationship with real-time campaign testing

In 2026, agentic AI optimization changed how I run campaigns day to day. Instead of me “setting and forgetting,” the model watches performance signals in real time and recommends actions I can approve. The biggest shift is pacing: I no longer push budget increases based on gut feel. I let the agent propose small, frequent moves, then I review the “why” behind each change. That alone reduced wasted spend and made our marketing operations feel calmer and more controlled.

How agentic AI changed pacing, creative rotation, and audience rules

My old workflow was weekly: adjust budgets, swap ads, tweak targeting. Now it’s continuous. The agent flags when spend is too fast for the learning window, when frequency is rising, or when a segment is saturating.

- Pacing: I use guardrails like max +15% budget/day unless we hit a clear efficiency threshold.

- Creative rotation: The agent rotates ads based on early attention signals (thumb-stop rate, hold time) before conversion data is stable.

- Audience rules: We moved from rigid “include/exclude” lists to dynamic rules (e.g., suppress recent buyers for 14 days, expand lookalikes only when CPA is stable).
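Two of these guardrails are simple enough to express directly. The sketch below uses the +15% daily cap and the 14-day buyer suppression from the list; the function names and the `efficiency_ok` flag are hypothetical, and how the efficiency threshold is computed is left out on purpose.

```python
from datetime import date, timedelta
from typing import Optional

MAX_DAILY_INCREASE = 0.15  # the +15% budget/day guardrail
SUPPRESS_DAYS = 14         # suppress recent buyers for 14 days


def next_budget(current: float, proposed: float, efficiency_ok: bool = False) -> float:
    """Cap a proposed increase at +15% unless the efficiency threshold was hit."""
    if efficiency_ok or proposed <= current:
        return proposed
    cap = round(current * (1 + MAX_DAILY_INCREASE), 2)
    return min(proposed, cap)


def is_suppressed(last_purchase: Optional[date], today: date) -> bool:
    """True while a buyer is still inside the suppression window."""
    if last_purchase is None:
        return False
    return today - last_purchase < timedelta(days=SUPPRESS_DAYS)


print(next_budget(1000.0, 1500.0))
print(is_suppressed(date(2026, 1, 1), date(2026, 1, 10)))
```

Decreases pass through untouched, so the cap only slows spending up, never cutting back.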

Real-time campaign testing: what we test weekly (and what we stopped testing)

Real-time campaign testing works best when the tests are repeatable. Each week, I run a small set of experiments the agent can learn from quickly:

- Weekly tests: first-line hook, offer framing, landing page headline, and one new creative format (UGC vs. product demo).

- We stopped testing: tiny audience splits and micro-bid tweaks. The agent handles those better than I do, and the results were noisy.

When to overrule the model (yes, sometimes you should)

I overrule the model when business context matters more than short-term metrics. Examples: inventory limits, brand safety, legal claims, or a planned promo date. I also pause automation if tracking breaks. My rule is simple: if the data is incomplete, the recommendation is incomplete.
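Those override rules can sit in front of every recommendation as a small gate. This is a sketch, assuming the context arrives as a set of string flags; the flag names and `review_recommendation` are hypothetical.

```python
# Business-context reasons that send a recommendation to a human (from the text).
OVERRIDE_FLAGS = {"inventory_limit", "brand_safety", "legal_claim", "planned_promo"}


def review_recommendation(action: str, tracking_ok: bool, context_flags: set[str]) -> str:
    """Decide whether an agent's recommendation is applied, reviewed, or paused."""
    if not tracking_ok:
        # Incomplete data means an incomplete recommendation.
        return "pause automation"
    hits = sorted(context_flags & OVERRIDE_FLAGS)
    if hits:
        return "human review: " + ", ".join(hits)
    return f"apply: {action}"


print(review_recommendation("raise budget 10%", tracking_ok=True, context_flags={"planned_promo"}))
```

Broken tracking is checked first because it invalidates everything downstream, including the decision about whether context matters.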

Mini case note: the ad that “lost” for 48 hours and then became the winner

One creative looked terrible for two days—high CPA, low conversion rate. The agent recommended cutting it. I held it at a low cap because comments showed strong intent, and the clicks were coming from a new segment. After 48 hours, the learning stabilized, the audience match improved, and it became our top performer. That moment taught me to treat agentic AI as a fast analyst—not the final decision-maker.

Generative AI authenticity: the line between helpful and hollow (plus Super Bowl math)

In 2026, I’m still bullish on generative AI in marketing, but only when it earns trust. From what I’ve seen in How AI Transformed Marketing Operations: Real Results, the biggest wins came when AI supported the work, not when it tried to be the work. That difference is what separates helpful from hollow, and it’s now a core part of my 2026 AI marketing playbook.

Where generative AI marketing helped: production-scale operations

Generative AI shines in marketing operations when the goal is speed, consistency, and volume. I use it to draft first versions of emails, landing page sections, ad variations, and internal briefs—then my team edits with real customer language. This is where AI content creation at production scale actually improves ROI: faster cycles, more tests, and fewer bottlenecks. It also helps with repurposing, like turning one webinar into a blog outline, social captions, and nurture copy without starting from zero.

Where it hurt: creative that felt like it was made for no one

The downside shows up when teams let AI “finish” the creative. I’ve watched campaigns go live with copy that was technically correct but emotionally empty. It didn’t sound like our brand, and it didn’t sound like our customers either. The result wasn’t just lower engagement—it was a quiet loss of trust. If a message feels like it could be for anyone, it lands like it’s for no one.

The 50% Super Bowl prediction (and the math behind the signal)

There’s a growing prediction that 50% of Super Bowl ads will use generative AI in some form. Whether the exact number is right or not, the signal matters. A 30-second Super Bowl spot can cost roughly $7M for media alone. If AI helps a brand create, test, and localize more versions before spending that money, even a small lift is huge. For the rest of us, the lesson is simple: use AI to increase learning speed, not to flood channels with average content.
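The arithmetic behind “even a small lift is huge” is worth making explicit. The sketch below uses the rough $7M media cost from above; the 1%, 2%, and 5% lifts are hypothetical inputs chosen for illustration, not measured results, and `lift_value` is an illustrative name.

```python
MEDIA_COST = 7_000_000  # rough cost of a 30-second spot, media only


def lift_value(lift_pct: float, media_cost: int = MEDIA_COST) -> float:
    """Media-equivalent value of a relative effectiveness lift."""
    return media_cost * lift_pct / 100


for pct in (1, 2, 5):  # hypothetical lifts, not measured results
    print(f"{pct}% lift is worth about ${lift_value(pct):,.0f} of media spend")
```

At that price, a 1% improvement in effectiveness is equivalent to about $70,000 of media, which is why pre-launch testing and versioning pay for themselves quickly at that scale.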

Creator investment marketing: pairing creators with AI

My conclusion for 2026 is clear: I’m investing more in creators, not less. I pair creators with AI so they can move faster—research, scripting, editing, and versioning—while keeping the human point of view that audiences trust. AI can scale output, but creators scale meaning. That’s where real ROI lives.

TL;DR: AI didn’t replace my team; it replaced our operational chaos. AI agents at scale, cleaner data connections, and agentic optimization recommendations delivered measurable lift, while generative AI authenticity and governance kept us from shipping nonsense.