Last year, I sat in a board meeting where someone asked, “So… are we *done* with AI yet?” Nobody laughed—because the pilot had eaten six months and we still couldn’t explain the ROI. That moment is why I keep a simple rule on my sticky note: if I can’t connect an AI use case to a real workflow, a named owner, and a risk decision, it’s not a strategy—it’s a science fair. In this guide, I’ll lay out the leadership moves that turn AI from scattered experiments into an AI-native organization: an AI governance framework that people actually follow, a federated governance model that doesn’t bottleneck, and an end-to-end approach from data platform readiness to MLOps security and continuous ROI measurement.

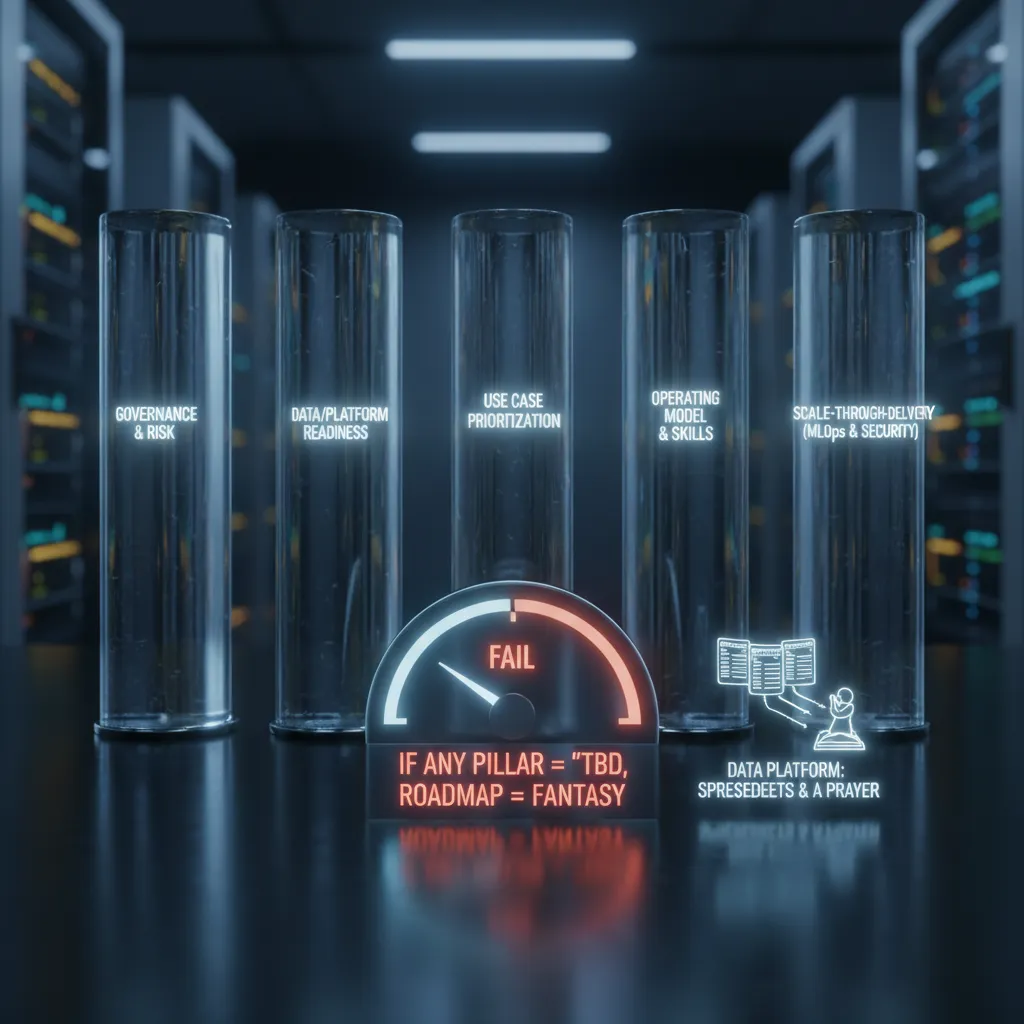

1) My “Five-Pillar” AI Strategy Alignment Test

When I build an AI strategy for 2026, I don’t start with tools or a flashy demo. I start with a simple alignment test built on five pillars. If we can’t speak clearly about each pillar, we don’t have a strategy yet—we have a wish.

The five pillars I check first

- Governance & risk: policies, approvals, privacy, model risk, and clear ownership.

- Data/platform readiness: data quality, access, pipelines, and the platform to run AI safely.

- Use case prioritization: a ranked list tied to business value, not internal hype.

- Operating model skills: roles, training, decision rights, and how teams work together.

- Scale-through-delivery (MLOps security): deployment, monitoring, drift checks, security, and repeatable delivery.

Here’s my quick self-check: if any pillar is “TBD,” the roadmap is fantasy—yes, even if the demo is dazzling. “TBD” usually means hidden work, hidden cost, and hidden risk. In leadership terms, it means you can’t promise outcomes with a straight face.
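That self-check is easy to automate against a roadmap document. Here is a minimal sketch in Python, assuming the roadmap is a plain dictionary with one status string per pillar; the key names and the `unresolved_pillars` helper are hypothetical, not part of any standard tool.

```python
# Hypothetical pillar keys; rename them to match your own roadmap template.
PILLARS = (
    "governance_risk",
    "data_platform_readiness",
    "use_case_prioritization",
    "operating_model_skills",
    "scale_through_delivery",
)


def unresolved_pillars(roadmap: dict[str, str]) -> list[str]:
    """Return pillars that are missing, empty, or still marked TBD."""
    gaps = []
    for pillar in PILLARS:
        status = roadmap.get(pillar, "").strip()
        if not status or status.upper() == "TBD":
            gaps.append(pillar)
    return gaps


# Illustrative roadmap: one pillar is TBD and one is missing entirely.
roadmap = {
    "governance_risk": "Federated model, monthly steering",
    "data_platform_readiness": "TBD",
    "use_case_prioritization": "Ranked backlog for next quarter",
    "operating_model_skills": "Product owners named",
}
gaps = unresolved_pillars(roadmap)
print(gaps)
```

A missing pillar is treated the same as a "TBD" one, because in both cases nobody can speak to it yet.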

A quick story from the field

One time, our best model failed in production even though the accuracy looked great in testing. The real issue wasn’t the model. Our data platform readiness was basically three spreadsheets and a prayer. Data definitions changed weekly, access was manual, and nobody could trace what fed the model. The result: broken outputs, lost trust, and a painful reset.

How I tie pillars to business alignment

I treat each pillar as a bridge to strategic business outcomes. Governance protects the brand and reduces risk. Data readiness reduces cycle time and rework. Use case prioritization focuses spend on measurable value. Skills and operating model reduce dependency on a few experts. MLOps security turns pilots into reliable delivery.

For me, the strategy lives in quarterly business outcomes measurement, not in a slide deck.

Wild-card analogy: AI strategy is like city planning—zoning (governance) and utilities (data) matter as much as buildings (use cases). If you skip the basics, the skyline may look nice, but nothing works.

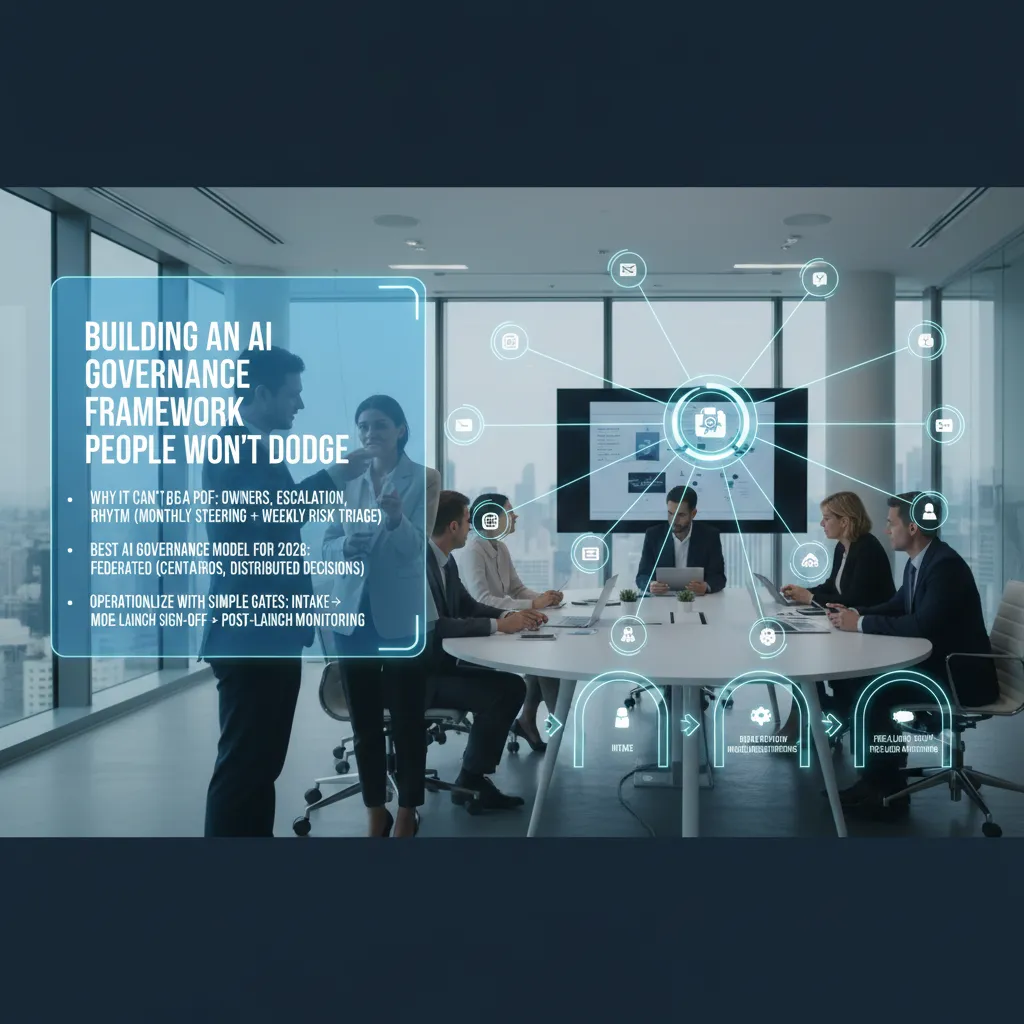

2) Building an AI Governance Framework People Won’t Dodge

When leaders tell me they “have an AI governance framework,” it is often a PDF that no one reads. In 2026, governance has to be operational: clear owners, clear escalation paths, and a steady rhythm. I run it like a product system, not a policy shelf. My default cadence is a monthly AI steering meeting for priorities and standards, plus a weekly risk triage to unblock teams and catch issues early.

Make governance real: owners + escalation + rhythm

If people can’t answer “who decides?” they will route around the process. I assign named owners for each gate, and I publish a simple escalation map so teams know where to go when they hit a wall.

- Owner: accountable person for the decision

- Escalation path: what happens when risk is unclear or deadlines are tight

- Rhythm: steering monthly, triage weekly

The best AI governance model for 2026: federated

The model that works best now is a federated AI governance model: a central team sets standards (security, privacy, model documentation, vendor rules), while decisions stay close to the use case in business units. This keeps speed high without losing control. Central sets the “guardrails,” local teams drive the “car.”

Operationalize responsible AI with simple gates

I keep the workflow short and repeatable. These gates make responsible AI feel like normal delivery:

- Intake: purpose, users, expected impact

- Data review: sources, consent, retention, sensitive fields

- Model risk tiering: low/medium/high based on harm potential

- Pre-launch sign-off: security, legal, and business owner approval

- Post-launch monitoring: drift, bias signals, incidents, user feedback
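The gates above can be sketched as an ordered checklist that refuses to skip steps. This is an illustration only: the `GATES` names and the `UseCase` class are assumptions I am introducing, and a real workflow tool would also record approvers and evidence.

```python
from dataclasses import dataclass, field
from typing import Optional

# Gate order mirrors the list above; the identifiers are hypothetical.
GATES = (
    "intake",
    "data_review",
    "risk_tiering",
    "pre_launch_signoff",
    "post_launch_monitoring",
)


@dataclass
class UseCase:
    name: str
    passed: list[str] = field(default_factory=list)

    def next_gate(self) -> Optional[str]:
        """Return the first gate not yet passed, or None when all are done."""
        for gate in GATES:
            if gate not in self.passed:
                return gate
        return None

    def pass_gate(self, gate: str) -> None:
        """Mark a gate as passed, but only if it is the next one in order."""
        expected = self.next_gate()
        if gate != expected:
            raise ValueError(
                f"{self.name}: expected gate {expected!r}, got {gate!r}"
            )
        self.passed.append(gate)


case = UseCase("support-summaries")
case.pass_gate("intake")
case.pass_gate("data_review")
print(case.next_gate())
```

Enforcing the order in code is a design choice: it makes "we skipped data review to hit the date" an explicit exception rather than a quiet habit.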

Define “high-risk automation” and treat it differently

High-risk automations need their own rules. I treat hiring, credit, and safety-critical workflows (health, industrial, public safety) as high-risk and require extra scrutiny for them: stronger testing, tighter access controls, and clearer human override rules.

A practical CAIO moment: empowered to say “not yet”

“Not yet” is not “no.” It means we fix the risk fast, then ship with confidence.

A Chief AI Officer only helps if they can pause a launch without killing momentum. I make “not yet” come with a checklist, an owner, and a date—so teams feel protected, not blocked.

3) Use Case Prioritization: The Quarter-By-Quarter ROI Reality Check

In my Leadership AI Strategy Guide for 2026, I treat use case prioritization like a quarterly ROI audit, not a brainstorm. Board conversations have shifted from “Should we use AI?” to “Where does AI deliver ROI this quarter?” So I design the AI backlog like a product portfolio: a few bets, a few safe wins, and clear owners.

My 3-number ranking system

I rank every AI use case with three numbers:

- Value potential: revenue lift, cost savings, cycle-time reduction, or risk reduction.

- Feasibility (data + workflow): do we have usable data, and does the work process allow AI to fit naturally?

- Risk level: privacy, compliance, brand risk, model errors, and change risk.

If risk is high, I demand a tighter measurement plan. That means clearer guardrails, more human review, and stronger monitoring from day one.
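To make the three numbers concrete, here is a small sketch of one way to combine them. The 1-to-5 scales, the `value * feasibility` priority score, and the risk threshold of 4 are all assumptions for illustration; the ranking idea does not depend on these exact choices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    value: int        # 1 (low) to 5 (high), assumed scale
    feasibility: int  # 1 (low) to 5 (high), assumed scale
    risk: int         # 1 (low) to 5 (high), assumed scale

    @property
    def priority(self) -> int:
        # Assumed scoring rule: value weighted by how feasible delivery is.
        return self.value * self.feasibility

    @property
    def needs_tight_measurement(self) -> bool:
        # Assumed threshold: risk of 4 or 5 triggers the stricter plan.
        return self.risk >= 4


def rank(candidates: list[Candidate]) -> list[Candidate]:
    """Sort by priority (highest first), breaking ties with lower risk."""
    return sorted(candidates, key=lambda c: (-c.priority, c.risk))


# Hypothetical backlog entries.
backlog = [
    Candidate("meeting-notes-assistant", value=2, feasibility=2, risk=1),
    Candidate("credit-pre-screening", value=5, feasibility=3, risk=5),
    Candidate("proposal-drafts", value=4, feasibility=5, risk=2),
]
for c in rank(backlog):
    flag = " (tight measurement plan)" if c.needs_tight_measurement else ""
    print(f"{c.priority:>2}  {c.name}{flag}")
```

Risk is kept out of the priority score on purpose here: a high-risk use case can still rank well, it just arrives with more guardrails attached.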

Quarter-by-quarter ROI planning

I plan outcomes in 90-day blocks because that’s how leaders fund work. Each quarter, I want a short list of use cases that can prove value fast, plus one longer-term build that compounds. I track ROI with simple metrics tied to the function’s scorecard, not AI vanity metrics.

“If we can’t measure it this quarter, we don’t scale it next quarter.”

Business-led deployment beats “IT builds, business watches”

I’ve learned that adoption is the real bottleneck. Every use case gets a product owner inside the function (Sales, Finance, HR, Ops), not just a technical lead. IT and data teams enable, but the business owns the workflow, training, and daily usage targets.

A small confession (and the lesson)

I once green-lit a “cool” GenAI assistant. It wrote great answers, but nobody used it because it didn’t fit the meeting cadence. The lesson: AI must match how work already happens—or you must change the cadence on purpose.

Quick win pattern: expert-first AI

My fastest wins come from amplifying top performers: draft proposals for the best sellers, summarize cases for the best support leads, or pre-fill analysis for the best analysts. I don’t start by trying to replace experts; I start by multiplying them.

4) Data Platform Readiness (a.k.a. The Unsexy Part That Saves You)

If your data is scattered, your AI is far more likely to hallucinate, or worse, to be confidently wrong in a way that looks “professional.” In my experience, leaders underestimate how fast a polished chatbot can turn messy data into polished misinformation. Data platform readiness is the part of an AI strategy that feels boring, but it’s the part that keeps you out of trouble.

My readiness checklist (the stuff I insist on)

- Data ownership: Every critical dataset needs a named owner who can approve changes and answer questions.

- Lineage: I want to know where the data came from, how it was transformed, and what systems depend on it.

- Access controls: Clear roles, least-privilege access, and audit logs. “Everyone can see everything” is not a strategy.

- Evaluation datasets: A stable set of test questions, documents, and expected answers so we can measure quality over time.

- Golden records: A boring-but-vital definition of the “single source of truth” for key entities (customer, product, policy, employee).
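The evaluation dataset item is the easiest one to start on. A minimal sketch, assuming a frozen list of questions with one expected fact each and a crude substring match as the scoring rule; the questions, the `pass_rate` helper, and the `stub_assistant` stand-in are all hypothetical.

```python
from typing import Callable

# Hypothetical evaluation set: frozen questions with one expected fact each.
EVAL_SET = [
    {"question": "What is the refund window?", "expected": "30 days"},
    {
        "question": "Who approves discounts above 20%?",
        "expected": "regional sales director",
    },
]


def pass_rate(
    answer_fn: Callable[[str], str], eval_set: list[dict[str, str]]
) -> float:
    """Share of cases whose answer contains the expected fact.

    Substring matching is a deliberately crude assumption; real
    evaluations usually need human review or a stronger grader.
    """
    if not eval_set:
        raise ValueError("evaluation set is empty")
    hits = sum(
        1
        for case in eval_set
        if case["expected"].lower() in answer_fn(case["question"]).lower()
    )
    return hits / len(eval_set)


def stub_assistant(question: str) -> str:
    # Stand-in for a real model call, so the sketch runs on its own.
    if "refund" in question.lower():
        return "Refunds are accepted within 30 days of purchase."
    return "I am not sure."


score = pass_rate(stub_assistant, EVAL_SET)
print(f"pass rate: {score:.0%}")
```

The point is stability: because the set does not change between releases, a drop in the pass rate means the system changed, not the test.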

Watch for infrastructure bottlenecks

Even with good data, execution can stall. I look for three common blockers: GPU queues that slow experiments, slow approvals that turn simple access into a multi-week process, and “who has access” limbo where teams can’t ship because permissions are unclear. If you want speed, you need a clear path from request → approval → access → logging.
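One way to see where that path stalls is to timestamp each step and look for the longest gap. The sketch below assumes four event names (`requested`, `approved`, `granted`, `logged`) and made-up dates; neither comes from a specific ticketing system.

```python
from datetime import datetime

# Assumed step names for the request -> approval -> access -> logging path.
STEPS = ["requested", "approved", "granted", "logged"]


def slowest_stage(events: dict[str, datetime]) -> tuple[str, float]:
    """Return the slowest transition and its duration in days."""
    gaps = {}
    for start, end in zip(STEPS, STEPS[1:]):
        elapsed = events[end] - events[start]
        gaps[f"{start}->{end}"] = elapsed.total_seconds() / 86400
    stage = max(gaps, key=gaps.get)
    return stage, gaps[stage]


# Illustrative timestamps for a single access request.
events = {
    "requested": datetime(2026, 1, 5),
    "approved": datetime(2026, 1, 26),
    "granted": datetime(2026, 1, 27),
    "logged": datetime(2026, 1, 27),
}
stage, days = slowest_stage(events)
print(f"slowest step: {stage} ({days:.0f} days)")
```

Run across many requests, the same calculation shows whether the delay is a one-off or a structural approval problem.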

Private sovereign AI (when you can’t use a public endpoint)

Sometimes regulation, contracts, or data sensitivity means you can’t just ship everything to a public model endpoint. In those cases, I plan for private sovereign AI: controlled environments, regional hosting, strict data boundaries, and vendor terms that match your risk profile. The goal is still the same—useful AI—just with tighter guardrails.

I’ve never seen a model outperform a messy incentive structure. Data stewardship is a people problem.

5) Operating Model Skills: What I Look For in AI-Ready Leaders

When I assess AI-ready leadership, I don’t start with “Can you explain machine learning?” I start with operating model skills. In practice, leader skills for AI are decision skills: how you ask better questions, how you challenge metrics, and how you set clear guardrails so teams can move fast without breaking trust.

Decision skills beat technical trivia

I look for leaders who can turn vague goals into testable choices. They ask: What decision will this model support? What is the cost of a wrong answer? Who owns the final call? They also push on metrics. If a dashboard shows “accuracy,” they ask what happens to customer outcomes, cycle time, and risk exposure.

The AI workforce model I build (three layers)

For an AI operating model that scales, I use three layers and I embed them early, not “after the pilot.”

- Business product owners: define the workflow, value, and adoption plan.

- Technical builders (data/ML): design data pipelines, models, and evaluation.

- Risk/legal partners: privacy, IP, compliance, and model governance from day one.

Meeting design is the real operating system

Operating model skills show up in calendars. I reduce status meetings and replace them with tighter forums:

- Decision logs: what we decided, why, and what data we used.

- Experiment reviews: what we tested, what failed, and what we learned.

- Guardrail checks: bias, security, and human review coverage.

Trust drives adoption (or silent failure)

Culture matters more than most leaders admit. If people fear being replaced, they will quietly sandbag the rollout: slow feedback, low usage, and “forgotten” edge cases. I name this risk early and treat it like any other delivery blocker.

“Adoption is a trust problem before it is a tooling problem.”

One of the best adoption wins I saw came after we rewrote a team’s performance agreements to include human-in-the-loop quality checks. We made it clear that using AI did not remove accountability; it changed the work: review, escalate, and improve the system.

6) Scale-Through-Delivery: MLOps Security and “Shipping Without Panic”

In my experience, the end-to-end AI approach rarely breaks in ideation. It breaks at the last mile: deployment, monitoring, and security. That’s where trust is won or lost. If leaders want reliable AI in 2026, we have to treat MLOps as a delivery system, not a side project.

The MLOps security basics I insist on

I keep the basics simple and non-negotiable, especially for GenAI where prompts behave like code and data combined:

- Model registry: one approved place to store models, with clear ownership and status (dev, staging, prod).

- Audit trails: who trained, approved, deployed, and accessed the model—and when.

- Prompt/version control (GenAI): prompts, tools, and system instructions must be versioned like software.

- Access management: least privilege, strong secrets handling, and role-based access for data, models, and endpoints.

- Incident response: a playbook for model rollback, key rotation, and customer communication.
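The first two basics, a registry with status and an audit trail, fit in a few lines. This is a toy in-memory sketch, not the API of any real registry product; `ModelRecord`, its fields, and the example names are assumptions for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Stage names follow the list above.
STAGES = ("dev", "staging", "prod")


@dataclass
class ModelRecord:
    name: str
    owner: str
    stage: str = "dev"
    audit: list[dict[str, str]] = field(default_factory=list)

    def promote(self, actor: str, approved_by: str) -> None:
        """Move the model one stage forward and record who did it and when."""
        index = STAGES.index(self.stage)
        if index == len(STAGES) - 1:
            raise ValueError(f"{self.name} is already in prod")
        self.stage = STAGES[index + 1]
        self.audit.append(
            {
                "at": datetime.now(timezone.utc).isoformat(),
                "actor": actor,
                "approved_by": approved_by,
                "to_stage": self.stage,
            }
        )


record = ModelRecord(name="churn-scorer", owner="ops-analytics")
record.promote(actor="builder@example.com", approved_by="risk@example.com")
print(record.stage, len(record.audit))
```

Writing the audit entry inside `promote` means a stage change cannot happen without leaving a trace, which is the property I care about most.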

Continuous measurement: outcomes + model health

I don’t accept “the model is accurate” as a success metric. I track business outcomes alongside model metrics, so we can ship without panic and still stay accountable.

| Business | Model/Ops |
|---|---|
| Conversion lift, time saved, fraud reduced | Drift, latency, cost per transaction |
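Drift is the least intuitive metric in that table, so here is one common way to put a number on it: the Population Stability Index (PSI), computed over binned proportions of a feature or score. This is a sketch with made-up bin values, and PSI is only one of several drift measures; I am not claiming it is the right one for every model.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index over pre-binned proportions.

    `expected` is the baseline share per bin, `actual` the current share.
    `eps` guards against log(0) when a bin is empty.
    """
    if len(expected) != len(actual):
        raise ValueError("both distributions need the same number of bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


# Illustrative bins: the baseline is uniform, current traffic has shifted.
baseline = [0.25, 0.25, 0.25, 0.25]
current = [0.10, 0.20, 0.30, 0.40]
drift = psi(baseline, current)
print(f"PSI: {drift:.3f}")
```

Identical distributions score zero and the value grows as they diverge. Teams often set alert thresholds by rule of thumb, so treat any cutoff as a starting point to tune against your own incident history.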

External collaborators: align incentives to impact

Strategic partners can help us scale faster, but I prefer outcome-based gain-sharing. When everyone is paid for impact, not tickets closed, the work stays focused on real value and safer delivery.

Scenario: your best model gets copied into shadow IT

Imagine our top-performing model is exported and embedded into an unapproved tool by a well-meaning team. Now we have unknown prompts, unknown data flows, and no audit trail. If it leaks sensitive data or gives harmful advice, leadership still owns the risk. This is exactly where governance and MLOps meet: approved registries, controlled access, and monitoring that detects “unknown deployments” before they become public incidents.
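Detecting "unknown deployments" can start as a plain set comparison between what the registry has approved and what is actually observed running. The sketch assumes you can get both lists from somewhere (for example a registry export and a network or gateway inventory); the endpoint names are invented.

```python
def unknown_deployments(approved: set[str], observed: set[str]) -> list[str]:
    """Return observed endpoints that are absent from the approved registry."""
    return sorted(observed - approved)


# Hypothetical inventories.
approved = {"churn-scorer.prod", "proposal-drafts.prod"}
observed = {
    "churn-scorer.prod",
    "proposal-drafts.prod",
    "churn-scorer.copy.marketing-tool",
}
for endpoint in unknown_deployments(approved, observed):
    print(f"unapproved deployment: {endpoint}")
```

The hard part in practice is building the observed inventory, not the comparison, which is one more reason controlled access and a single registry matter.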

7) Conclusion: The Sticky-Note Strategy (and My One-Line Rule)

When I step back and look at every AI program that worked (and the ones that didn’t), I keep coming back to a simple “sticky-note strategy.” I write five words on one note and place it where leaders can’t ignore it: governance, data, use cases, skills, delivery. In this Leadership AI Strategy Guide for 2026, those five pillars are the system. If one is missing, the whole thing wobbles. Great models without governance create risk. Strong governance without delivery creates frustration. Clean data without clear use cases becomes an expensive hobby.

To keep myself honest, I use one rule before anything ships. I say it out loud in the room, because it cuts through noise and speed-talk:

“If no one can name the owner, the risk decision, and the KPI, it’s not ready to ship.”

This is the practical bridge between AI ambition and real business value. It also matches what I’ve learned from The Complete Leadership AI Strategy Guide: AI business transformation is not a one-time project. It is a capability. That means you don’t “finish” it; you build it, run it, and improve it. Keep the federated governance model so teams can move fast with clear guardrails. Then keep measuring outcomes quarterly, not yearly, so you can adjust before small issues become big ones.

If you want a next step that is simple and real, run a 2-hour workshop. Bring business, IT, data, risk, and frontline leaders into one room. Map your top 10 AI use cases against the five pillars. You will quickly see which ideas are ready, which are blocked, and which are just not worth it. Then pick two to ship in the next 90 days, with named owners, clear risk calls, and measurable KPIs.

And I’ll end with the human part: the goal isn’t perfect AI. The goal is better work, done with trust.

TL;DR: In 2026, winning AI leaders treat AI as a capability (not a project): use a federated AI governance model with Responsible AI principles, prioritize high-ROI use cases, invest in data platform readiness + MLOps security, build operating model skills, and measure business outcomes continuously.