I didn’t become an “AI person” because I love models—I became one the week our ops team spent three days chasing an invoice exception that should’ve taken three minutes. That tiny fire drill made something painfully clear: operations is where AI either becomes a quiet productivity engine… or another dashboard nobody opens. In this guide, I’m going to lay out the complete operations AI strategy I wish I’d had then: not a shiny vision deck, but a blueprint you can run—use cases, ROI, governance, data foundation, and the execution discipline to ship AI workers that move real KPIs.

1) Why AI is becoming an operations priority (and why I agree)

A quick reality check: ops has the highest upside

In The Complete Operations AI Strategy Guide, the message is simple: if you want fast, provable value from AI, start where work is high-volume, process-driven, and painfully measurable. That is operations. Ops teams live in repeatable steps, clear handoffs, and constant deadlines. That makes AI in operations less “science project” and more “measurable improvement.”

My “ops pain” story: exceptions, rework, and swivel-chair work

I used to think our biggest problem was speed. It wasn’t. It was exceptions. Every exception created rework: someone copied data from one system to another, chased an approval in chat, then updated a spreadsheet “just in case.” That hidden swivel-chair workflow cost us hours, but it never showed up as a line item. It showed up as late shipments, stressed teams, and leaders asking, “Why can’t we see what’s happening?”

Where AI's impact on operational excellence shows up first

When AI becomes an operations priority, I see early wins in three places:

- Cycle time: fewer handoffs, faster triage, and better routing of work.

- Forecast accuracy: cleaner demand signals and smarter exception handling.

- Visibility: real-time status, clearer bottlenecks, and fewer “where is it?” meetings.

These are not vague benefits. They map to metrics ops already tracks, which is why AI strategy for operations can move quickly.

A small tangent: why I don’t start with a model choice

I don’t begin with “Which model should we use?” because that question skips the hard part: defining the workflow, the data, and the decision points. Starting with the process is freeing. It keeps the focus on shipped wins, not demos.

Wild-card analogy: AI as a new shift supervisor

AI is like a new shift supervisor—helpful only if it knows the SOPs and can clock results.

If it can’t follow the standard work, log actions, and improve KPIs, it’s not operational excellence. It’s just noise.

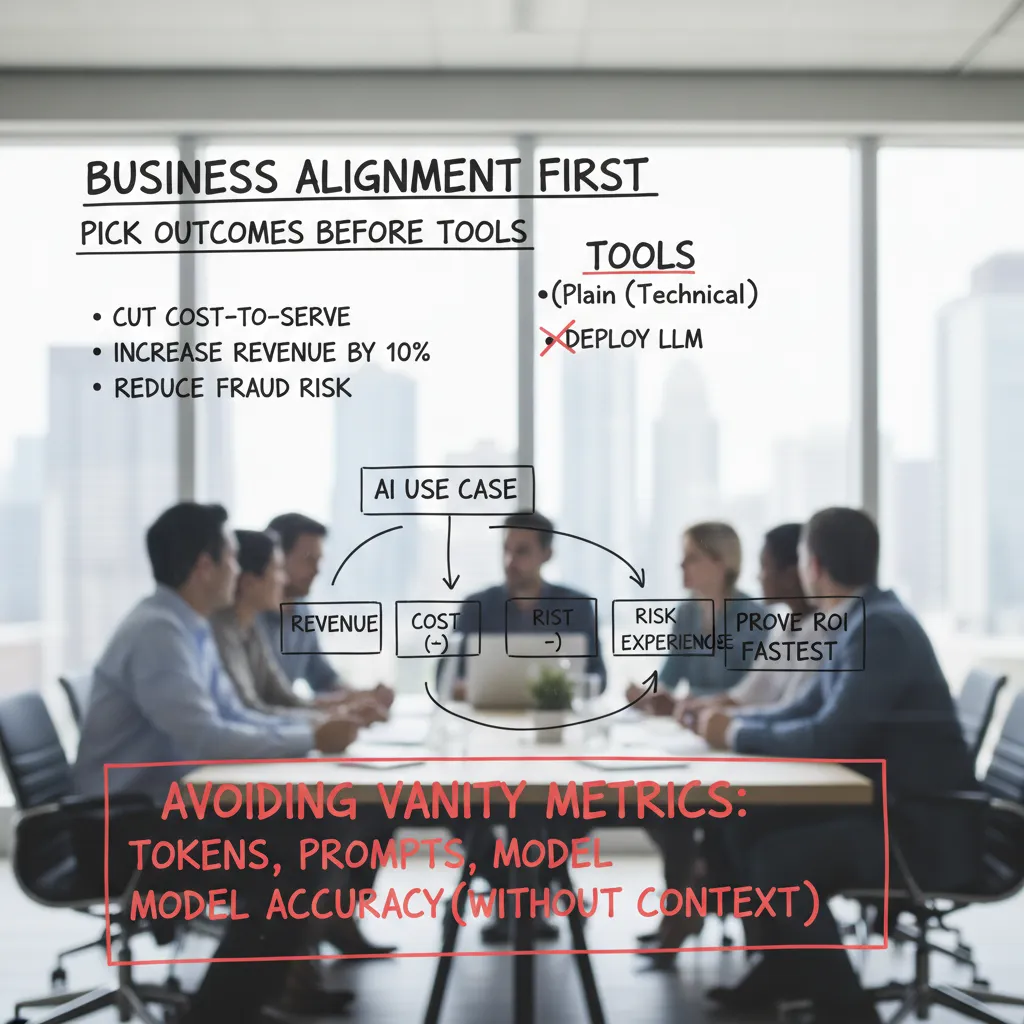

2) Business Alignment First: pick outcomes before tools

When I build an operations AI strategy, I start with the business outcome, not the tool. In plain language, “cut cost-to-serve” beats “deploy an LLM” every time. Tools change fast. Outcomes stay stable. If we can’t say what will improve on the P&L or customer experience, we’re not doing strategy—we’re shopping.

Write outcomes in plain language

I force myself to write goals the way a CFO or ops lead would say them:

- Reduce average handle time by 15%

- Lower chargebacks and disputes

- Increase on-time shipments

- Improve collections recovery rate

Notice none of these require naming a model. AI is the means, not the headline.

Measuring AI ROI: map value before you build

For each AI use case, I map impact to four buckets—then pick the one I can prove fastest:

- Revenue: upsell, retention, faster cash collection

- Cost: fewer touches, less rework, lower vendor spend

- Risk: fewer errors, better compliance, reduced fraud

- Experience: faster response, fewer escalations, higher CSAT

Avoid vanity metrics (my pet peeve)

I don’t want dashboards full of tokens, prompts, or model accuracy without context. Those are engineering stats, not business proof. If accuracy goes up but refunds don’t go down, we didn’t win.

A simple KPI stack I’ve used

This keeps everyone aligned from execs to builders:

- Business KPI (e.g., cost-to-serve)

- Operational KPI (e.g., contacts per order)

- Leading indicator (e.g., % deflected tickets)

- Model/service metric (e.g., precision on intent routing)

Mini hypothetical: collections outreach

If AI improves collections outreach, I look for P&L movement within 30–60 days: higher cash collected, lower DSO (days sales outstanding), fewer agency fees, and reduced write-offs. That's the story that gets an operations AI strategy funded and shipped.
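To make the DSO piece of that story concrete, here is a minimal Python sketch of the standard days-sales-outstanding calculation: accounts receivable divided by credit sales for the period, multiplied by the number of days in the period. The function name and every number below are hypothetical, chosen only to show how a before/after comparison reads; they are not results from a real pilot.

```python
def days_sales_outstanding(accounts_receivable: float, credit_sales: float, days: int = 90) -> float:
    """DSO = (accounts receivable / credit sales in the period) * days in the period."""
    if credit_sales <= 0:
        raise ValueError("credit_sales must be positive")
    return accounts_receivable / credit_sales * days


# Hypothetical quarter before and after an AI-assisted outreach pilot (illustrative numbers only).
before = days_sales_outstanding(accounts_receivable=1_200_000, credit_sales=2_400_000, days=90)
after = days_sales_outstanding(accounts_receivable=1_000_000, credit_sales=2_400_000, days=90)
print(f"DSO before: {before:.1f} days, after: {after:.1f} days, change: {after - before:+.1f} days")
```

With these made-up inputs, DSO falls from 45.0 to 37.5 days on the same credit sales, which is the kind of movement a finance partner can check against the ledger.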

3) How to select AI use cases (I use a “rework radar”)

When teams ask me where to start with an Operations AI strategy, I don’t begin with models. I begin with rework. My “rework radar” is a simple filter: I look for rules + repetition + rework. When all three show up, ROI usually follows because AI can remove busywork, reduce errors, and speed up cycle time without changing the whole business.

The trifecta: rules + repetition + rework

- Rules: there’s a clear “if this, then that” logic (even if it’s messy today).

- Repetition: the task happens daily or weekly, not once a quarter.

- Rework: people fix the same issues again and again (handoffs, missing fields, unclear requests).

Use cases that show up everywhere

Across the playbooks in The Complete Operations AI Strategy Guide, the same high-leverage patterns repeat. My shortlist usually includes:

- Support triage: classify, route, and draft responses for common tickets.

- Quote-to-cash exceptions: flag pricing, terms, or approval anomalies before they stall deals.

- Collections outreach: prioritize accounts and generate compliant follow-ups.

- Recruiting screening: summarize resumes, map skills, and standardize first-pass notes.

- Marketing content operations: briefs, repurposing, QA checks, and asset tagging.

My slightly biased scoring matrix

I score candidates quickly so we stop debating and start shipping. Here’s the matrix I use:

| Factor | What I’m asking |
|---|---|
| Value | Will it move one KPI (time, cost, revenue, risk)? |
| Feasibility | Can we build it in weeks, not quarters? |
| Data readiness | Do we have clean inputs and feedback signals? |
| Integration complexity | How many systems and APIs are involved? |
| Change pain | How much behavior change is required? |
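If you want the matrix to produce a ranking instead of a debate, one option is a weighted score. The sketch below is an assumption, not a standard: the weights, the 1–5 rating scale, and the choice to invert integration complexity and change pain (where a high rating is bad) are all illustrative.

```python
from dataclasses import dataclass

# Hypothetical weights (they sum to 1.0); tune them to your own priorities.
WEIGHTS = {
    "value": 0.35,
    "feasibility": 0.20,
    "data_readiness": 0.20,
    "integration_complexity": 0.15,
    "change_pain": 0.10,
}
# For these factors a high rating is bad, so the rating is inverted (6 - rating) on a 1-5 scale.
INVERTED = {"integration_complexity", "change_pain"}


@dataclass
class UseCase:
    name: str
    ratings: dict[str, int]  # each factor rated 1 (low) to 5 (high)


def score(use_case: UseCase) -> float:
    """Weighted score where higher means 'ship this sooner'."""
    total = 0.0
    for factor, weight in WEIGHTS.items():
        rating = use_case.ratings[factor]
        if not 1 <= rating <= 5:
            raise ValueError(f"{use_case.name}: {factor} rating must be 1-5, got {rating}")
        adjusted = 6 - rating if factor in INVERTED else rating
        total += weight * adjusted
    return round(total, 2)


candidates = [
    UseCase("Support triage", {"value": 4, "feasibility": 5, "data_readiness": 4,
                               "integration_complexity": 2, "change_pain": 2}),
    UseCase("Quote-to-cash exceptions", {"value": 5, "feasibility": 3, "data_readiness": 2,
                                         "integration_complexity": 4, "change_pain": 3}),
]
for uc in sorted(candidates, key=score, reverse=True):
    print(f"{uc.name}: {score(uc)}")
```

With these made-up ratings, support triage (4.2) outranks quote-to-cash exceptions (3.35) even though the latter has higher raw value, because data readiness and integration cost pull it down. That is the quick-win logic in numeric form.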

Quick wins: small surface area, not small impact

A quick win doesn’t mean tiny. I aim for a workflow slice that touches one KPI and one team first—like “reduce first-response time in support” or “cut invoice exception handling time in AR.”

Informal rule: if a use case needs five approvals before lunch, it’s not a pilot—it’s a political thriller.

4) Unified Data Foundation: the unsexy hero of scale

In every “Operations AI Strategy” I’ve worked on, the biggest determinant of enterprise AI success is still the same: data platform readiness. Not model choice. Not prompts. Not a flashy demo. If the data foundation is shaky, AI turns into a string of one-off pilots that never ship.

What “good data” means in ops

For operations, “good data” is not a vibe—it’s a set of traits we can test and own:

- Quality: correct, complete, timely, and consistent (same customer, same ID, everywhere).

- Accessibility: the right people and services can read it fast, with clear permissions.

- Integration: shared keys and definitions across systems, not “best effort” joins.

- Governance: clear owners, change control, and someone gets paged when it breaks.

I like to make ownership explicit with a simple table:

| Data asset | Owner | On-call when broken |
|---|---|---|
| Order status events | Supply Chain Ops | Data Platform + Ops |
| Customer master | CRM Admin | RevOps |

Integration: plug into the systems that run the business

AI in operations must connect to the systems of record and the systems of work: ERP, CRM, finance, supply chain tools, and workflow layers. If it can’t read and write where the business actually runs, you get pilot purgatory: great results in a sandbox, zero adoption in production.

My rule: if a human has to copy-paste between systems, an AI worker will trip too.

Small detour: data contracts beat “please update the spreadsheet” culture

Spreadsheets are fine for exploration, but they fail at scale. I prefer data contracts: clear agreements between producers and consumers about schema, freshness, and meaning.

Example contract checks:

- `order_id` is never null

- events arrive within 5 minutes

- status values are from an approved list
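The three contract checks above can be expressed as code that runs on every event. This is a minimal Python sketch under assumptions: the field names `occurred_at` and `received_at`, the approved status list, and the dict-shaped event are all hypothetical, and in production these checks would typically live in whatever pipeline or data-quality tooling you already run.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical contract values mirroring the checks above; the status list is illustrative.
ALLOWED_STATUSES = {"created", "picked", "shipped", "delivered", "cancelled"}
MAX_LAG = timedelta(minutes=5)


def check_order_event(event: dict) -> list[str]:
    """Return contract violations for one order status event (an empty list means it passes)."""
    violations = []
    if event.get("order_id") is None:
        violations.append("order_id is null")
    if event.get("status") not in ALLOWED_STATUSES:
        violations.append(f"status {event.get('status')!r} is not in the approved list")
    occurred, received = event.get("occurred_at"), event.get("received_at")
    if occurred is None or received is None:
        violations.append("missing timestamp")
    elif received - occurred > MAX_LAG:
        violations.append(f"event arrived {received - occurred} after it occurred (limit {MAX_LAG})")
    return violations


now = datetime.now(timezone.utc)
good = {"order_id": "A-1001", "status": "shipped",
        "occurred_at": now - timedelta(minutes=2), "received_at": now}
late = {"order_id": None, "status": "lost",
        "occurred_at": now - timedelta(minutes=12), "received_at": now}
print(check_order_event(good))  # []
print(check_order_event(late))
```

Returning a list of violations instead of raising on the first one lets the producer see every broken rule at once, which makes the conversation about who fixes what much shorter.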

5) AI governance framework without killing momentum

In operations, I’ve learned that risk management governance isn’t red tape; it’s how I sleep at night after launch. When an AI workflow touches customers, money, or sensitive data, “move fast” needs a safety net. The goal is not to slow teams down—it’s to keep shipped wins from turning into expensive rollbacks.

My “guardrails, not gates” approach

I use a simple policy that answers one question: what can teams do without asking? I define three lanes:

- Allowed: low-risk use (drafting internal SOPs, summarizing tickets with no PII).

- Reviewed: medium-risk use (customer-facing text, decision support, vendor models touching operational data).

- Prohibited: high-risk use (autonomous refunds, unapproved data exports, training on customer PII).
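One way to make the three lanes self-service is to turn them into a small classifier that teams run at intake. The attributes below are hypothetical intake questions; the rules mirror the examples in the list, plus one assumption that is not in the list: any use case that touches PII lands in Reviewed even if it is internal.

```python
from dataclasses import dataclass
from enum import Enum


class Lane(Enum):
    ALLOWED = "allowed"
    REVIEWED = "reviewed"
    PROHIBITED = "prohibited"


@dataclass(frozen=True)
class UseCaseProfile:
    # Hypothetical intake answers; every flag defaults to the low-risk answer.
    autonomous_money_movement: bool = False  # e.g. issuing refunds with no human approval
    unapproved_data_export: bool = False
    trains_on_customer_pii: bool = False
    customer_facing: bool = False
    decision_support: bool = False
    vendor_model_on_ops_data: bool = False
    touches_pii: bool = False


def classify(p: UseCaseProfile) -> Lane:
    # Prohibited checks run first so a single red flag wins over everything else.
    if p.autonomous_money_movement or p.unapproved_data_export or p.trains_on_customer_pii:
        return Lane.PROHIBITED
    # Assumption: anything touching PII goes to review, even if it is internal.
    if p.customer_facing or p.decision_support or p.vendor_model_on_ops_data or p.touches_pii:
        return Lane.REVIEWED
    return Lane.ALLOWED


print(classify(UseCaseProfile()).value)  # allowed (e.g. drafting an internal SOP)
print(classify(UseCaseProfile(customer_facing=True)).value)  # reviewed
print(classify(UseCaseProfile(customer_facing=True, autonomous_money_movement=True)).value)  # prohibited
```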

A lightweight review loop that fits real ops work

Taking a cue from The Complete Operations AI Strategy Guide, I keep governance practical by reviewing only what changes risk. My checklist is short and repeatable:

- Model/vendor risk: what’s the provider’s security posture, retention policy, and SLA?

- Data handling: what data is used, where it flows, and what is logged?

- Monitoring: accuracy, drift, and customer-impact metrics (refund errors, complaint rate).

- Escalation: who gets paged, and how do we pause automation?

- Auditability: keep prompts, outputs, and approvals tied to a ticket ID.
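For the auditability item, the core idea is that every AI output can be traced back to a ticket. Here is a minimal Python sketch, assuming a simple record shape (`ticket_id`, `prompt`, `output`, `model_version`, `approved_by`) and using an in-memory list as a stand-in for a durable, append-only store. All names here are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AuditRecord:
    ticket_id: str
    prompt: str
    output: str
    model_version: str
    approved_by: Optional[str] = None  # None until a human signs off
    created_at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


class AuditLog:
    """Append-only, in-memory stand-in for whatever durable store you actually use."""

    def __init__(self) -> None:
        self._lines: list[str] = []  # one JSON document per record, like a JSONL file

    def append(self, record: AuditRecord) -> None:
        self._lines.append(json.dumps(asdict(record)))

    def for_ticket(self, ticket_id: str) -> list[dict]:
        """Everything the AI did on one ticket, in the order it happened."""
        return [r for r in map(json.loads, self._lines) if r["ticket_id"] == ticket_id]


log = AuditLog()
log.append(AuditRecord("TCK-4821", "Summarize the dispute thread.",
                       "Customer reports a duplicate charge.", "triage-v3", approved_by="j.doe"))
print(log.for_ticket("TCK-4821")[0]["approved_by"])  # j.doe
```

Serializing each record to JSON at write time keeps the log immune to later in-memory changes, which is the property an auditor cares about.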

Clear ownership so decisions don’t stall

- Operations product owner: defines use case, KPIs, and acceptable failure modes.

- IT platform team: integrations, access controls, and deployment standards.

- Security: data classification, threat review, incident response.

- Legal/Compliance: customer terms, regulatory needs, record retention.

Wild-card scenario: wrong refund email

If an AI worker emails a customer the wrong refund, my incident playbook is: stop the automation; contain (identify affected customers); correct (send a human-approved follow-up); reconcile refunds; and run a post-incident review that ends in a rule update (e.g., require human approval above a threshold).
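The two controls in that playbook, a way to stop the automation and a human-approval rule above a threshold, can be sketched as a small guard in front of the refund step. This is illustrative only: the `RefundGuard` class, the 100.00 threshold, and the action names are hypothetical, and `Decimal` is used so currency amounts are not subject to float rounding.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical threshold; the playbook only says "above a threshold".
APPROVAL_THRESHOLD = Decimal("100.00")


@dataclass
class RefundDecision:
    action: str  # "send", "queue_for_human", or "blocked"
    reason: str


class RefundGuard:
    def __init__(self, threshold: Decimal = APPROVAL_THRESHOLD) -> None:
        self.threshold = threshold
        self.paused = False  # kill switch, flipped during "stop the automation"

    def pause(self) -> None:
        self.paused = True

    def resume(self) -> None:
        self.paused = False

    def decide(self, amount: Decimal) -> RefundDecision:
        # The kill switch overrides everything, including small refunds.
        if self.paused:
            return RefundDecision("blocked", "automation paused pending incident review")
        if amount > self.threshold:
            return RefundDecision("queue_for_human", f"amount {amount:.2f} exceeds {self.threshold:.2f}")
        return RefundDecision("send", "within auto-approval limit")


guard = RefundGuard()
print(guard.decide(Decimal("40.00")).action)   # send
print(guard.decide(Decimal("250.00")).action)  # queue_for_human
guard.pause()
print(guard.decide(Decimal("40.00")).action)   # blocked
```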

6) Operating Model and Skills Change: preparing operations teams to scale

In The Complete Operations AI Strategy Guide, the message is clear: you don’t “roll out AI”—you reorganize around it (a little). When I see AI projects stall, it’s rarely because the model is weak. It’s because the operating model still assumes humans do every step, in the same order, with the same checks.

Define the AI workforce model (humans + AI workers)

I treat AI like a new type of teammate: fast, consistent, and sometimes wrong in weird ways. So I define a simple workforce model with clear handoffs and oversight.

- AI workers handle repeatable tasks: triage, drafting, classification, routing.

- Humans handle judgment: approvals, exceptions, customer empathy, risk calls.

- Oversight is explicit: who reviews, when, and what “good” looks like.
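The handoff between AI workers and humans is often easiest to reason about as a routing function. Here is a minimal sketch, assuming a classifier that returns a label and a confidence between 0 and 1. The 0.85 floor and the human-only labels are hypothetical and should come from your own QA data.

```python
from dataclasses import dataclass

# Hypothetical cutoff; calibrate it against reviewed samples, not this example.
CONFIDENCE_FLOOR = 0.85
# Labels that always need human judgment regardless of confidence (illustrative).
HUMAN_ONLY = {"refund_request", "legal_complaint"}


@dataclass
class Classification:
    ticket_id: str
    label: str
    confidence: float  # 0.0-1.0, as reported by whatever classifier you use


def route(c: Classification) -> str:
    """Return 'ai_worker' for routine, confident cases and 'human_review' otherwise."""
    if c.label in HUMAN_ONLY:
        return "human_review"  # judgment calls stay with people
    if c.confidence < CONFIDENCE_FLOOR:
        return "human_review"  # low confidence is treated as an exception
    return "ai_worker"


for c in [Classification("T1", "password_reset", 0.95),
          Classification("T2", "password_reset", 0.60),
          Classification("T3", "refund_request", 0.99)]:
    print(c.ticket_id, route(c))
```

Checking the human-only list before confidence is deliberate: a very confident model should still not make a risk call that the workforce model reserves for people.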

Training that actually sticks

Training can’t be a one-time webinar. I focus on four skills that show up in daily operations AI work:

- AI literacy: what AI can/can’t do, and how errors happen.

- Prompt hygiene: clean inputs, clear constraints, and no sensitive data leaks.

- Exception handling: what to do when confidence is low or outputs conflict.

- KPI ownership: each team owns the metric the AI touches (speed, quality, cost).

Even a small standard helps, like:

When in doubt: verify sources, log the case, escalate to human review.

Governance: steering, sponsor, and product owners

To scale, I set up a cross-functional steering group with an exec sponsor who can unblock policy and budget. Then I assign product owners in each function (Support, Finance, Ops) to manage the backlog, adoption, and KPI impact.

Confession: the hardest part isn’t the tech—it’s getting everyone to trust the new workflow on a Tuesday at 4pm.

That’s why I bake trust into the operating model: visible QA, clear escalation paths, and shared dashboards that prove the AI is helping—not hiding problems.

7) Execution discipline determines success: ship, monitor, improve

The biggest shift I made in operations AI was simple: I stopped treating AI like a one-time project and started treating it like a product. That means scaling through delivery. I plan releases, ship small improvements, and keep a clear backlog. In an Operations AI Strategy, the work is not “done” when the model looks good in a demo. It is done when the change survives real volume, real edge cases, and real users.

Delivery also forces me to get serious about MLOps, security, and deployment. In ops, trust is everything, so I build monitoring, versioning, access controls, and audit trails that match how the business actually runs. I want to know what model version made a decision, who approved it, what data it used, and what happened after. When something breaks, I need fast rollback, not a long investigation.
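Knowing which model version is live, who approved it, and how to get back to the previous one can be sketched as a tiny deployment history. This is a hypothetical in-memory `Deployment` class, not any specific MLOps tool's API; a real setup would persist this state and tie it to the audit trail.

```python
from dataclasses import dataclass, field


@dataclass
class Deployment:
    """Hypothetical record of which model version serves one workflow, with approvals and history."""
    workflow: str
    # (version, approved_by) pairs, oldest first; the last entry is the live version.
    history: list[tuple[str, str]] = field(default_factory=list)

    @property
    def live(self) -> str:
        if not self.history:
            raise RuntimeError(f"{self.workflow}: nothing deployed yet")
        return self.history[-1][0]

    def deploy(self, version: str, approved_by: str) -> None:
        # Refuse anonymous releases so every live version has a named approver.
        if not approved_by:
            raise ValueError("every deployment needs a named approver")
        self.history.append((version, approved_by))

    def rollback(self) -> str:
        """Drop the live version and return the one that is now live again."""
        if len(self.history) < 2:
            raise RuntimeError(f"{self.workflow}: no previous version to roll back to")
        self.history.pop()
        return self.live


triage = Deployment("support-triage")
triage.deploy("triage-v3", approved_by="ops-product-owner")
triage.deploy("triage-v4", approved_by="ops-product-owner")
print(triage.rollback())  # triage-v3
```

Rollback here is a single, boring operation on known state, which is the point: when something breaks, nobody should need an investigation to find the last good version.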

AI adoption at scale only happens when AI workers run end-to-end process automation inside real tools. If the “AI” lives in a separate dashboard, it becomes optional. I embed it where work already happens: ticketing systems, ERP screens, inbox triage, QA checklists, and routing rules. That is how we move from chaos to shipped wins—by making the AI part of the process, not a side experiment.

From there, I run a continuous improvement loop: measure → learn → tweak workflows → retrain → redeploy. I track a few operational KPIs (cycle time, error rate, cost per case, SLA misses) and connect them to model signals (confidence, drift, escalation rate). When metrics slip, I adjust prompts, update rules, improve training data, or change the handoff between humans and automation.
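The measure step of that loop can start as a simple weekly comparison against a baseline. In this sketch the metric names, the 10% tolerance, and the use of a drop in mean confidence as a rough proxy for drift are all assumptions; real drift detection usually compares input or score distributions rather than a single average.

```python
# Hypothetical metric names; lower is better for all of them except mean_confidence.
HIGHER_IS_BETTER = {"mean_confidence"}
TOLERANCE = 0.10  # flag a metric if it moves more than 10% in the wrong direction


def slipped_metrics(baseline: dict[str, float], current: dict[str, float],
                    tolerance: float = TOLERANCE) -> list[str]:
    """Return a readable line for each metric that got worse by more than the tolerance."""
    flagged = []
    for name, base in baseline.items():
        if base == 0 or name not in current:
            continue  # skip metrics that cannot be compared as a relative change
        change = (current[name] - base) / base
        worse = -change if name in HIGHER_IS_BETTER else change
        if worse > tolerance:
            flagged.append(f"{name}: {base:g} -> {current[name]:g} ({change:+.0%})")
    return flagged


baseline = {"cycle_time_hours": 6.0, "error_rate": 0.020,
            "escalation_rate": 0.15, "mean_confidence": 0.90}
this_week = {"cycle_time_hours": 6.2, "error_rate": 0.028,
             "escalation_rate": 0.22, "mean_confidence": 0.78}
for line in slipped_metrics(baseline, this_week):
    print(line)
```

With these illustrative numbers, cycle time stays within tolerance while error rate, escalation rate, and mean confidence are flagged, which would point the next tweak at the model and handoff rather than at throughput.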

My closing thought is that the best ops AI strategy is boring in the best way. It shows up in weekly KPI reviews, release notes, and steady reliability gains. If we can ship, monitor, and improve with discipline, the wins compound—and the chaos fades.

TL;DR: Operations is leading AI adoption in 2026 because it’s packed with repeatable work. Start with measurable business outcomes (not vanity metrics), build a unified data foundation, prioritize high-ROI use cases, set up an AI governance framework, and ship AI workers through disciplined delivery (MLOps + security) that plugs into ERP/CRM/workflows.